Pointless Technology and E-waste


The idea for this rant about, sorry, thoughtful review of conspicuous consumption in a world fast running out of resources to exploit, thanks to the almost daily advances in ‘technology’, came from a TV advert for the latest ‘must-have tech’: a voice-operated coffee machine. On seeing this I naturally assumed it automatically ordered beans on the Internet, took delivery at the front door, filled itself with water and milk and then sat there waiting patiently for my command to make coffee. Actually, I expected it to assess my mood and thirst with its wireless thought-sensor, and using ‘AI’, work out the precise moment to switch on without me having to say a word. To my utter disappointment, I found it does none of these things. You can tell ‘Alexa’ how you like your coffee, but that mostly depends on the pod inserted into the machine. Oh, and you can ask Alexa to operate any other home automation devices you may possess, should you wish to talk to the ‘fridge or turn the room lighting green.

Given the growing world crises of atmospheric warming, energy shortages and plastic pollution, can the human race continue to be obsessed with owning the latest gadget technology? How much of it do we really need? Is more recycling the answer or something more radical like, er, consumers not buying all this junk in the first place?

Consumables

Not so long ago that word referred to basic products that were ‘consumed’ on a regular basis: essentials like food and household cleaning materials. People went shopping at least once a week, primarily because most fresh food cannot be stored for much longer than that. Food and household product producers always have a guaranteed market because we all need to eat and keep ourselves clean. Small suppliers became big suppliers of well-known brands, many of which are still selling in quantity, largely unchanged, a hundred years after they were first formulated. Retail prices were kept low by economies of scale, helped by the fact that remaining unchanged for generations of customers was actually a desirable feature. It still is, for those types of products.

Early examples of what we now call ‘high-tech’ products were not considered to be consumable. Cars, radios and televisions were capital purchases: things you expected to keep for many years. This meant they were built to last, and the relatively low numbers built and purchased kept them very expensive. Nothing much changed until the invention of the transistor, when bulky and fragile thermionic valves (‘tubes’) were replaced by these cheap, tiny, robust, low-voltage bits of semiconducting germanium or silicon. Add transistors to the mix of similarly miniaturised passive components, printed circuit boards instead of wiring, mass-produced plastic enclosures instead of woodwork, and there you have it: the proverbial ‘game-changing’ technology kickstarting consumer electronics. That’s how we got started on our present path leading to a planet depleted of all resources and poisoned with toxic waste. Oh, dear. That really was a rant. Surely we can look forward to a better future than that? Before looking for solutions, we must examine more closely how the technological revolution of the 1950s and 60s turned sour.

The Flattening Curve

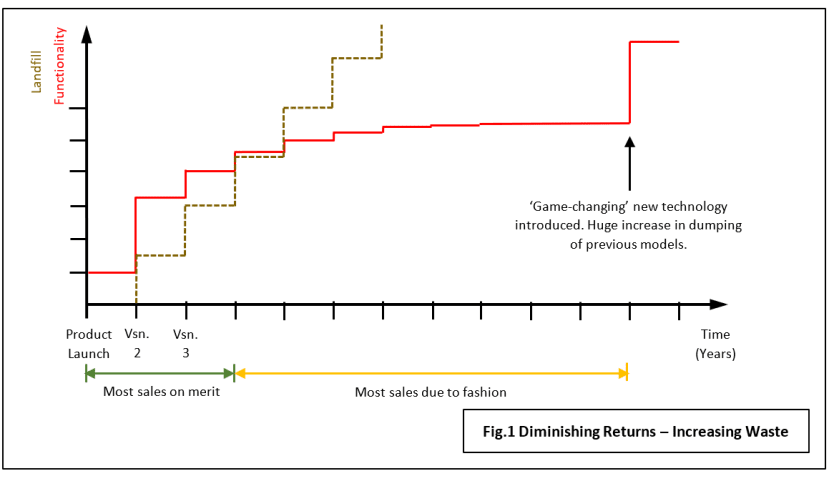

It all comes down to the hidden cost of those economies of scale which enabled mass-production of non-essential high-tech consumer goods. By non-essential I mean not needed for survival; only necessary for maintaining an arbitrary ‘standard of living’ or ‘lifestyle’. Take a look at the graph in Fig.1, which shows how a new sort of product, e.g. the mobile phone, is improved over time to keep it fresh and up-to-date. The red line indicates that the improvements occur as step-changes at regular intervals as new versions are introduced each year. Sticking with the mobile phone example, when the first ‘pocket’ phone was launched it only provided the functionality of a land-line instrument – without the cable. Subsequent versions were digital and started incorporating features of the Personal Digital Assistants (PDA) then commonly available, like the Palm Pilot. Year by year they acquired display screens, then high-resolution colour and of course connection to the Internet. But all the time each actual increase in functionality was getting smaller and smaller. Eventually, the yearly upgrade sees customers dumping perfectly good equipment, buying the latest models for little more than cosmetic changes (note the change to present tense). The curve has flattened out. The brown line, recording the wastage, just keeps on rising. If customers’ buying habits followed any sort of logic, they would stop throwing money away like this, but they’re human, and they won’t.
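The shape of the two lines in Fig.1 can be reproduced with a toy model. This is a minimal sketch under loudly stated assumptions, not real data: each yearly model is assumed to add a functionality gain half the size of the previous one (the hypothetical `shrink` parameter), and every upgrade after the first is assumed to send one old handset to the waste pile (the hypothetical `units_per_upgrade` parameter).

```python
# Toy model of Fig.1 (hypothetical parameters, NOT measured data):
# functionality rises in yearly steps that shrink geometrically,
# while cumulative waste grows by a fixed amount with every upgrade.

def flattening_curve(years: int, first_gain: float = 1.0, shrink: float = 0.5,
                     units_per_upgrade: float = 1.0):
    functionality = []
    waste = []
    level = 0.0
    discarded = 0.0
    gain = first_gain
    for year in range(years):
        level += gain                       # step-change when the new model arrives
        gain *= shrink                      # assumed: each step smaller than the last
        if year > 0:
            discarded += units_per_upgrade  # assumed: last year's model is thrown away
        functionality.append(level)
        waste.append(discarded)
    return functionality, waste


func, waste = flattening_curve(6)
for year, (f, w) in enumerate(zip(func, waste), start=1):
    print(f"year {year}: functionality {f:.3f}, cumulative waste {w:.0f}")
```

With these assumed defaults, functionality can never exceed `first_gain / (1 - shrink)`, i.e. 2.0, however many upgrades are bought, yet the waste count rises by one every single year: the flattening red line and the ever-rising brown line.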

Eventually, some game-changing improvement in design occurs, hopefully before customers tire of paying to exchange the phone they bought last year for one of a different colour or shape! The big advance came with the Apple iPhone: launched by a computer manufacturer, it rendered all other phones obsolete overnight. Big players like Nokia and Blackberry were doomed, not because Apple had made some great technological advance, but because Apple realised that the old ‘standard’ mobile phone format of tiny screen and numeric keypad was incompatible with the new ‘Smartphone’ functionality. Up until the iPhone appeared, most, if not all, of the advances in mobile phone technology, such as video playback and access to websites, were ‘pointless’ because the Human-Machine Interface (HMI) was so primitive. Nobody wanted to watch a movie on a 3cm square screen, for instance, and text messaging was very awkward using what was basically a calculator keypad. The iPhone set the standard for smartphones and everybody followed it. The networks underneath advanced in similarly big steps: switching from analogue to digital was a big leap; the change from GSM (2G) to UMTS (3G) protocols made web-surfing a lot faster, and the introduction of 4G made Internet access faster still. The trouble is, the functionality curve is flattening out again: 5G will make little perceptible difference for most purposes; further increases in screen resolution will go largely unnoticed, as will the use of ever more powerful processor chips. The most obvious improvements are to the cameras (more of them, as a visible indication of your wealth). Here are some more everyday items with flattening functionality curves:

- The car. The basic format of the personal automobile was largely settled by the 1950s. Since then, improvements in functionality have been incremental and latterly mainly cosmetic or peripheral (in-car entertainment, for instance, seemingly of greater importance to buyers than performance or safety).

- The Desktop PC. Customers for the first IBM PC could choose between a monochrome high-resolution display for text only, and a 4-colour low-resolution screen for graphics. The PC XT and AT models followed, bringing big improvements in colour screen resolution that rendered monochrome obsolete. The original Intel 8088 microprocessor chip was replaced by the 80286, improving processing speed enough to make Graphical User Interfaces (GUI) such as Microsoft Windows and Digital Research GEM just about usable. IBM had given the PC an ‘open’ architecture, and by now ‘PC clones’ from other manufacturers were easily outselling IBM products on price. These other companies took up the baton of PC development, with screen pixel/colour resolution improving until it exceeded that of HD TV some years ago. Intel continued to supply ever more powerful MPUs: after the 80286 came the 80386 and the 80486, the latter gaining on-chip floating-point maths hardware. Intel could not trademark a number series and was concerned that another chip manufacturer, AMD, was competing heavily with its Intel-compatible lookalikes. So the 80586 was named ‘Pentium’ instead. By this time, I would suggest that the PC functionality curve had flattened out. After all, the PC was primarily sold as an office computer running word-processor, spreadsheet and database software. Screen resolution was more than adequate for viewing documents, the processor fast enough for most spreadsheet maths and hard-drives had ample capacity for storing big databases. Internet surfing was now limited by the external communication bandwidth, not the computer. Still, MPU chip development continues apace with the focus on multi-core devices: essentially cramming more and more ‘cores’ onto a single piece of silicon. For most users, I contend that multi-coring is pointless technology, only of benefit in highly specialised scientific applications and video games.
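The claim that extra cores rarely help everyday software can be made concrete with Amdahl's law, a standard result that is not part of the original article: if only a fraction p of a job can run in parallel, n cores give an overall speedup of 1 / ((1 - p) + p / n), which can never exceed 1 / (1 - p). The sketch below assumes two hypothetical workloads, one 20% parallelisable (standing in for office software) and one 95% parallelisable (standing in for scientific or games code); both fractions are illustrative assumptions, not measurements.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: overall speedup when only `parallel_fraction` of the
    work can be spread across `cores`; the remainder still runs serially."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / cores)


# Hypothetical workloads (assumed fractions, for illustration only).
for p in (0.2, 0.95):
    speedups = [round(amdahl_speedup(p, n), 2) for n in (1, 2, 4, 8, 16)]
    print(f"parallel fraction {p}: speedup on 1/2/4/8/16 cores = {speedups}")
```

Under these assumptions the 20% job can never run more than 1.25 times faster than on a single core, however many cores are added, whereas the 95% job keeps gaining well beyond 8 cores.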

At this point, chip makers may claim that the massively powerful MPUs are necessary to enable the coming revolution in Artificial Intelligence. Perhaps. But much of the ‘AI technology’ being added to existing MPU chips is little more than classic DSP hardware for fast execution of Multiply-Accumulate (MAC) instructions. It may be ideal for realising digital Convolutional Neural Networks (CNN), but it is of little use for analogue Spiking Neural Networks (SNN), and it’s the latter that hold the most promise for future large-scale AI applications. These huge chips may also turn out to have far shorter lives than their predecessors: naturally occurring cosmic sub-atomic particles can wreak havoc with their ultra-small internal structures. More potential waste.
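To see why MAC hardware suits CNNs so well, here is a minimal Python sketch showing that the core of a convolution layer is nothing but repeated multiply-accumulate steps, acc = acc + a × b. It is an illustration, not any chip's actual instruction set; the `mac` and `conv1d` names are hypothetical, and like most CNN frameworks the function really computes cross-correlation (the kernel is not flipped).

```python
def mac(acc: float, a: float, b: float) -> float:
    """One multiply-accumulate step, the operation a DSP 'MAC' instruction performs."""
    return acc + a * b


def conv1d(signal, kernel):
    """'Valid' 1-D convolution (strictly cross-correlation, as CNN layers
    compute it), built entirely from repeated MAC steps."""
    k = len(kernel)
    out = []
    for i in range(len(signal) - k + 1):
        acc = 0.0
        for j in range(k):
            acc = mac(acc, signal[i + j], kernel[j])  # inner loop: pure MACs
        out.append(acc)
    return out


print(conv1d([1, 2, 3, 4, 5], [1, 0, -1]))
```

For the input [1, 2, 3, 4, 5] and kernel [1, 0, -1], every output is x[i] - x[i+2] = -2, so this prints `[-2.0, -2.0, -2.0]`. Dedicated ‘AI’ accelerators essentially run that inner-loop step in hardware, many at a time.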

I believe there are many more examples to be found of technological progress in terms of functionality eventually leading to resource wastage. How about the humble vacuum cleaner? Its huge step forward came when ‘bagless’ technology was invented. It could be argued that functionality had been flat for a long time before, and has continued flat ever since that great leap. These machines are now beacons of pointless technology with their laser beams to illuminate the dust and colour bar-graph displays telling you how many particles of a particular size are shooting up the pipe in real-time. Well, why not? They’ve got to justify the inclusion of a microprocessor somehow, right?

A Badge of Dishonour

There was a time when disposing of a perfectly serviceable item and replacing it with the ‘latest model’ just for the sake of fashion or to flag your wealth seemed acceptable. There was a time when we didn’t worry about the mountains of waste going into landfill or the damage done to ocean wildlife by all the dumped plastic. There was a time when the natural resources needed to make these things seemed limitless. Even now, new models of manufactured goods have features that readily identify their status; the first smartphones had a single camera lens prominent on the back. Current up-market models have three or four. These status symbols are becoming badges of dishonour.

It could be that in the future ‘Conspicuous Consumption’ will become socially unacceptable along with pointless technology. Perhaps instead, a culture of sustainability, reliability and repairability will prevail. What worries me is what it will take to bring about that change: our society seems to treat manufactured goods as lifestyle status symbols, rather than as tools to create a better life. Soon, we are told, we will all be living in a virtual world. The ‘real’ world will be run by robots, servicing our non-virtual needs for things like food. Maybe one day they’ll get fed up with this task and switch us off.

If you're stuck for something to do, follow my posts on Twitter. I link to interesting articles on new electronics and related technologies, retweeting posts I spot about robots, space exploration and other issues. To see my back catalogue of recent DesignSpark blog posts type “billsblog” into the Search box above.
