Industrial AR Brings Opportunities for Businesses and Workers

The world wasn’t quite ready for Google Glass’s vision of always-on, everyday Augmented Reality (AR). Google’s online promotions painted an enticing picture of a hipster lifestyle continuously assisted by in-glass diary prompts, contact information, follow-the-line navigation to appointments, location-based annotations, and more. However, many people were uncomfortable with the idea of being recorded continuously by Glass wearers.

Since then, Glass has gone enterprise, and other smart eyewear such as Microsoft HoloLens 2 is also being positioned for industrial applications. While consumer-oriented AR apps for purposes such as gaming (for example Pokémon Go) and retail (IKEA Place) leverage the ubiquity of the smartphone platform, augmented reality glasses, or smart eyewear, fit extremely well in the industrial scene. Two reasons for this are that wearers can keep their hands free and that the glasses always capture the wearer’s perspective accurately. Both are important in industrial use cases, which include product design, assembly, service and maintenance, logistics, and remote assistance.

AR Principles

An AR application enhances the view through the lens of the smart eyewear (or smartphone camera) by adding multimedia content in the field of view. This can be done in several ways. Four important AR techniques in use today are:

- Marker-based AR uses a camera to sense a visual marker in the field of view and overlays content or information in an appropriate position and orientation. The marker is a simple pattern such as a QR code, which is easy to recognise using minimal processing power.

- Markerless AR uses data from GPS or dead-reckoning sensors (accelerometer, gyroscope, velocity meter) to overlay AR on nearby objects. Typical uses include mapping directions or location-based services on mobile devices.

- Projection-based AR projects an image onto a real-world surface (or a hologram into mid-air) and detects human interaction with the image, such as with a keypad projected on the user’s hand.

- Superimposition-based AR recognises an object and replaces it with a digital object or an augmented view of the same object.
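As an illustration of the marker-based technique in the list above, the following is a minimal sketch of how an app might work out where to draw flat content once a square marker has been found. It assumes a separate detector has already returned the marker's four corner pixels in a known order; the function name `overlay_placement` and the corner ordering are hypothetical choices made for this example, not part of any particular AR framework.

```python
import math


def overlay_placement(corners):
    """Estimate where to draw 2D content over a detected square marker.

    corners: four (x, y) pixel points ordered top-left, top-right,
    bottom-right, bottom-left as seen in the camera image (y grows downwards).
    This ordering is an assumption of the sketch.
    Returns (centre_x, centre_y, rotation_degrees, edge_length_pixels).
    """
    if len(corners) != 4:
        raise ValueError("expected exactly four corner points")
    (tl_x, tl_y), (tr_x, tr_y) = corners[0], corners[1]
    # The centre of the marker is the average of its four corners.
    centre_x = sum(x for x, _ in corners) / 4.0
    centre_y = sum(y for _, y in corners) / 4.0
    # In-plane rotation is the angle of the marker's top edge in the image.
    rotation = math.degrees(math.atan2(tr_y - tl_y, tr_x - tl_x))
    # The top-edge length gives a scale factor for the overlaid content.
    edge = math.hypot(tr_x - tl_x, tr_y - tl_y)
    return centre_x, centre_y, rotation, edge


# An upright marker 100 pixels wide, with its top-left corner at (100, 100).
upright = [(100, 100), (200, 100), (200, 200), (100, 200)]
print(overlay_placement(upright))
```

This only handles position, in-plane rotation and scale. A real application would typically also recover the marker's full 3D pose so that content tilts correctly when the marker is viewed at an angle.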

In each case, the AR app is required to identify content for the user to interact with, and determine how, where, and when to display it. Simultaneous Localisation and Mapping (SLAM) uses data from the camera and from motion sensors integrated in the eyewear, such as the accelerometer, compass, and gyroscope, to ensure that digital objects appear in the right place and orientation within the user’s field of view, and that their appearance adjusts appropriately as the user changes location.
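To show why the device's estimated position and heading matter, here is a small sketch of the final rendering step: projecting a world-anchored 3D point into screen pixels with a simple pinhole camera model. It is not SLAM itself; it assumes a tracking system has already supplied the camera position and yaw. The function name, the axis conventions, and the focal length and screen size in the usage lines are all assumptions made for illustration.

```python
import math


def project_to_screen(point, cam_pos, cam_yaw_deg, focal_px, screen_centre):
    """Project a world-anchored 3D point into pixel coordinates.

    Assumed axes: x to the right, y downwards, z forwards at zero yaw.
    Positive yaw turns the camera to the right about the vertical axis.
    Returns (u, v), or None when the point is behind the camera.
    """
    yaw = math.radians(cam_yaw_deg)
    # Offset of the point from the camera, in world coordinates.
    dx = point[0] - cam_pos[0]
    dy = point[1] - cam_pos[1]
    dz = point[2] - cam_pos[2]
    # Rotate the offset into the camera's own frame.
    x_cam = dx * math.cos(yaw) - dz * math.sin(yaw)
    z_cam = dx * math.sin(yaw) + dz * math.cos(yaw)
    if z_cam <= 0:
        return None  # behind the viewer, so nothing to draw
    # Pinhole projection: divide by depth, then scale and shift to pixels.
    u = screen_centre[0] + focal_px * x_cam / z_cam
    v = screen_centre[1] + focal_px * dy / z_cam
    return u, v


label = (0.5, 0.0, 2.0)  # a label anchored 2 m ahead and 0.5 m to the right
print(project_to_screen(label, (0.0, 0.0, 0.0), 0.0, 800.0, (640.0, 360.0)))
# After the wearer steps 0.5 m to the right, the same label is straight ahead.
print(project_to_screen(label, (0.5, 0.0, 0.0), 0.0, 800.0, (640.0, 360.0)))
```

Because the label is fixed in the world rather than on the screen, its pixel position changes whenever the pose estimate changes, which is why tracking accuracy directly affects how stable the overlay appears.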

Complex functions like SLAM underpin the features of AR developer frameworks, such as tracking, object and image recognition, and 3D rendering, which help developers create AR experiences on their chosen platform. Well-known frameworks include Wikitude SDK and PTC Vuforia, which work with smart eyewear platforms, mobile platforms, and others such as Windows and Qt. Plugins such as InGlobe ARMedia help end users create AR experiences using 3D content they already own, such as CAD models of their products or components.

Industrial Use Cases

Industrial AR (IAR) has potential uses throughout the value chain. As 3D models of new products are created and refined, AR allows a detailed appraisal in context before committing to building physical prototypes. Teams can zoom quickly from fine detail to the bigger picture to assess aspects such as usability or access to serviceable parts. Similarly, designers who work on a larger scale, such as architects or city planners, can use AR to judge the impact of their ideas quickly and assess alternatives at minimal cost.

When it comes to assembling products, factory workers can accomplish complex tasks guided by instructions or images overlaid in real time on the field of view. These can be static or animated, 2D or 3D, and annotated with data such as correct torque settings. The IAR app ensures instructions are presented just in time, in the right places, and in the correct sequence. Each worker can call up the next instruction when ready, using voice or gestures. This saves the time operators might otherwise spend searching for instructions or drawings, and provides the certainty of working with the latest documentation. It also removes the challenge workers face in mentally mapping information from 2D documentation onto the physical workpiece. In addition, assembly errors can be greatly reduced, while workers require less training.
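The step-by-step guidance described above can be pictured as a simple sequencer that releases one instruction at a time and only advances when the worker confirms. The sketch below is purely illustrative: the class names, the `confirm` method (standing in for a voice command or gesture), and the example steps and torque value are hypothetical and not taken from any specific IAR product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    text: str
    torque_nm: Optional[float] = None  # optional annotation shown with the step


class InstructionSequencer:
    """Serve assembly steps strictly in order, one at a time."""

    def __init__(self, steps):
        self._steps = list(steps)
        self._index = 0

    def current(self):
        """Return the step to display now, or None when the job is finished."""
        if self._index < len(self._steps):
            return self._steps[self._index]
        return None

    def confirm(self):
        """Worker signals 'done' (voice or gesture); move on to the next step."""
        if self._index < len(self._steps):
            self._index += 1
        return self.current()


job = InstructionSequencer([
    Step("Fit bracket to housing"),
    Step("Tighten the four bolts", torque_nm=25.0),  # example value only
])
print(job.current().text)
print(job.confirm().torque_nm)
```

Keeping the sequence in the app rather than in the worker's head is what allows each instruction to arrive just in time and in the correct order.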

In-glass guidance can accelerate complex assembly tasks and help eliminate errors. Image: AGCO Corporation.

Similarly, maintenance activities performed on-site or in the field can be assisted and accelerated with just-in-time information and instructions. A visual record of the work carried out can be stored as part of the digital history of each unit serviced.

Quality inspectors can also take advantage of AR to aid visual inspection of products by calling up diagrams and marking any substandard parts or areas that need rework. AR footage can be used to compile an inspection report and automatically generate instructions for remedial action.

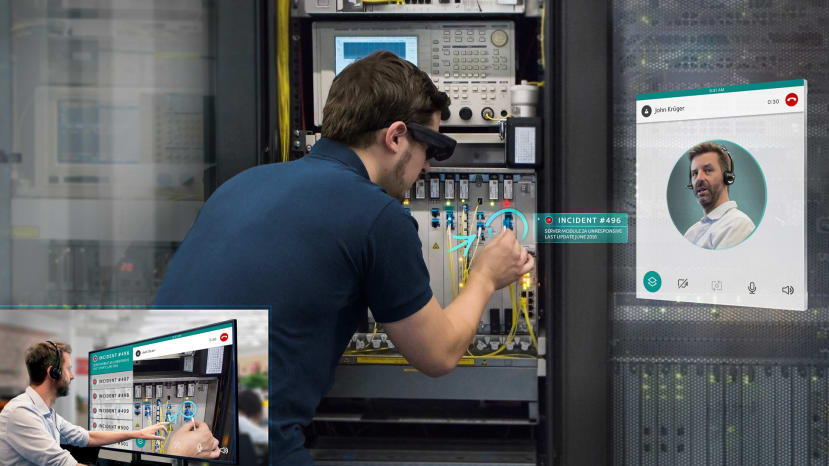

AR can also reach beyond the factory by enabling enhanced remote assistance, empowering the company’s experts to help colleagues or customers working off-site to solve problems or walk through setting up or adjusting equipment. AR lets the expert see exactly what the operator is seeing, understand the situation quickly, and provide the required assistance. The expert can deliver drawings, documents, animations, or video demonstrations directly in the operator’s field of view, and even add 3D markings to guide the operator through any recommended procedures.

Remote assistance can combine expert guidance with AR features. Image: RE’FLEKT.

Other opportunities for industrial AR to drive greater productivity, accelerate turnaround times, and increase revenue include serving dynamic picking instructions directly to smart eyewear for workers in distribution centres. Sales activities can also be simplified and improved, while saving transportation costs, by using AR to create life-sized 3D interactive product demonstrations for prospective buyers of large or high-value equipment.

Logistics activities can be faster and more efficient with AR. Image: Optiscan.

Enhancing Human Capabilities

Could the new technology threaten human workers’ jobs? While technologies such as AI and robotics are sure to change many roles in the immediate and longer term, IAR could potentially protect humans by enhancing their performance through reduced error rates, faster task completion, and reduced training overhead. This could enable human workers to compete more strongly with machines, particularly in roles where natural human advantages such as mobility, superior dexterity, and versatility prevent tasks from being transferred easily to a robot or machine. And, of course, widespread adoption of AR creates new opportunities for developers of tools and content.

By augmenting human performance, industrial AR could drive stronger businesses and healthier coexistence between humans and advanced technology.