In Search of Durable Computing

Wouldn’t it be nice if computers had a lifetime similar to simpler everyday devices?

In previous articles on the theme of sustainability we’ve taken a look at sustainable hardware design and the associated challenges, the implications of software which is critical to the operation of hardware, and also tools for enabling self-repair.

In this article, we turn our attention to how computing platforms might be designed to be far more durable, which is to say that, much like a classic television, radio or telephone of old, they are able to provide useful service for decades. As we’ll come to see, this could provide far-reaching benefits beyond environmental sustainability and cost savings.

Background

Manchester Baby Replica, Geni, CC-BY-SA.

So far we’ve mostly been concerned with the preservation of natural resources, while securing the maximum return on investment and avoiding, or at least postponing, equipment going to landfill. All of these are clearly laudable aims. However, there are additional benefits to having computer systems which may be used many years after their initial development, or even after end of sale, such as enabling information to be retrieved long after it was entered into a particular system.

One of the remarkable things about computing is that, while we are still some way off the centenary of the first stored-program electronic computer (the University of Manchester’s Baby from 1948 is widely recognised as the very first), important details of many computer systems and the software that they ran have already been lost.

Early, physically large computers were destined for the scrap yard once they had become outmoded, with gold plating, copper cabling and the like being recovered. It’s not just the loss of original hardware which presents a problem, as that can often be emulated using modern hardware, but also the loss of software, as old media is thrown out or degrades and succumbs to “bit rot”.

The problem is also not limited to the oldest and most cumbersome systems: there are numerous examples of smaller systems from as recently as the 1980s and 1990s where little hardware remains and much of the associated software appears to have been lost to the mists of time.

Such is the problem that there are numerous projects working to preserve information and software for historic computers, such as Bitsavers and The Internet Archive. There are also museums and academic researchers focused on the task at hand, along with groups such as the Computer Conservation Society, and of course private collectors and enthusiasts.

Levels of abstraction

RISC-V is an open-source instruction set architecture (ISA).

A computer system has numerous layers or levels of abstraction, which allow us to incrementally build up complexity in a methodical manner, with each having an impact on durability.

The fundamental building block of a computer is its instruction set architecture (ISA), such as x86-64 or Arm. ISAs are specified via standards and official vendor documentation, together with manuals for design engineers, compiler writers and low-level programmers.

Building upon ISAs we have microprocessor designs which are tailored for a particular class of application, such as high performance or low power. Building upon these in turn, we might have a system-on-chip (SoC) design which integrates one or more processors into a single chip, together with peripheral controllers and other useful features in support of the intended application.

A computer hardware system may then be constructed around a microprocessor or SoC, integrating things such as RAM, storage and I/O etc. via one or more PCB assemblies.

A hardware platform would typically have firmware, such as a “BIOS” which is responsible for hardware configuration and early device access. This will more often than not load an operating system of some sort via a bootloader program. Then finally we will have our application(s).

Hence there are many points across the hardware and software stack where we might optimise for durability, by making choices which ensure, or at least increase the likelihood, that a system will still be practically usable in years to come. For example, using an open-source operating system, BIOS, ISA or even chip design, as far-fetched as the latter might seem, facilitates the preservation and maintenance of such technologies further into the future.

Platforms

There are numerous examples of computer technologies which have stood the test of time, whether by accident or design. PDP-11 computers were on sale from 1970 into the 1990s and proved popular in industrial applications; there are likely still systems running machinery today.

IBM z/Architecture mainframes provide the backbone of many banking operations around the world, while maintaining superb backwards compatibility, and can trace their lineage all the way back to the System/360 mainframe, announced almost 60 years ago in 1964.

Of course, even the humble PC of today has a strong connection with its forebears and the Intel architecture can trace its roots back to the Datapoint 2200 terminal announced in 1970.

However, while all of these things are impressive, these are proprietary architectures and systems; when products become end-of-life or go out of service, upgrades are inevitable. Were you to discover a failed PDP-11 system on the factory floor today, finding spare parts may present a serious challenge. And of course, PC software compatibility can be somewhat variable at times.

Next, we’ll take a look at a selection of systems which in one way or another embody key aspects of what may be thought of as durable computing.

Framework Laptop

Framework Computer Inc.

The Framework Laptop is the most ambitious attempt yet at making a laptop which anyone can use and which embodies the principles of right to repair. As with the Fairphone smartphone covered in the previous article, Right to Repair and Striking the Sustainability Balance, spare parts can be bought and Framework Computer Inc. provides support resources to facilitate self-repair. Furthermore, it’s possible to buy upgraded mainboards, and CAD and electrical connector information has been published on GitHub under a Creative Commons licence.

When paired with an open-source O/S such as Linux, the Framework Laptop should be able to provide service for many years to come, provided, that is, that parts are still available to buy.

MNT Reform

The MNT Reform laptop was announced a number of years before Framework, with the first beta units shipping in late 2018. Like Framework, it’s possible to buy replacement and upgraded components. However, it’s also a fully open-source hardware design and anyone is free to manufacture compatible and derivative components. The computer is aimed at those with a little more technical expertise and integrates a number of features which invite modification.

One particularly exciting option for MNT Reform is an AMD Kintex-7 FPGA SoM, which enables use with open-source processor implementations such as VexRiscv, a 32-bit RISC-V CPU. This enables unprecedented transparency and provides the opportunity to create custom processor/SoC implementations. It is admittedly a somewhat specialist feature, but it also lends additional durability, as such “soft” CPU implementations could subsequently be ported to future higher-performance FPGAs, or simply to a similar replacement for an end-of-life device.

With the current CPU modules being Arm-based, MNT Reform performance will not be the same as that of an Intel-based Framework laptop. And of course, a laptop powered by an FPGA soft processor would provide greatly reduced performance. However, the priority is clearly to provide a radically open platform, which by extension is particularly durable, rather than the highest-performing one.

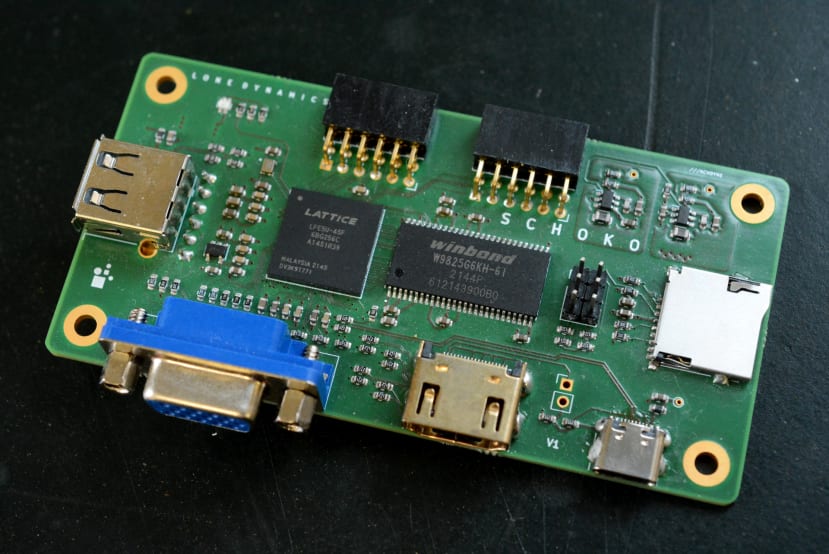

Schoko

Schoko is a member of a family of small computers from Machdyne, whose “focus is on supporting timeless applications (reading, writing, math, education, organization, communication, automation, etc.) with simple, secure, responsive, reliable and repairable hardware”.

The Schoko computer is a simple FPGA-based design, with 32MB RAM and 32MB flash, plus VGA, USB host port, USB-C for power and configuration, and Pmod compatible expansion ports.

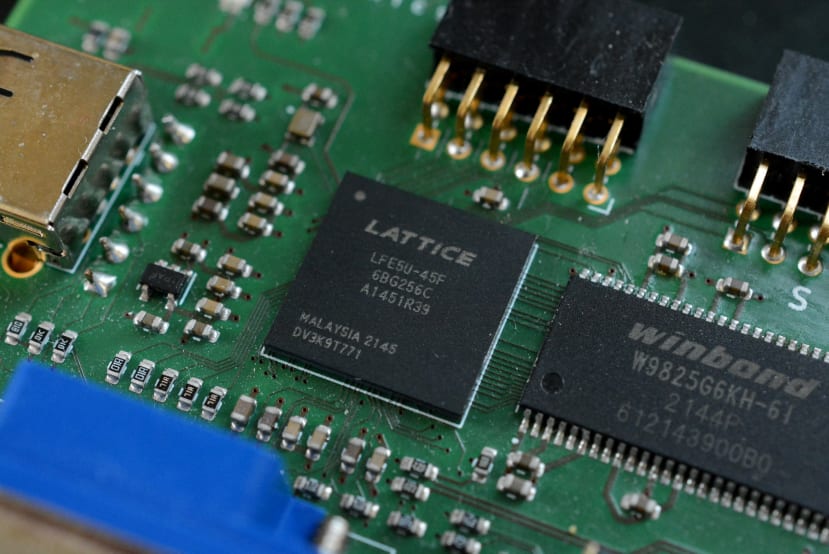

The FPGA used is much smaller and has far fewer resources than the Kintex-7 module available for MNT Reform, but as a result it is also a great deal cheaper. More than this, a standout feature of the Lattice ECP5 device used is that an open-source toolchain is available for it. This means it is possible not only to use an open-source processor/SoC design, but also to use an open-source flow to synthesise the design and generate a bitstream to configure the FPGA.
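To give a feel for what such an open-source flow can look like, here is a minimal Python sketch that assembles the three command lines of one commonly used ECP5 toolchain: Yosys for synthesis, nextpnr-ecp5 for place and route, and ecppack (from Project Trellis) for bitstream packing. The article does not name the tools used for Schoko, so this particular combination is an assumption, as are the file names, the device size flag and the package; treat it as an illustration rather than the project's actual build.

```python
import shlex


def ecp5_build_commands(top="top", device="--45k", package="CABGA256"):
    """Return the command lines for a three-stage open-source ECP5 flow.

    Every value here is a placeholder (assumption): the file names, the
    device size flag and the package are NOT taken from the Schoko project.
    """
    verilog = f"{top}.v"        # HDL source, e.g. exported by an SoC generator
    netlist = f"{top}.json"     # synthesised netlist written by Yosys
    constraints = f"{top}.lpf"  # pin constraints for the target board
    config = f"{top}.config"    # textual FPGA configuration from nextpnr
    bitstream = f"{top}.bit"    # final bitstream produced by ecppack

    return [
        # 1. Synthesis: map the design onto ECP5 primitives.
        ["yosys", "-p", f"synth_ecp5 -top {top} -json {netlist}", verilog],
        # 2. Place and route for the chosen device and package.
        ["nextpnr-ecp5", device, "--package", package,
         "--json", netlist, "--lpf", constraints, "--textcfg", config],
        # 3. Pack the routed configuration into a bitstream.
        ["ecppack", config, bitstream],
    ]


if __name__ == "__main__":
    # Only print the commands; running them requires the tools to be installed.
    for command in ecp5_build_commands():
        print(shlex.join(command))
```

The sketch deliberately only builds and prints the commands, so that it can be read and run without the toolchain present; each list could be passed to `subprocess.run` on a machine where the tools are installed.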

The LiteX framework is used for the SoC design provided for Schoko and this is notable for, amongst other things, utilising a Python-based HDL. Naturally, the SoC runs Linux.

Given their fairly modest capabilities, Schoko and similar designs are perhaps best considered educational and research platforms, while also being useful for simpler tasks or specialist uses.

Collapse OS

Collapse OS on the ZX Spectrum, Dmitry Khodyko.

This one is just a little out there, so please bear with me.

Linux is all very well, but what do you do when the global supply chain collapses as part of some sort of apocalyptic event? The answer is seemingly to adopt a technology scavenging strategy and revert to 8-bit computing! Enter Collapse OS, a Forth operating system and collection of tools and documentation with a single purpose: preserve the ability to program microcontrollers through civilizational collapse.

Collapse OS runs on Z80, 8086 and 6502 machines, amongst others. It has a command line text editor — handy if you’re using a terminal fashioned from a scavenged typewriter! — along with a visual editor and Forth interpreter, plus SPI, SD card, serial and PS/2 keyboard support.

Collapse OS sits at the somewhat extreme end of the pursuit of durable computing, but is nevertheless interesting, at the very least as an exercise in exploring what is possible with very little.

Final words

A common criticism of technology is that it is increasingly disposable and gone are the days when you might buy a telephone, radio or TV which might last a decade or two, if not three. With computing, the situation can be made worse if you find yourself with old media or file formats which can no longer be easily read. Hence durable computing is about more than simply being able to repair hardware, minimise waste and secure the maximum return on investment made.

Right-to-repair and open-source software are two pillars of durable computing and products such as the Framework Laptop are a significant step in the right direction. However, MNT Reform demonstrates how it is possible to go further with open-source hardware added into the mix, which may even be extended as far as the processor and SoC design.

Schoko provides a low-cost, and hence low-barrier, way of getting hands-on with durable computing, additionally showing how a soft processor targeted at an FPGA may also be synthesised using open-source tools. Collapse OS, meanwhile, takes a decidedly pessimistic view of the future ahead of us, one that will never come to pass if we are suitably environmentally and socially minded, but it may have some interesting ideas, or at the very least focuses the mind.
