Brain-controlled multifunctional rolling robot based on OpenBCI-Python-Arduino and the "Disk" system

We are working on a project that uses motor imagery (MI) to control a unique robot that assists humans working in certain conditions. What is special about our project is that we developed a new method of using MI to control devices.

Currently, most widely used EEG BCI devices rely on SSVEP, mainly for VR, while motor imagery is usually used for artificial limbs. These techniques have the following problems:

SSVEP (Steady-State Visual Evoked Potential)

*The user must focus on graphics flickering at specific frequencies, which limits SSVEP to VR applications.

MI (Motor Imagery)

*Currently MI is mostly used with exoskeletons, and the number of command dimensions is limited.

Neither can be used in real-world settings (AR, MR, robotics, etc.) to increase productivity.

How Can We Solve Them?

We developed a new method:

By using the "Disk" system, the user can access commands in multiple dimensions while keeping their attention on the device being controlled.

It can be combined with AR and MR, so it can be used in practical production.

We also designed a BCI-controlled robot specialized in supporting humans.

More BCI applications can also be developed on top of it.

We used the open-source OpenBCI Cyton board (8-channel EEG version). It has the lowest price on the market and a strong, growing worldwide community, and it supports STM32 and Arduino programming, so project resources are easy to share and upgrade. We broadcast the Time Series from the OpenBCI GUI over the LSL (Lab Streaming Layer) protocol.
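To illustrate the receiving side of that LSL broadcast, here is a minimal Python sketch. It assumes the `pylsl` package is installed and that the GUI publishes its Time Series as a stream of type `EEG` (this depends on how the GUI's networking output is configured). The helper names `collect_window`, `open_eeg_inlet` and `demo` are made up for this example and are not part of our project code.

```python
from typing import Callable, List, Optional, Tuple

# One LSL pull: (channel values, timestamp), or (None, None) on timeout.
Sample = Tuple[Optional[List[float]], Optional[float]]


def collect_window(pull_sample: Callable[[], Sample], n_samples: int,
                   max_misses: int = 50) -> Tuple[List[List[float]], List[float]]:
    """Pull up to n_samples (sample, timestamp) pairs from an LSL-style source."""
    samples: List[List[float]] = []
    stamps: List[float] = []
    misses = 0
    while len(samples) < n_samples and misses < max_misses:
        sample, stamp = pull_sample()
        if sample is None:  # timeout: nothing arrived, try again
            misses += 1
            continue
        samples.append(list(sample))
        stamps.append(stamp)
    return samples, stamps


def open_eeg_inlet(timeout: float = 5.0):
    """Connect to the first LSL stream of type 'EEG' (assumed stream type)."""
    from pylsl import StreamInlet, resolve_byprop  # imported lazily
    streams = resolve_byprop("type", "EEG", timeout=timeout)
    if not streams:
        raise RuntimeError("no EEG stream found; is the GUI's LSL output running?")
    return StreamInlet(streams[0])


def demo() -> None:
    """Grab roughly one second of data (the Cyton samples at 250 Hz by default)."""
    inlet = open_eeg_inlet()
    window, stamps = collect_window(lambda: inlet.pull_sample(timeout=1.0), 250)
    print(len(window), "samples,", len(window[0]) if window else 0, "channels")
```

Calling `demo()` while the GUI is streaming should print the size of the collected window. Keeping `collect_window` independent of `pylsl` means it can be exercised with recorded or simulated samples as well.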

A test video:

In cooperation with Project North Star and the OpenBCI community, we designed several versions of a BCI helmet. The latest one is the Project Ariel Supremancy, which combines the open-source Project Ariel mixed reality headset with an OpenBCI EEG device. To our knowledge, no product currently on the market offers the functions of our prototype. By bringing mixed reality into our disk system, the user gets a much better experience and gains more functionality in mixed reality or IoT interfaces.

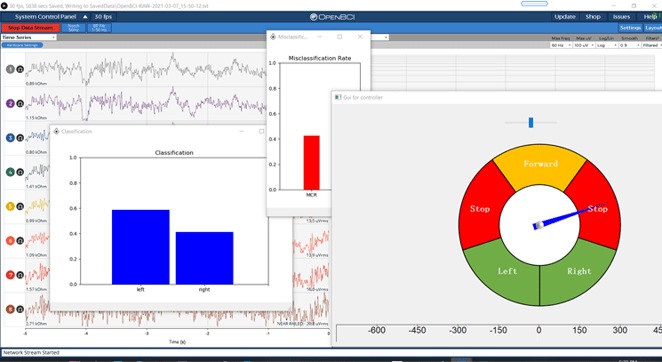

We use the open-source software NeuroPype and OpenViBE to record, classify and train on the LSL signal output by the OpenBCI GUI. We used Python to record the cued training direction together with its time axis. The recorded data is then filtered and used to infer the direction of the user's imagined movement.
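The recording and decision steps can be sketched in plain Python. This is a heavily simplified illustration, not the NeuroPype/OpenViBE pipeline we actually run: it labels each sample with the most recent training cue, then compares mu-band (8-12 Hz) power over two motor-cortex channels. The channel names C3 and C4, the band limits and all function names are assumptions made for this example. The rule relies on the known effect that imagining a hand movement suppresses the mu rhythm over the opposite hemisphere.

```python
import bisect
import cmath
import math
from typing import List, Optional, Sequence, Tuple


def label_samples(stamps: Sequence[float],
                  cues: Sequence[Tuple[float, str]]) -> List[Optional[str]]:
    """Give each sample the most recent cue at or before its timestamp.

    `cues` is a time-sorted list of (time, direction) pairs.
    """
    cue_times = [t for t, _ in cues]
    labels: List[Optional[str]] = []
    for ts in stamps:
        i = bisect.bisect_right(cue_times, ts) - 1
        labels.append(cues[i][1] if i >= 0 else None)
    return labels


def band_power(signal: Sequence[float], fs: float,
               lo: float = 8.0, hi: float = 12.0) -> float:
    """Mean power of the DFT bins whose frequency lies in [lo, hi] Hz."""
    n = len(signal)
    mean = sum(signal) / n  # remove the DC offset first
    total, count = 0.0, 0
    for k in range(1, n // 2 + 1):
        if lo <= k * fs / n <= hi:
            coeff = sum((x - mean) * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(signal))
            total += abs(coeff) ** 2
            count += 1
    return total / count if count else 0.0


def guess_direction(c3: Sequence[float], c4: Sequence[float], fs: float) -> str:
    """Toy rule: weaker mu power over C3 (left hemisphere) suggests 'right'."""
    return "right" if band_power(c3, fs) < band_power(c4, fs) else "left"
```

A trained classifier will be far more reliable than this threshold-free comparison. The sketch only shows how the cue timeline and the filtered signal fit together.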

The disk

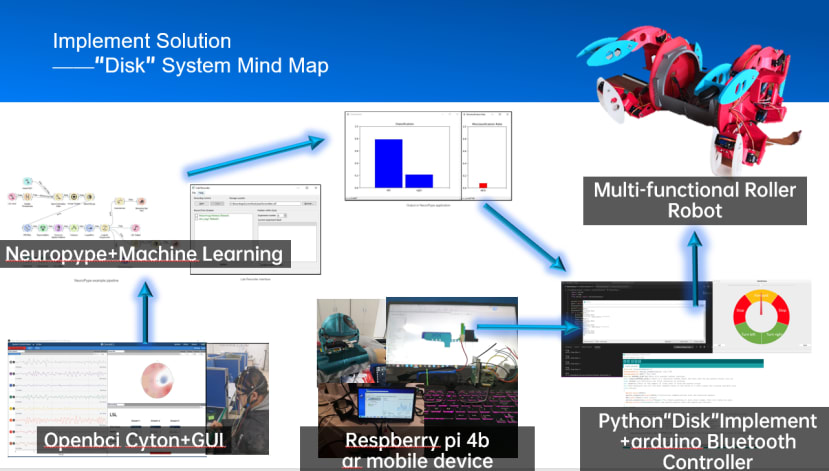
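This article does not spell out how the disk is navigated, so the following Python sketch is only one possible reading: a nested radial menu in which each decoded MI direction selects a sector, and a sector either holds a command or opens another ring. Every sector layout and command name below is invented for illustration.

```python
from typing import Dict, List, Optional, Union

Menu = Dict[str, Union[str, "Menu"]]

# Hypothetical layout: all sector contents and command names are made up.
DISK: Menu = {
    "up": {
        "up": "ROBOT_FORWARD",
        "down": "ROBOT_BACKWARD",
        "left": "ROBOT_TURN_LEFT",
        "right": "ROBOT_TURN_RIGHT",
    },
    "left": {"up": "ARM_RAISE", "down": "ARM_LOWER"},
    "right": "STOP",
}


def select(disk: Menu, directions: List[str]) -> Optional[str]:
    """Walk the disk with decoded directions; return a command or None.

    None means the sequence was invalid or stopped on a sub-menu.
    """
    node: Union[str, Menu] = disk
    for d in directions:
        if not isinstance(node, dict) or d not in node:
            return None
        node = node[d]
    return node if isinstance(node, str) else None
```

With this layout, two imagined directions in a row (for example "up" then "left") reach one of several commands, which is one way a small set of MI classes could address a larger command space.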

Implementation

Raspberry Pi / Rock Pi X AR mobile device

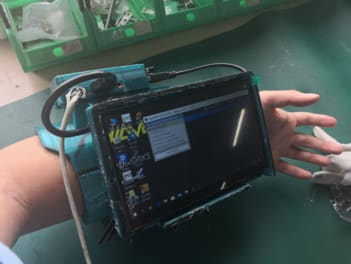

*We managed to install Windows 10 on the Raspberry Pi 4B and the Rock Pi X. Together with an MR headset and a touch-screen gauntlet, this lets the user operate our system on the move.

*Implemented with MR, enabling use in practical production, such as IoT.

*Python, the OpenBCI GUI and NeuroPype all run on the device, making the system mobile and wearable and allowing further applications.

*The Rock Pi X version runs the complete setup, including the OpenBCI GUI and x86 applications such as NeuroPype.

Here is a chart that shows why our project can be interesting. Judge for yourself :)

Project summary:

*BCI technology lets the user send a correct command to the robot even when all other output channels (hands, mouth, etc.) are occupied.

*Developed with Arduino, Python and OpenBCI: easy to develop further and update, open source, low cost, and well suited to spreading the technology.

*With the "disk", human-robot cooperation needs no additional operator: the same person can work and control the robot at the same time.

*Implemented with AR, enabling use in practical production, such as IoT.

A robot designed around BCI technology:

*The robot achieves high mobility and environmental adaptability through its unique rotation-based movement.

*The structure of the robot is highly customizable and capable of multiple tasks, such as supporting astronauts, supporting transport in highland areas, and supporting or replacing human courier delivery.

PowerPoint with videos included:

https://drive.google.com/file/d/12J8RYiCmKoDrVqtpcqL3KOpCqpMMS_d2/view?usp=sharing