Capturing 3D images with a SCANIFY

First of all, a special thank you to Fuel3D Labs for kindly letting us borrow SCANIFY, the company's latest and greatest product. SCANIFY is an amazing device that lets you take 3D photographs in the blink of an eye (for those who don't know, that's a tenth of a second). Its two 3.5-megapixel cameras, aided by three xenon lights, capture a high-definition three-dimensional image generated from the shadows that the flash creates. The simple, slick design is complemented by pleasing ergonomics, creating an experience unrivalled by other 3D scanning equipment, whether cheaper or more expensive. However, nothing is perfect: the process does require a well-lit target within range for the scan to be performed.
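Recovering shape from the shading produced by lights in known positions is the idea behind a well-known technique called photometric stereo. The article doesn't say exactly how SCANIFY combines its two cameras and three flashes, so treat the sketch below as an illustration of the general technique, not Fuel3D's algorithm. It assumes a matte (Lambertian) surface, three known unit light directions, and a point that is lit by all three flashes. Under those assumptions the three brightness readings give three linear equations whose solution is the surface normal scaled by the surface's albedo (its reflectivity). The function names and light directions are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(m: Sequence[Sequence[float]], b: Sequence[float]) -> Vec3:
    """Solve m @ x = b for a 3x3 system using Cramer's rule."""
    d = det3(m)
    if abs(d) < 1e-12:
        raise ValueError("light directions must be linearly independent")
    x: List[float] = []
    for col in range(3):
        # Replace column `col` of m with b and take the determinant ratio.
        mc = [list(row) for row in m]
        for r in range(3):
            mc[r][col] = b[r]
        x.append(det3(mc) / d)
    return (x[0], x[1], x[2])


def normal_from_three_lights(lights: Sequence[Vec3],
                             intensities: Sequence[float]) -> Tuple[Vec3, float]:
    """Hypothetical helper: recover (unit normal, albedo) for one pixel.

    Assumes a Lambertian surface, I_k = albedo * dot(light_k, normal),
    with every light actually illuminating the point (no shadows).
    """
    g = solve3(lights, intensities)  # g = albedo * normal
    albedo = math.sqrt(sum(c * c for c in g))
    if albedo == 0.0:
        raise ValueError("pixel is dark under every light")
    nx, ny, nz = (c / albedo for c in g)
    return (nx, ny, nz), albedo


if __name__ == "__main__":
    # Example unit light directions: one overhead, two tilted.
    lights = [(0.0, 0.0, 1.0), (0.6, 0.0, 0.8), (0.0, 0.6, 0.8)]
    # Brightness a facet with normal (0.6, 0, 0.8) and albedo 1 would show.
    normal, albedo = normal_from_three_lights(lights, [0.8, 1.0, 0.64])
    print(normal, albedo)
```

The model's assumptions line up with the behaviour described later on: shiny surfaces break the matte-surface assumption, and a point that sits in shadow for one of the lights (as deep inside a cavity) no longer fits the linear equations, so the recovered shape goes wrong.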

We've had SCANIFY in use at RS for a couple of weeks now, producing great results from random scans of items and faces around the office. Some of these scans have been imported into DesignSpark Mechanical and then edited to reflect a few of my fond childhood memories, such as Lego and Star Wars. One lucky person from the office had his face scanned onto a Lego head, whereas I pulled the short straw and had mine scanned so it looked as if it were made of carbonite, like Han Solo in The Empire Strikes Back. These scans were exported as STL files, scaled down and then 3D printed to see how easy it was to create a physical model from a virtual 3D photograph. The whole process was fairly straightforward, although there were a few hiccups, as you'd expect when experimenting with a totally new product. The problems I encountered were merely initial user issues: lighting while taking the shot, transferring the models between software programs, and struggling to stitch multiple scans together. Fortunately, it all became second nature after a couple of scans.

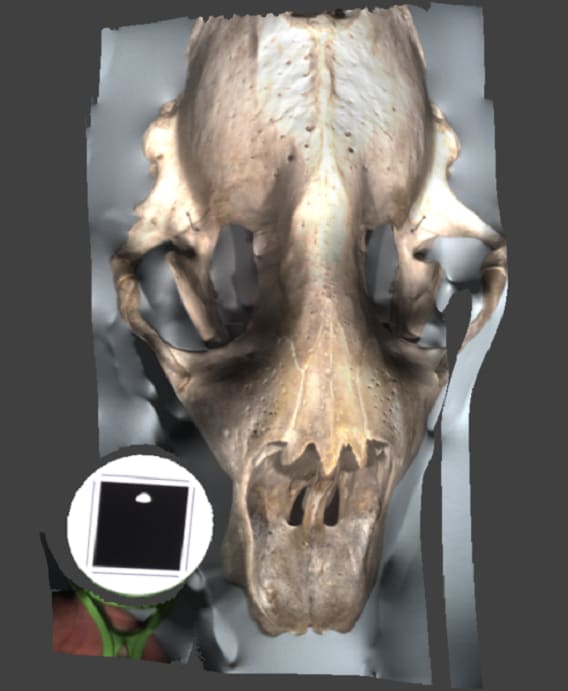

After these initial projects were completed, I thought it was time to spice things up and challenge SCANIFY's performance by trying it out in a couple of museums in Oxford. I contacted Oliver from Fuel3D and we arranged to visit the museums with a couple of his colleagues, one of whom was a SCANIFY engineer, so no one knew the scanner better than him. First we went to the Natural History Museum, where we took the majority of the scans, since there were a large number of perfectly sized, complex objects. We took a few shots of animal skulls, ancient fossils and life-sized models located around the main room. Unfortunately, a few of the models, mainly the skulls, didn't come out very well because they have large cavities, which can't produce the shadows required for a 3D scan. This returned some absurd results; for example, this grey seal skull looks more like a zombie…

We did, however, manage to get some excellent results from a couple of sculptures, where a high level of detail and depth can be clearly seen. The objects that worked best were non-shiny, had no cavities and produced strong shadows under the three xenon lights. Fossils scanned extremely well because they are one solid piece with surface details that are easily picked up. We scanned a Mosasaur jaw fossil in which the teeth and bone were modelled in great detail, but unfortunately the target took up a large area, which slightly affected the 3D model.

Another impressive scan was of an Asian elephant tooth, which showed edges and lips in excellent detail. When I came back to the office I initially forgot what this scan was because of the sheer size of the tooth and its ridged texture. SCANIFY captures ridges and colours very well, but unfortunately, once these models are 3D printed, most of the detail seen on screen is lost and the different colours merge into one.

Once we had scanned a handful of models, we left the Natural History Museum. Since I had another 20 minutes until my parking ticket ran out, we decided to head further into town to visit the Ashmolean Museum, the world's first university museum, built in 1683 and home to hundreds of Egyptian, Greek and Roman collections. As we wandered through, we realised that only a couple of artefacts weren't behind glass, so we had a limited choice of objects to scan. We did come across a Greek sculpture of a man on a horse that had a small amount of depth to it, so we decided to scan it. It scanned really well considering the lack of depth, and all of the detail was captured and reproduced on screen. The next and final object we tried was a stone model of Anubis, the Egyptian god depicted as a man with a canine head. Unfortunately, the target couldn't be located due to the lighting in the room and the colour of the artefact, so we weren't able to produce a scan of Anubis.

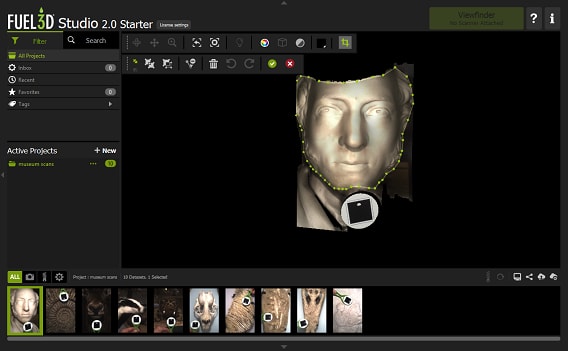

Once back at the office, I began cropping and finalising a few of the scans using the Fuel3D Studio software. SCANIFY uses this software to capture the 3D photographs and then process them into the 3D image.

There are many functions in Fuel3D Studio for taking a closer look at the acquired models. Obviously it handles the basics of orbiting, zooming in and out, and cropping your scans, but the software also has some unique features:

- Light is a handy tool that lets you control the angle at which light hits the model, giving a better 3D view; the intensity can also be adjusted to help with this.

- There are also four different filters to choose from to improve viewing. Mono works really well with the Light tool, revealing indents that are hard to see when the model is shown in its normal colours.

- Stitching is used to join together multiple scans of an object taken at different orientations to produce a larger 3D model. Fuel3D Labs have recently released version 2.0 of Fuel3D Studio, which is more powerful than the previous version and has a built-in stitching procedure.
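To illustrate why changing the light angle and switching to Mono make indents easier to see, here is a minimal relighting sketch using simple Lambertian shading with one directional light. This is an assumption for illustration only; it is not a description of how Fuel3D Studio's renderer actually works. The azimuth/elevation parameters, the `shade` function and the grey value used for Mono are all hypothetical choices.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[float, float, float]

MONO_GREY: RGB = (0.8, 0.8, 0.8)  # hypothetical uniform grey for the "Mono" view


def light_direction(azimuth_deg: float, elevation_deg: float) -> Vec3:
    """Unit vector pointing towards the light; elevation 90 is straight overhead."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))


def shade(normal: Vec3, colour: RGB, azimuth_deg: float, elevation_deg: float,
          intensity: float = 1.0, mono: bool = False) -> RGB:
    """Lambertian shading of one surface point under a single directional light."""
    lx, ly, lz = light_direction(azimuth_deg, elevation_deg)
    nx, ny, nz = normal
    # Surfaces facing away from the light get no direct light (clamped at 0).
    lambert = max(0.0, nx * lx + ny * ly + nz * lz)
    # Mono replaces the scanned colour with flat grey, so only shading remains.
    base = MONO_GREY if mono else colour
    r, g, b = (min(1.0, intensity * lambert * c) for c in base)
    return (r, g, b)


if __name__ == "__main__":
    # Two facets of a small ridge, tilted in opposite directions.
    left, right = (-0.6, 0.0, 0.8), (0.6, 0.0, 0.8)
    for elevation in (90.0, 10.0):
        a = shade(left, (0.5, 0.4, 0.3), 0.0, elevation, mono=True)
        b = shade(right, (0.5, 0.4, 0.3), 0.0, elevation, mono=True)
        print(f"elevation {elevation:>4}: left={a[0]:.2f} right={b[0]:.2f}")
```

With the light straight overhead, both facets of the ridge come out the same grey, so the ridge is hard to spot; with a low, grazing light one facet goes dark while the other stays bright, and the relief stands out. Removing the colour (Mono) means any brightness difference comes from the shape alone.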