A First Look at the NVIDIA Jetson AGX Orin

Flagship Jetson platform provides blistering performance for embedded AI and more.

In this article, we take a look at the NVIDIA Jetson AGX Orin, the latest and greatest GPU and Tensor core accelerated System-on-Module (SoM) for embedded applications. However, before we get on to Jetson Orin, and the AGX variant in particular, we’ll take a brief look back at the development of the NVIDIA Jetson family over the years, along with some architectural considerations.

Jetson 101

An AI powered follow trolley built using the low-cost Jetson Nano.

NVIDIA Jetson is a family of SoMs for embedded computing, which combine an Arm CPU for general-purpose processing with a graphics processing unit (GPU) for accelerating compute-intensive workloads. In addition, they boast plenty of high-performance I/O for interfacing devices such as cameras, displays and PCIe peripherals, plus general-purpose I/O for lower-speed devices.

GPUs are now used in many high-performance computing (HPC) applications beyond graphics processing, and indeed have been fundamental in enabling the enormous advances made in AI in recent years. However, while AI models may be trained on large clusters of servers equipped with full-size PCIe GPUs, something far more compact, energy-efficient and tailored to embedded use is needed to then execute those models (a.k.a. “inference”) in smart products. Enter Jetson.

The first Jetson board shipped in 2014, coming up for ten years ago. Since then there have been numerous subsequent generations, each providing significantly higher performance and improved features. Meanwhile, the Jetson Nano, announced in 2019, lowered the barrier to entry: it was aimed at getting hobbyists up and running with AI applications for minimal outlay, while enabling all manner of novel applications, such as an AI-powered follow trolley.

However, as we will see, Jetson Orin is far more powerful than Jetson Nano, and the AGX variants in particular are aimed at more advanced uses. It should also be noted that while AI is a key application, and where much of the focus tends to be, Jetson and GPU platforms in general may be put to use in many other applications that benefit from high-performance parallel computing.

Timeline

NVIDIA Jetson AGX Orin Developer Kit and Module.

So let’s take a look at the Jetson family timeline and compare the GPU performance figures.

- Jetson TK1 (2014): 326 GFLOPS

- Jetson TX1 (2015): 1 TFLOPS

- Jetson TX2 (2017): 1.33 TFLOPS

- Jetson AGX Xavier (2018): 32 TOPS

- Jetson Nano (2019): 472 GFLOPS

- Jetson Xavier NX (2020): 21 TOPS

- Jetson Orin Nano (2023): 20-40 TOPS

- Jetson Orin NX (2023): 70-100 TOPS

- Jetson AGX Orin (2022): 200-275 TOPS

We can see that the numbers jump significantly with the arrival of AGX Xavier in 2018, but we need to be careful, as from then on performance is expressed in Tera Operations Per Second (TOPS), which may count integer or floating-point operations, rather than in Tera/Giga Floating-Point Operations Per Second (T/GFLOPS). Even so, it’s safe to assume a major performance improvement, and with the arrival of Orin the numbers once again jump significantly for the comparable NX and AGX variants.
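
To make this caveat concrete, here is a minimal Python sketch that only computes a speedup between two headline figures when they are expressed in the same kind of unit (FLOPS versus TOPS), using peak values from the timeline above. The `PerfFigure` class and `speedup` function are hypothetical helpers for illustration, not part of any NVIDIA tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerfFigure:
    """A headline performance figure as quoted in the Jetson timeline."""
    product: str
    value: float  # peak value; for a range, the top of the range
    unit: str     # "GFLOPS", "TFLOPS" or "TOPS"


# Scale factors that bring each unit to a tera (10^12) base.
_TO_TERA = {"GFLOPS": 1e-3, "TFLOPS": 1.0, "TOPS": 1.0}
# FLOPS and TOPS count different kinds of operation, so they form separate families.
_FAMILY = {"GFLOPS": "FLOPS", "TFLOPS": "FLOPS", "TOPS": "TOPS"}


def speedup(new: PerfFigure, old: PerfFigure) -> float:
    """Ratio of two headline figures, refusing to mix FLOPS with TOPS."""
    if _FAMILY[new.unit] != _FAMILY[old.unit]:
        raise ValueError(
            f"cannot compare {new.product} ({new.unit}) with {old.product} ({old.unit})"
        )
    return (new.value * _TO_TERA[new.unit]) / (old.value * _TO_TERA[old.unit])


tx2 = PerfFigure("Jetson TX2", 1.33, "TFLOPS")
nano = PerfFigure("Jetson Nano", 472, "GFLOPS")
agx_xavier = PerfFigure("Jetson AGX Xavier", 32, "TOPS")
agx_orin = PerfFigure("Jetson AGX Orin", 275, "TOPS")

print(f"TX2 vs Nano: {speedup(tx2, nano):.2f}x")
print(f"AGX Orin vs AGX Xavier: {speedup(agx_orin, agx_xavier):.2f}x")
try:
    speedup(agx_xavier, tx2)  # TOPS vs TFLOPS: not a like-for-like comparison
except ValueError as err:
    print(err)
```

The AGX Orin to AGX Xavier ratio comes out at roughly 8.6x, well above the more than 3x gain seen in NVIDIA's pre-trained model benchmarks, which is a reminder that peak figures are only a rough guide to real-world performance.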

While less powerful than the higher-end Jetson SoMs, the original Jetson Nano and Jetson Orin Nano are no less important, as they address needs at particular price/performance levels.

This is, of course, a very simplistic comparison, as it doesn’t consider the parallel improvements in CPU performance, RAM, interfaces and so on. However, we’ll look at what Orin provides in these respects in due course.

Architecture

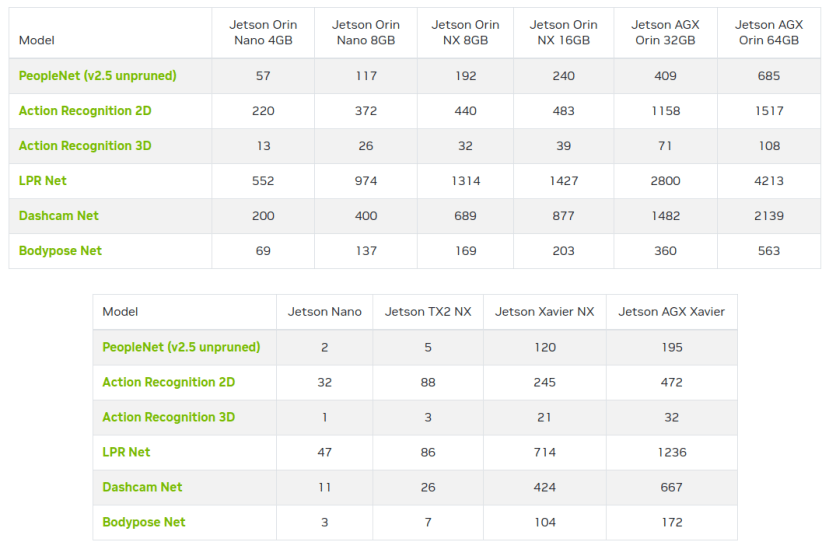

Jetson pre-trained model benchmarks.

While simple metrics such as operations per second give a good indication of relative performance, it can also be important to take the GPU microarchitecture into consideration. Every discrete GPU and every Jetson platform (combined CPU and GPU) is implemented using a particular NVIDIA GPU architecture, optionally with some number of Tensor cores. For example, Jetson AGX Orin has up to a 2048-core Ampere architecture GPU with 64 Tensor cores.

The overall performance for a given application will depend on the nature of the workload, GPU architecture and number of cores, and number of Tensor cores if any, amongst other things.
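
As a rough illustration of how core count feeds into a headline figure, the theoretical peak FP32 throughput of a GPU can be estimated as CUDA cores × 2 (a fused multiply-add counts as two operations) × clock frequency. The sketch below applies this to the 2048-core AGX Orin GPU. The 1.3 GHz GPU clock is an assumption for illustration, so check the module datasheet for the actual figure, and `peak_fp32_tflops` is a hypothetical helper.

```python
def peak_fp32_tflops(cuda_cores: int, clock_ghz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Each CUDA core is assumed to retire one fused multiply-add (FMA) per
    clock, which counts as two floating-point operations."""
    flops_per_core_per_clock = 2  # one FMA = a multiply plus an add
    gflops = cuda_cores * flops_per_core_per_clock * clock_ghz
    return gflops / 1000.0


# 2048 CUDA cores comes from the text; the 1.3 GHz clock is an ASSUMPTION.
print(f"{peak_fp32_tflops(2048, 1.3):.2f} TFLOPS")
```

This ignores the Tensor cores and the DLA entirely, and real applications rarely sustain peak throughput, which is why workload-specific benchmarks matter.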

Fortunately, NVIDIA also provide AI benchmark figures for the Jetson family, using both MLPerf and pre-trained model benchmarks. The latter, pictured above, show that we would typically get a more than 3x performance increase by upgrading from AGX Xavier to AGX Orin 64GB.

Other considerations

GPU-accelerated applications are developed using NVIDIA’s CUDA Toolkit and, as one would expect, the SDK is updated over time to introduce new features, some of which are only available on more recent GPU architectures, or only on the very latest. Meanwhile, newer releases also drop support for older architectures. For example, the CUDA 12.0 release notes state that support has been dropped for hardware with Compute Capability versions 3.5 and 3.7, i.e. Kepler architecture GPUs.

So let’s take a look at the Jetson product family and which CUDA Compute Capability version and hence GPU architecture they implement.

- Nano: v5.3 (Maxwell)

- TX2: v6.2 (Pascal)

- Xavier AGX & NX: v7.2 (Volta)

- Orin AGX, NX & Nano: v8.7 (Ampere)

Each product implements a specific Compute Capability version, and this in turn determines which CUDA SDK features are supported, as well as how long CUDA support is likely to be provided: the higher the Compute Capability version, the longer that hardware is likely to be supported.

Additionally, some frameworks and applications which build upon CUDA may also specify a minimum Compute Capability version or GPU architecture as a requirement. So while it should be possible to migrate applications to newer hardware and take advantage of performance increases, it may be that certain software requires features which are only available with more recent hardware.
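
A minimal Python sketch of how an application might check the Compute Capability table above against a framework's minimum requirement. The `JETSON_CC` table and `meets_minimum` function are hypothetical, and the CC 8.0 requirement is an invented example. On a real device, the version would normally be read at runtime, for example from the `major` and `minor` fields of the CUDA runtime's `cudaDeviceProp` structure.

```python
# Compute Capability (major, minor) and GPU architecture per Jetson family,
# taken from the list in the article.
JETSON_CC = {
    "Nano": ((5, 3), "Maxwell"),
    "TX2": ((6, 2), "Pascal"),
    "Xavier AGX": ((7, 2), "Volta"),
    "Xavier NX": ((7, 2), "Volta"),
    "Orin AGX": ((8, 7), "Ampere"),
    "Orin NX": ((8, 7), "Ampere"),
    "Orin Nano": ((8, 7), "Ampere"),
}


def meets_minimum(product: str, minimum: tuple) -> bool:
    """True if the product's Compute Capability is at least `minimum`.

    Tuples compare element by element, so (8, 7) >= (8, 0) while
    (7, 2) < (8, 0), matching how CC versions are ordered."""
    cc, _arch = JETSON_CC[product]
    return cc >= minimum


# HYPOTHETICAL requirement: a framework that needs CC 8.0 or newer.
REQUIRED_CC = (8, 0)
for name, (cc, arch) in JETSON_CC.items():
    status = "OK" if meets_minimum(name, REQUIRED_CC) else "unsupported"
    print(f"{name:<11} CC {cc[0]}.{cc[1]} ({arch}): {status}")
```

Versions are stored as `(major, minor)` tuples rather than floats, so comparisons follow version ordering instead of decimal arithmetic.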

Jetson AGX Orin

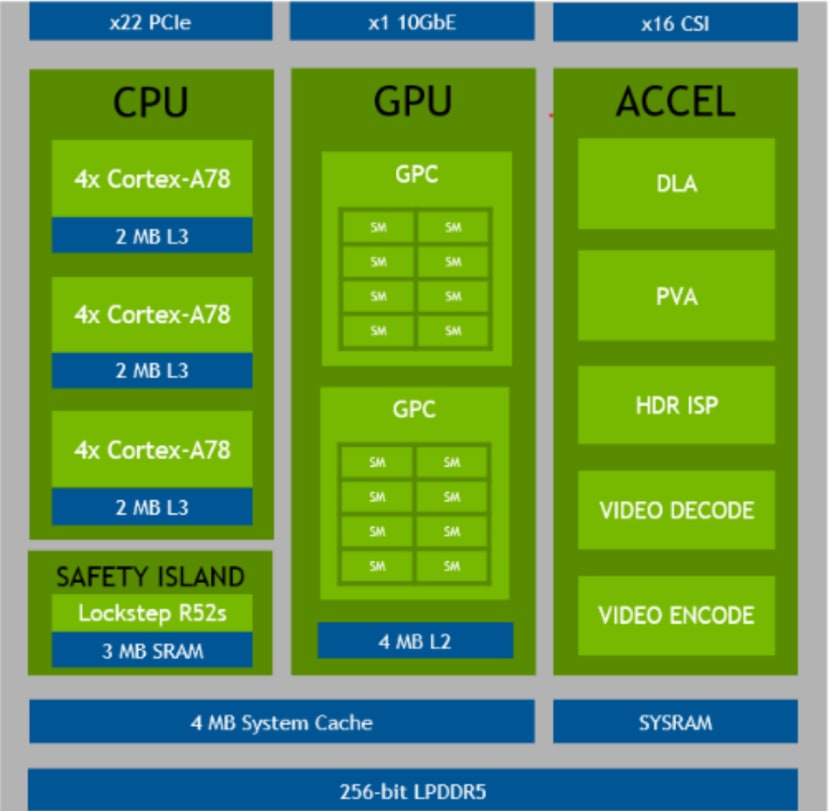

Orin SoC block diagram.

Jetson AGX Orin is provided in two versions, which are differentiated by the amount of RAM they have: either 32GB or 64GB. However, the differences by no means end there, with the 64GB variant also benefiting from upgraded SoC specifications.

Above can be seen the block diagram for the AGX Orin 64GB, which, in terms of processing, features:

- 12-core Arm Cortex-A78AE CPU (3x clusters of 4 cores)

- Ampere GPU with:

  - Two graphics processing clusters (GPC)

  - Eight texture processing clusters (TPC)

- Deep learning accelerator (DLA) specified at up to 105 INT8 TOPS

The 32GB variant, meanwhile, has an 8-core Arm CPU (2x clusters), a GPU with a slightly reduced seven TPCs, and a DLA specified at up to 92 INT8 TOPS. So there is not a huge difference, aside from the 64GB model having 50% more Arm cores. However, it may be worth upgrading to the 64GB variant for applications that need every bit of GPU processing power they can get, as well as for those that are CPU-bound.
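
The TPC counts can be tied back to the 2048-core, 64 Tensor core GPU mentioned earlier with a little arithmetic. This Python sketch assumes that each TPC contains two streaming multiprocessors (SMs), each with 128 CUDA cores and 4 Tensor cores; these per-unit counts are not stated in this article, and `OrinVariant` is a hypothetical helper.

```python
from dataclasses import dataclass

# ASSUMED per-unit counts for Orin's Ampere GPU (not stated in the article).
SMS_PER_TPC = 2
CUDA_CORES_PER_SM = 128
TENSOR_CORES_PER_SM = 4


@dataclass(frozen=True)
class OrinVariant:
    name: str
    cpu_cores: int
    tpcs: int

    @property
    def sms(self) -> int:
        return self.tpcs * SMS_PER_TPC

    @property
    def cuda_cores(self) -> int:
        return self.sms * CUDA_CORES_PER_SM

    @property
    def tensor_cores(self) -> int:
        return self.sms * TENSOR_CORES_PER_SM


# CPU core and TPC counts come from the article.
agx_64 = OrinVariant("AGX Orin 64GB", cpu_cores=12, tpcs=8)
agx_32 = OrinVariant("AGX Orin 32GB", cpu_cores=8, tpcs=7)

for v in (agx_64, agx_32):
    print(f"{v.name}: {v.cuda_cores} CUDA cores, {v.tensor_cores} Tensor cores")

# Relative advantage of the 64GB variant, in percent.
extra_cpu = (agx_64.cpu_cores / agx_32.cpu_cores - 1) * 100
extra_gpu = (agx_64.cuda_cores / agx_32.cuda_cores - 1) * 100
print(f"64GB advantage: +{extra_cpu:.0f}% CPU cores, +{extra_gpu:.1f}% CUDA cores")
```

Under these assumptions, the 64GB variant's eight TPCs give 2048 CUDA cores and 64 Tensor cores, matching the figures quoted earlier, while the 32GB variant's seven TPCs give 1792 and 56. The sketch counts units only and does not model any difference in clock speeds between the two variants.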

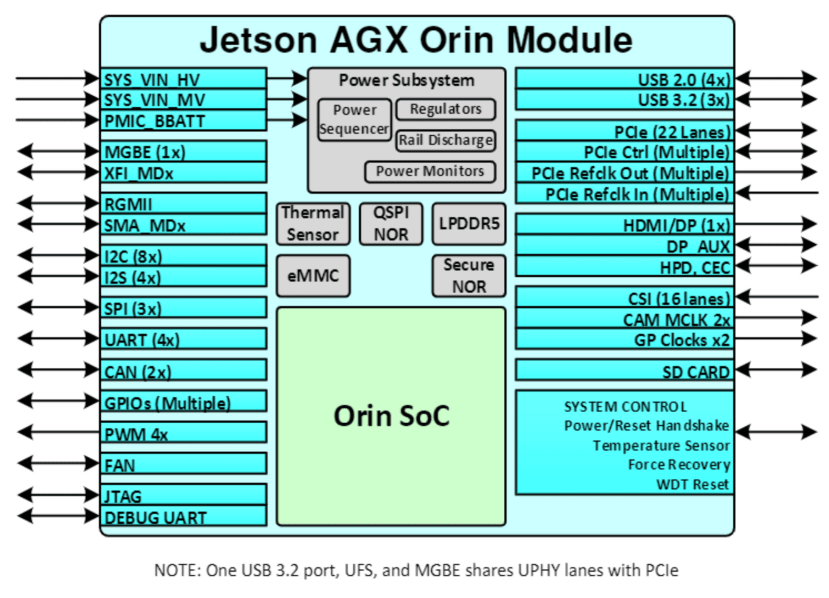

Jetson AGX Orin SoM

The Jetson AGX Orin module builds upon the powerful SoC to add a wealth of interfaces, which can be seen pictured above. These notably include 2x USB 3.2 ports, 22 lanes of PCIe, HDMI and DisplayPort, and 16 lanes of CSI for convenient camera interfacing. The CSI lanes mean that up to 8x MIPI CSI cameras may be attached, although the Video Input (VI) engine supports a maximum of 6x concurrent video streams, which should be more than enough for most applications!
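
The relationship between CSI lanes, attachable cameras and concurrent streams can be expressed as a small calculation. This Python sketch takes the 16 lanes and 6 VI streams from the text and assumes 2-lane cameras, which reproduces the figure of up to 8 cameras; `camera_limits` is a hypothetical helper.

```python
def camera_limits(csi_lanes: int, lanes_per_camera: int, max_vi_streams: int) -> tuple:
    """Return (cameras that can be attached, cameras that can stream at once).

    Attachable cameras are limited by the CSI lane budget; concurrent
    streams are further capped by the Video Input (VI) engine."""
    attachable = csi_lanes // lanes_per_camera
    concurrent = min(attachable, max_vi_streams)
    return attachable, concurrent


# 16 lanes and 6 VI streams come from the text; 2 lanes per camera is an
# ASSUMPTION that reproduces the "up to 8 cameras" figure.
print(camera_limits(csi_lanes=16, lanes_per_camera=2, max_vi_streams=6))
```

With 4-lane cameras instead, the lane budget becomes the limiting factor: `camera_limits(16, 4, 6)` returns `(4, 4)`.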

In terms of networking, AGX Orin features one 10G and one 1G Ethernet port. In some applications, the 10G interface might be used for local networked sensors, for example, while the 1G port is used for the uplink.

There is no shortage of low-speed I/O either, which includes 4x USB 2.0 ports, 4x UART, 3x SPI, 2x I2C and 2x CAN bus, plus GPIOs. This is perfect for interfacing peripheral devices, simpler sensors, actuators, displays, buttons and so on.

The AGX Orin module utilises the same form factor and connectors as the earlier Jetson AGX Xavier, and is also pin-compatible, albeit with some limitations. This form factor is understandably larger than the SO-DIMM form factor utilised by both the Orin and previous-generation Nano/NX modules.

Jetson AGX Orin Developer Kit

Finally, we get to the Jetson AGX Orin Developer Kit, which in our case is the top-specification variant, with 64GB RAM and the more powerful SoC.

Contents

The developer kit comes well packaged, and the first impression is that this feels more like a consumer product than a dev kit: the SoM and carrier board are integrated into a very smart enclosure, which has a notably high-quality feel and wouldn’t look out of place on a desktop or in a living room. The kit also includes a PSU with the usual selection of mains power cords.

Carrier board I/O

On one side of the enclosure, there are both USB-C and barrel jack power inputs, with the supplied desktop PSU using the former. Next to these are 10G Ethernet, 2x USB 3.2 ports and DisplayPort, and on the far right of that side is a USB Micro-B port for accessing a debug UART.

On another side, we have power, force recovery and reset buttons, a Micro SD card slot, and an opening for routing cables out from connectors that are not mounted on the PCB edge.

Underside view showing the 40-pin connector.

Then, on the third side, we have a 40-pin connector that exposes low-speed I/O, and next to this a further 2x USB 3.2 ports, plus, just out of sight, a USB-C port (UFP and DFP, USB 3.2 Gen 2).

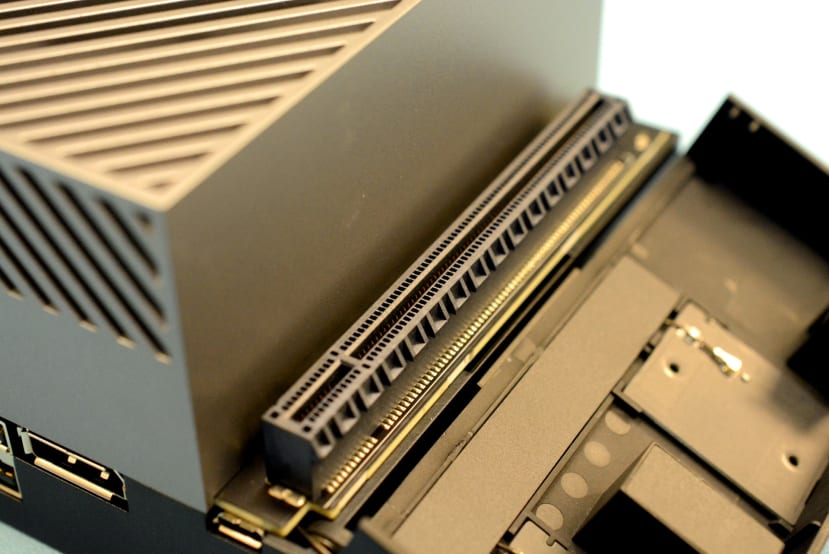

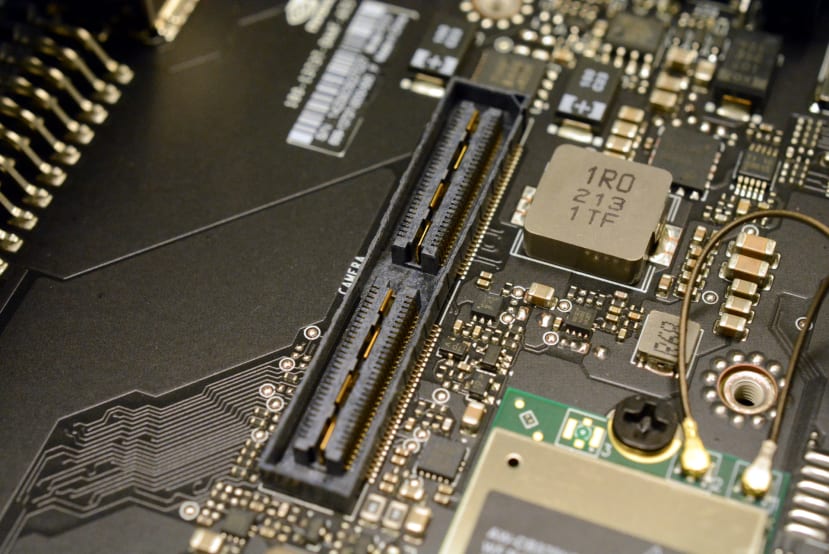

The fourth side meanwhile has a rather neat magnetic cover which snaps away to reveal a full-size PCIe slot with Gen4 x8.

Flipping the kit over again, we can see that a WLAN adapter is included for convenience.

A high-speed connector is provided for MIPI CSI camera interfacing.

Other connectors include an M.2 M-key slot for an NVMe SSD, along with connectors for HD audio, automation, JTAG and RTC battery backup.

One thing to note is that there is no base cover, which provides easy access to connectors that are not exposed along the sides, with cables being routed out through the aperture below the buttons.

Final words

The AGX Orin 64GB is currently at the top end of the Jetson family, and AI benchmarks show that with pre-trained models you can typically expect real-world performance of around three times that of the previous-generation AGX Xavier. Compared with the original Jetson Nano, the Jetson AGX Orin 64GB achieves close to 200x the performance when running the DashCamNet model.

The Jetson AGX Orin SoM integrates no shortage of both high and low-speed I/O, and the Developer Kit provides a smart way of evaluating the platform and facilitating development. In a future article, we will get hands-on with this, setting up the developer kit and running through some of the provided examples.
