We Interrupt this Program…

Ferranti Atlas – State-of-the-art computing in 1963. Note all the incredibly slow peripheral devices: teleprinter, paper-tape readers and punches. Prioritised interrupts would have been used extensively to keep each device operating with maximum efficiency.

Image credit: Wikipedia

The need to interrupt a computer program and switch to another piece of code when an event occurs that just won’t wait has been present since the early mainframes were built. Every computer possesses interrupt hardware, and that hardware needs a fair amount of software to support it. The thing is, this hardware and code are often ‘invisible’ to the programmer writing in a high-level language on a PC running under an Operating System (OS) such as Linux or Windows. As you sit there typing, watching things happen on the display, the processor is constantly receiving and reacting to interrupts from peripheral systems such as the keyboard, timers, graphics chips and network hardware. And you are completely unaware of it.

A properly set up interrupt system wastes little or no computing time waiting for slow peripherals and recovers quickly from fault conditions. Without one, a program can be so slow and unresponsive that real-time operation of an embedded application becomes impossible. But a poorly designed interrupt system is a nightmare to debug and can put you off interrupts for life! Fortunately, it doesn’t have to be that way, given a bit of care.

When to fire up the interrupt system

Probably the only time you’re likely to get closely involved with interrupts is when writing code for a stand-alone (no OS involved) embedded microcontroller application. A single-board computer (SBC) such as Raspberry Pi is controlled by an OS and that handles the interrupts. A basic Arduino project or anything running on a microcontroller development board such as the MikroElektronika Clicker 2 board I use for FORTHdsPIC may well benefit from enabling the interrupt system. These are the general areas where they are used:

- For external devices to signal the processor they are ready to receive a command to do something. This is the main reason interrupts were invented in the 1950s, so that the new ‘super-fast’ electronic computer wasn’t held up waiting for the old super-slow mechanical printer to print a character. Up to that point, it had been the printer that was hanging around waiting for the slow human (keyboard) input!

- For internal devices to signal a need for attention to the user program. For example, a timer/counter signalling that a pre-programmed count has been reached indicating the end of an interval of elapsed time.

- A user program can interrupt itself by executing a special instruction: for the 80x86 series of microprocessors used in PCs, it’s called INT. It’s often used instead of a subroutine call where the target address for the call is not known.

- To signal critical processing errors or internal hardware failures. Dedicated on-chip hardware designed to detect particular fault conditions will generate these interrupts, sometimes called Traps or Exceptions.

The first two categories in the above list cover hardware-generated interrupts enabled and serviced by the user’s program as part of normal operation. A software interrupt, at first sight, seems rather pointless: when would a program need to interrupt itself? There aren’t many situations where a software interrupt is vital for normal operation, but there is one: any computer device you are using now will execute an INT, or its equivalent, every time you press a key (see Software Interrupts below for an explanation). The final category contains interrupts that occur only in response to abnormal operation, in an attempt to prevent a complete processor ‘crash’. Examples include ‘division by zero’ attempts by the user’s program or processor clock failure signalled by the time-out of a Watchdog timer.

Maskable interrupts and non-maskable traps

Before moving on to describe the basic operation of interrupts, I’d just like to clear up some confusion that can arise with the jargon. For most microprocessor/microcontroller chips the normal interrupts described above are disabled from power-up or reset, and your code will have to enable the ones you want to use by setting the appropriate bits in an Interrupt Enable register. The problem is that some texts call the same register an Interrupt Mask register, implying that its purpose is to disable interrupts. Both terms are in regular use: just remember Masked = Disabled and Unmasked = Enabled. Whatever the register is called, on these chips maskable interrupts start out masked/disabled after reset.
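To make the enable/mask jargon concrete, here is a minimal sketch in C that models an Interrupt Enable register as an ordinary variable so it can run on a PC. The bit positions, the `int_enable` variable and the helper names are all made up for illustration; a real chip's datasheet defines the actual register and bits.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical bit positions: a real datasheet defines these. */
#define IRQ_TIMER1  (1u << 0)
#define IRQ_UART_RX (1u << 1)

/* Stand-in for a memory-mapped Interrupt Enable register.
   Reset value 0: every maskable interrupt starts out masked. */
static volatile uint16_t int_enable = 0;

/* Unmask (enable) the interrupts whose bits are set in 'bits'. */
static void irq_unmask(uint16_t bits) { int_enable |= bits; }

/* Mask (disable) the interrupts whose bits are set in 'bits'. */
static void irq_mask(uint16_t bits) { int_enable &= (uint16_t)~bits; }

/* True only if every interrupt named in 'bits' is enabled. */
static bool irq_is_enabled(uint16_t bits) { return (int_enable & bits) == bits; }
```

Calling `irq_unmask(IRQ_TIMER1)` enables just that one source and leaves the UART interrupt masked, which is the state you normally want: only the interrupts you have written handlers for are live.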

But what of Traps or Non-Maskable Interrupts (NMI)? As the name suggests, they are permanently enabled. The addresses of code routines for handling all types of interrupts are held in a dedicated part of the program memory called the Interrupt Vector Table (IVT). Every entry is initialised to point to the reset handler unless set differently in the source code of the user’s program. This means that any NMI that occurs will reboot the program; if your code just restarts every time you run it, that is a pretty good indication that it contains a serious bug. A good tip to help with debugging is to include a set of Interrupt Service Routines (ISRs) with your code that just print out an appropriate error message for each NMI. It saves a lot of guesswork!
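The debugging tip can be sketched in C with the vector table modelled as an array of function pointers. This is a PC-runnable model, not dsPIC code: the table size, the vector numbers, the trap names and the `install_isr`/`raise_vector` helpers are assumptions made for the example, and the "reset" is just a counter.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

#define NUM_VECTORS 4            /* hypothetical table size */

typedef void (*isr_t)(void);

static int reset_count = 0;                 /* counts simulated reboots */
static const char *last_trap_msg = NULL;    /* last diagnostic printed */

/* Stands in for a real reboot. */
static void reset_handler(void) { reset_count++; }

static void report(const char *msg) { last_trap_msg = msg; puts(msg); }

/* Diagnostic ISRs: say what happened instead of silently restarting. */
static void trap_math_error(void)  { report("TRAP: math error (e.g. divide by zero)"); }
static void trap_stack_error(void) { report("TRAP: stack error"); }

/* Default state: every vector points at the reset handler. */
static isr_t vector_table[NUM_VECTORS] = {
    reset_handler, reset_handler, reset_handler, reset_handler
};

/* Point vector n at a user-supplied handler. */
static void install_isr(size_t n, isr_t handler)
{
    assert(n < NUM_VECTORS);
    vector_table[n] = handler;
}

/* Model of the hardware taking interrupt n: jump through the table. */
static void raise_vector(size_t n)
{
    assert(n < NUM_VECTORS);
    vector_table[n]();
}
```

Before `install_isr(2, trap_math_error)` is called, `raise_vector(2)` only bumps `reset_count`, which is the mysterious restart described above. Afterwards the same event prints a message that points straight at the cause.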

Does the function I’m designing need to be interrupt-driven?

Bearing in mind that interrupt-based code can be tricky to debug, you might want to consider some other way of achieving the same end. Let’s take the example of a routine that gets ASCII data from a standard keyboard. A simple low-speed RS-232 serial bus is to be used, connected to UART interface hardware built-in to the target processor chip. The question is: how should the program running on the processor detect that a keyboard character has been received by the UART?

- By sitting in a tight loop reading or ‘polling’ a data-received flag bit in the UART status register. The processor won’t be doing anything else while it’s waiting for the flag to be set. But the code is very simple and likely to work first time.

- By reacting to a data-received interrupt from the UART, reading the data and then returning immediately to the program task where it left off. The code and its interaction with hardware is rather more complicated than for the first option.

The answer is, of course, it depends. It depends on the code that requires the input data. If it’s a single-task program on an embedded microcontroller that loops around forever, then a simple polling routine may suffice as it can be incorporated in the main task loop. An example of this approach is the console keyboard input on my FORTHdsPIC embedded development system.

On the other hand, if the main program is an OS on a microprocessor-based system, able to multitask user applications, then an interrupt solution is best as there is absolutely no time to waste polling the UART in case a key has been pressed. Any SBC system with an OS, like Raspberry Pi, will make extensive use of interrupts for I/O.
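The two options can be compared side by side in a small C model. The UART here is simulated with two variables, and every name (`uart_status`, `UART_RX_READY`, `sim_uart_receive` and so on) is invented for the sketch; the polling routine is written in the non-blocking form that fits inside a main task loop.

```c
#include <stdbool.h>
#include <stdint.h>

#define UART_RX_READY (1u << 0)   /* hypothetical data-received flag bit */

static volatile uint8_t uart_status = 0;  /* simulated status register */
static volatile uint8_t uart_rxdata = 0;  /* simulated receive register */

/* Simulation helper: pretend the hardware has just received a byte. */
static void sim_uart_receive(uint8_t c)
{
    uart_rxdata = c;
    uart_status |= UART_RX_READY;
}

/* Option 1: poll the flag once per pass of the main loop.
   Returns true and stores the byte in *out if one was waiting. */
static bool uart_poll(uint8_t *out)
{
    if (!(uart_status & UART_RX_READY))
        return false;
    *out = uart_rxdata;
    uart_status &= (uint8_t)~UART_RX_READY;  /* flag cleared after the read */
    return true;
}

/* Option 2: the ISR grabs the byte and leaves it in a one-byte mailbox
   for the main program to collect when it is ready. */
static volatile uint8_t mailbox = 0;
static volatile bool mailbox_full = false;

static void uart_rx_isr(void)
{
    mailbox = uart_rxdata;
    mailbox_full = true;
    uart_status &= (uint8_t)~UART_RX_READY;
}
```

The polling version costs one flag test per loop pass and nothing else. The interrupt version needs the shared `mailbox` variables declared `volatile` and a decision about what happens if a second byte arrives before the first is collected, which is where the extra complexity starts.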

Anatomy of an interrupt 1 – a program pause function for FORTHdsPIC

Listing 1 shows the dsPIC code necessary to implement the WAIT instruction in FORTHdsPIC. This interrupt-driven routine causes the processor to suspend execution of the user’s Forth program for a specified time in milliseconds. The on-chip timer T1 is programmed to provide interrupts at precisely 1ms intervals.

How it works

The first four lines of the WAIT function code set up the dsPIC chip’s Timer 1 so that it will overflow 1ms after being turned on.

Line 5 enables the interrupt to be triggered when that overflow occurs.

Line 6 pops the user-selected interval in milliseconds from the parameter stack.

The next four lines clear the timer, turn it on, and wait in a tight loop for the timer to be turned off by the interrupt service routine after 1ms. That’s how the program knows that an interrupt has occurred.

The next three lines cause the above loop to be repeated until the selected time has elapsed.

Finally, line 13 disables further Timer 1 interrupts. Keeping interrupts masked until they are needed helps to avoid any random inexplicable program deviations.

The interrupt service routine is very short. All it has to do is stop Timer 1, signalling the main program that an interrupt has occurred. Before executing the return-from-interrupt instruction, it’s essential that the Timer 1 interrupt flag is cleared. Otherwise, a re-interrupt will occur immediately following the return to the interrupted WAIT program.
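The handshake between WAIT and its ISR can be modelled in C as follows. This is only a sketch of the logic described above, not a translation of Listing 1: the timer is simulated by `sim_t1_overflow()`, and all of the variable and function names are assumptions.

```c
#include <stdbool.h>
#include <stdint.h>

static volatile bool t1_on = false;          /* timer run bit */
static volatile bool t1_int_enable = false;  /* Timer 1 interrupt enable */
static volatile bool t1_int_flag = false;    /* Timer 1 interrupt flag */
static uint32_t isr_calls = 0;               /* bookkeeping for the demo */

/* ISR: stop the timer so the waiting loop can see 1 ms has passed. */
static void t1_isr(void)
{
    t1_on = false;
    t1_int_flag = false;  /* must be cleared, or the ISR re-enters at once */
    isr_calls++;
}

/* Stand-in for the hardware: the timer overflows, raises its flag and,
   if the interrupt is enabled, the ISR runs. */
static void sim_t1_overflow(void)
{
    if (!t1_on)
        return;
    t1_int_flag = true;
    if (t1_int_enable)
        t1_isr();
}

/* Model of WAIT: one timer period per millisecond requested. */
static void wait_ms(uint16_t ms)
{
    t1_int_enable = true;             /* unmask the Timer 1 interrupt */
    while (ms-- > 0) {
        t1_on = true;                 /* (re)start the timer */
        while (t1_on)
            sim_t1_overflow();        /* on real hardware: an empty loop */
    }
    t1_int_enable = false;            /* mask it again when finished */
}
```

After `wait_ms(5)` the ISR has run exactly five times and the interrupt is masked again. On a real chip the inner loop body would be empty, with the overflow arriving from the timer hardware rather than from a function call.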

Anatomy of an interrupt 2 – an interval timer for FORTHdsPIC

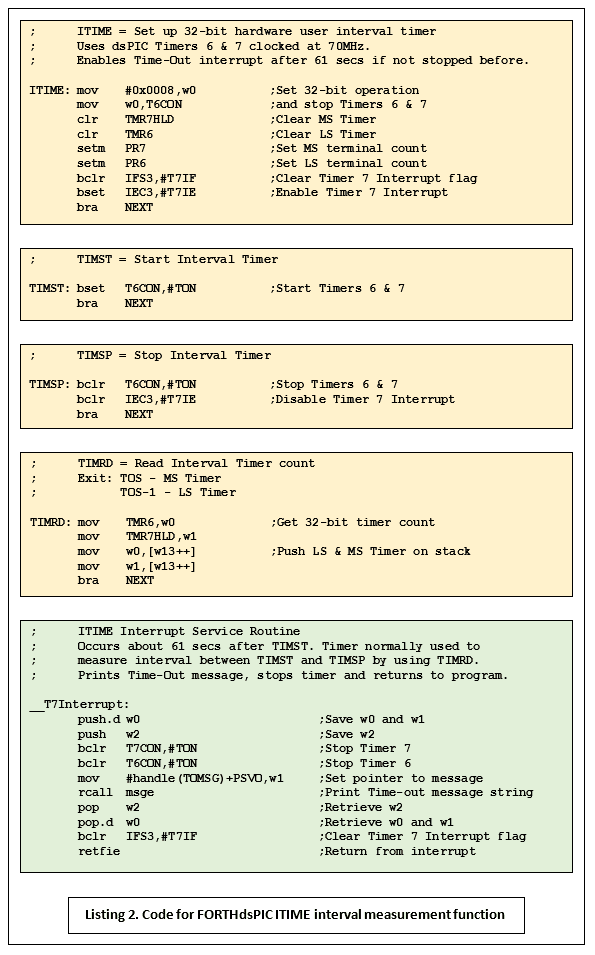

Listing 2 shows the dsPIC code for a different kind of timing function: one that’s used to measure the interval between two points in the user’s Forth program code. ITIME sets up Timers 6 and 7 as a single 32-bit counter clocked at the processor rate of 70MHz. TIMST starts the timer and TIMSP stops it. The count value can be read with TIMRD then divided by 70 to give a measurement in microseconds. A very useful function for creating benchmark timings. Note that no interrupts are involved unless the counter overflows after about 1 minute: the maximum interval that can be timed. The ISR stops the timers and prints an alert message before returning to the user program.

How it works

The ITIME routine sets up a 32-bit counter, clears it, sets the terminal count to maximum (FFFFh in each 16-bit half) and enables the overflow interrupt.

Nothing further happens until the TIMST routine is executed and all that does is turn the timers on. Once started, the timer increments at the system clock speed while the user’s Forth code is executing.

When the TIMSP routine executes, the timer is halted and the interrupt enable is cleared.

TIMRD may then be used to push the count value onto the stack for further processing.

Under normal conditions, no interrupt is triggered and the ISR is never entered. If the counter does overflow, the ISR stops the timer and sends an alert message to the console. It then clears the interrupt enable and returns control to the interrupted user program.
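The arithmetic behind the interval timer is easy to check with a few lines of C. The function names are made up for this sketch; the 70MHz clock and the divide-by-70 conversion come from the description above.

```c
#include <stdint.h>

#define TIMER_CLOCK_MHZ 70u   /* counter clock from the text: 70 MHz */

/* Join the two 16-bit timer halves into one 32-bit count. */
static uint32_t combine_count(uint16_t high, uint16_t low)
{
    return ((uint32_t)high << 16) | low;
}

/* Convert a tick count to whole microseconds (fraction discarded). */
static uint32_t ticks_to_us(uint32_t ticks)
{
    return ticks / TIMER_CLOCK_MHZ;
}
```

Feeding in the largest possible count, `ticks_to_us(0xFFFFFFFF)`, gives 61,356,675 microseconds, or a little over 61 seconds, which is where the figure of about 1 minute for the maximum interval comes from.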

System watchdog

With some modification of the ISR, this code suite could function as a ‘Watchdog’ in an embedded system which would force a reboot if, say, a peripheral device took too long to respond to a request for data. In the case of the dsPIC, the ISR code would be reduced to a single instruction: reset.

Probably the single biggest reason for erratic interrupt operation

Go back and look closely at the ISR in Listing 2. The very first instructions Push the contents of working registers w0, w1 and w2 onto the machine stack. Why? Because the subsequent code changes the contents of those registers. The original contents must be restored by the Pop instructions before execution returns to the interrupted program. Failing to ensure this continuity can cause random mayhem that is difficult to debug. This particular ISR contains a subtle trap if you’re not paying enough attention: it looks as if only w1 is used, but what about that subroutine call? Checking the code for msge reveals that it uses w0 and w2 as well. As I said, failure by an ISR to preserve the contents of every register it uses, directly or through a subroutine, is probably the single biggest reason for erratic interrupt operation.
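The trap is easier to see in a toy model. In this C sketch the working registers are just an array, `msge` is a stand-in that scribbles on w0 and w2, and the two ISRs are hypothetical; none of it is the real Listing 2 code.

```c
#include <stdint.h>

static uint16_t w[3];   /* simulated working registers w0..w2 */

/* Helper called from the ISR: quietly uses w0 and w2 as scratch. */
static void msge(void)
{
    w[0] = 0xDEAD;
    w[2] = 0xBEEF;
}

/* Careless ISR: saves only the register it visibly touches. */
static void isr_careless(void)
{
    uint16_t saved_w1 = w[1];          /* push w1 */
    w[1] = 0x1234;                     /* ISR's own work */
    msge();                            /* clobbers w0 and w2! */
    w[1] = saved_w1;                   /* pop w1 */
}

/* Careful ISR: saves everything it or its subroutines change. */
static void isr_careful(void)
{
    uint16_t saved[3] = { w[0], w[1], w[2] };   /* push w0, w1, w2 */
    w[1] = 0x1234;
    msge();
    w[0] = saved[0];                            /* pop w0, w1, w2 */
    w[1] = saved[1];
    w[2] = saved[2];
}
```

Load the registers with known values, call `isr_careful()`, and they come back unchanged. Call `isr_careless()` and w1 survives while w0 and w2 are silently corrupted, so the interrupted program carries on with the wrong data and fails somewhere far from the real cause.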

Software Interrupts

The term ‘software interrupt’ is something of a misnomer, mainly because no interrupt is involved. It actually refers to a machine instruction with a mnemonic such as SWI or INT followed by an 8-bit code. This instruction performs the same function as a subroutine CALL, but with one major difference: it gets the target address indirectly like an interrupt, from a vector table. A normal interrupt request is directed to the correct entry in the table by ‘hardwired’ logic; the SWI gets the correct entry in the table from the 8-bit code number that follows it in the program.

What’s the point? The SWI comes into its own on an OS-based system like a PC or a Raspberry Pi SBC. The vector table is provided by the OS and contains addresses of useful ‘low-level’ routines for console I/O, USB I/O, reading/writing to disk drives, and many others. It provides access to these routines for application programs running under the OS, without the program needing to know absolute memory addresses. If the OS is updated and addresses are changed, it won’t affect the application. However, a stand-alone embedded application is unlikely to need software interrupts.
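The indirection that makes this work can be shown with a table of function pointers in C. Everything here is illustrative: the service numbers, the service routines and the `swi()` function are assumptions, and a real SWI or INT instruction also involves the processor's interrupt-entry machinery, which this model ignores.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SWI_COUNT 4   /* hypothetical number of table entries */

typedef int (*service_t)(int arg);

/* Hypothetical OS services. */
static int svc_double(int x)    { return 2 * x; }
static int svc_negate(int x)    { return -x; }
/* An "updated OS" routine: different address, same behaviour. */
static int svc_double_v2(int x) { return x + x; }

/* The OS owns this table; applications only ever know the numbers.
   Entries 2 and 3 are unused and left as NULL. */
static service_t swi_table[SWI_COUNT] = { svc_double, svc_negate };

/* Model of "SWI n": look up entry n and call through it. */
static int swi(uint8_t n, int arg)
{
    assert(n < SWI_COUNT && swi_table[n] != NULL);
    return swi_table[n](arg);
}
```

An application that calls `swi(0, 21)` gets 42 back. If the OS later replaces entry 0 with `svc_double_v2`, the same call still returns 42 and the application needs no changes, because it only ever depended on the number 0 and never on an address.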

Interrupt Latency

This is the time delay between the interrupt signal being asserted and the start of ISR execution. Interrupt latency can be of major concern in high-speed real-time applications, but just reading a figure off a processor chip’s datasheet may not tell the whole story.

- In most systems, the ‘jump’ to the ISR doesn’t take place until the processor has finished executing the instruction being worked on when the interrupt came in. This of course introduces random jitter into the latency.

- Does the ISR begin before or after any software ‘housekeeping’ code such as the context-saving Push instructions in Listing 2? Some processor cores such as those from ARM will perform context-saving and restoration automatically and much more quickly.

Interrupt Priority

The problem of resolving simultaneous interrupts is handled by giving them all individual priorities. ‘Natural’ priorities are often assumed, with position in the IVT being the deciding factor: entries at the bottom of the table have the highest priority, through to the lowest at the top. In addition, Interrupt Priority Registers (IPR) are provided to re-assign priorities if necessary. Personally, I’ve found that most embedded projects will work with the default values.

Interrupt Nesting

Most hardware will support nesting, the essential feature of which is the ability of a higher priority interrupt to interrupt a running lower priority ISR. It’s usual to assign the highest priority to the least-frequent interrupts. So, for example, a very slow line-printer on an old mainframe (see heading photo) would have priority over the paper-tape readers and punches, with magnetic drum or tape storage further down the pecking order. Get the priorities right, and all these devices will operate at optimum speed. Get them wrong, and everything slows to a crawl.
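Priority resolution and nesting boil down to one decision: given what is pending and what is already running, which interrupt (if any) goes next? Here is a minimal C sketch of that decision. The number of sources, the priority values and the `next_irq` name are assumptions, and ties are broken in favour of the lower vector number to mimic a ‘natural’ priority order.

```c
#include <stdint.h>

#define NUM_IRQS 4   /* hypothetical number of interrupt sources */

/* Returns the index of the pending interrupt that should run next,
   or -1 if none qualifies.
   - pending_mask: bit i set means interrupt i is waiting.
   - priority[i]:  assigned priority, larger means more urgent.
   - current_level: priority of the ISR already running (0 = main code).
   An interrupt pre-empts only if its priority exceeds current_level. */
static int next_irq(uint8_t pending_mask,
                    const uint8_t priority[NUM_IRQS],
                    uint8_t current_level)
{
    int best = -1;
    for (int i = 0; i < NUM_IRQS; i++) {
        if (!(pending_mask & (1u << i)))
            continue;                       /* not pending */
        if (priority[i] <= current_level)
            continue;                       /* cannot pre-empt */
        if (best < 0 || priority[i] > priority[best])
            best = i;                       /* strictly higher wins */
    }
    return best;
}
```

With priorities `{4, 4, 6, 2}`, interrupts 0 and 1 pending together resolve to 0 (the tie goes to the lower vector), while interrupt 3 arriving during a level-4 ISR has to wait and interrupt 2 nests straight in.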

Finally

Interrupt handling still strikes fear into the hearts of programmers. It shouldn’t, as long as they take note of: ‘Probably the single biggest reason for erratic interrupt operation’ mentioned above.
