Beyond Hearing - An AR interface that helps Deaf people localise sound

Introduction

Beyond Hearing is an interface designed to let Deaf people intuitively localise and experience sound on an AR device through a multi-sensory system. The design makes more information about sound accessible to the Deaf community.

Motivation:

My sister experienced a trauma and has suffered from hearing loss since 2021. She lost the hearing in her right ear, which prevents her from identifying the source of a sound correctly.

1 in 6 people in the UK has hearing loss, and 448M people worldwide have hearing difficulties. Sound localisation and engagement with sound are major issues in Deaf people's daily lives. 80% of my interviewees from the international Deaf community mentioned that these issues limit their activities and their experience of sound.

Many current products, such as hearing aids and cochlear implants, try to enhance Deaf people's ability to recognise sound, but problems remain. Hearing aids, for example, amplify everything, are expensive, and cannot help Deaf people tell the direction of a sound because they are not built for spatial hearing.

Problem:

There is still no mature solution for indicating the location of sound and supporting engagement with sound for Deaf people.

Many devices translate sound into other sensory signals so that Deaf people can experience it. For instance, the Neo Sensory Buzz is a band that vibrates differently in response to different sounds, Vibrotextile is a shirt that translates music into vibration, and AR glasses turn dialogue into captions. Nevertheless, these devices only convey the sound signal to users; they do not tell them where the sound is located.

Approach:

My approach follows a design process that includes exploration, interviews, experiments, prototyping, iteration and user tests.

Exploration:

What are better ways to present the shape of sound and indicate its location?

Shape of sound

What is the shape of sound in hard-of-hearing people's minds? I collected sounds at different places in London and used p5.js code to generate different shapes from the various environmental sounds. But in the minds of people with hearing loss, sound is just a simple circle without many patterns on it. User testing was a good way to experiment, but the shape needs more information to indicate the location of sound.
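The shape-generation idea above can be sketched roughly as follows. The original experiment used p5.js; this sketch is in Python to match the rest of the software described later, and the mapping itself (the hypothetical `sound_outline` function, `base_radius` and `gain`) is an assumption for illustration, not the code used in the project. It deforms a circle according to the loudness of each audio sample, so a silent recording yields the plain circle the interviewees described.

```python
import math

def sound_outline(samples, base_radius=100.0, gain=50.0):
    """Return (x, y) points of a closed outline deformed by audio samples.

    samples: amplitude values, assumed to be normalised to -1..1.
    base_radius and gain are hypothetical drawing parameters.
    """
    n = len(samples)
    points = []
    for i, sample in enumerate(samples):
        angle = 2 * math.pi * i / n                 # spread samples around the circle
        radius = base_radius + gain * abs(sample)   # louder sample pushes the outline outwards
        points.append((radius * math.cos(angle), radius * math.sin(angle)))
    return points
```

Passing the points to any drawing library as a closed polygon gives one shape per recording; louder or more varied environments produce more irregular outlines.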

Tattoo

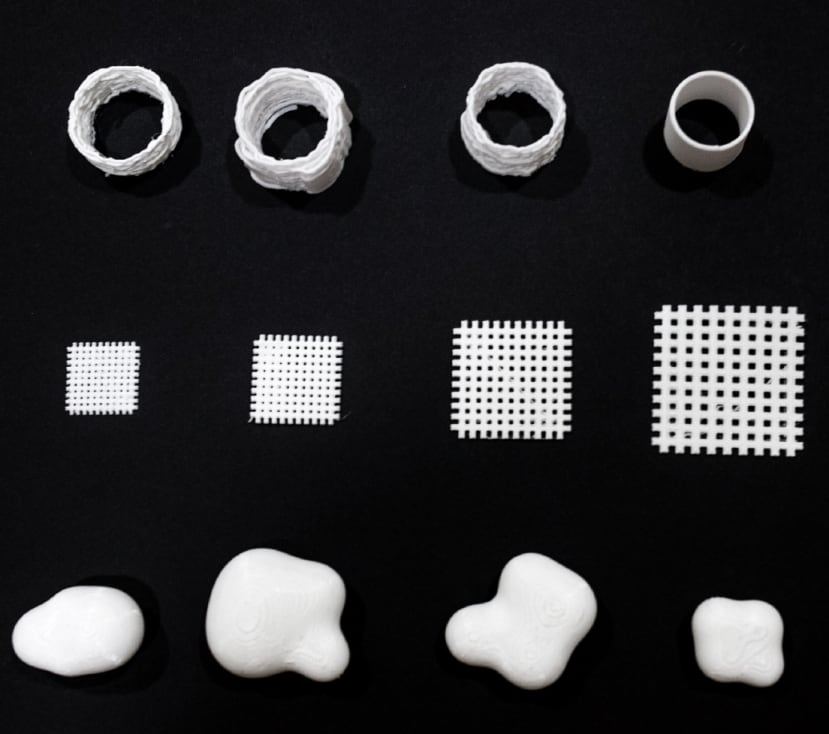

The tattoo-like interface presents the direction of a sound through tactile feedback at different positions on a tattoo grid. However, the movement across the tattoo is not clear: the grid points are so close together that users cannot easily distinguish them and tell where the sound comes from.

Metaball

The metaball shape changes with the environment. For example, if the surroundings are quiet or the sound is gentle, the shape becomes smoother. If the environmental sound is sharp or high-pitched, the 3D form changes and feels different to the touch. It behaves like a dynamic fluid sphere, changing shape with various sounds.

Conclusion

After user tests and feasibility considerations, the Tattoo and Metaball interfaces were not suitable solutions at this stage, so I moved my focus to my next idea: an Augmented Reality interface that indicates the direction of sound.

Experiment:

What are better ways to present the shape of sound and indicate its location?

AR interface glasses

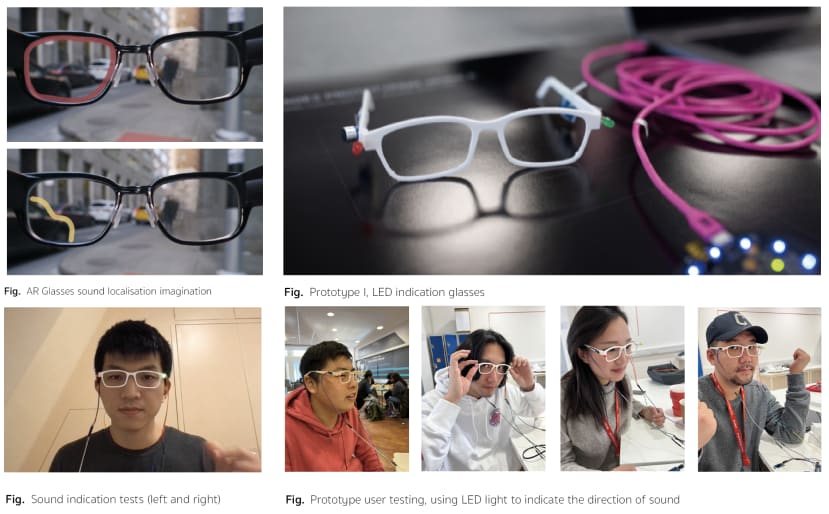

To quickly check whether indicating sound direction on an AR display works well, I added LEDs and sound sensors to the sides of a pair of 3D-printed glasses.

The first test was a success: the LEDs on the sides blink in response to the surrounding sound.

The user tests also received positive feedback. For example, one participant said that the LEDs on the sides are good because they signal that there is a sound without blocking the whole scene.
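The behaviour of this first LED prototype can be sketched as a simple threshold check. This is a minimal illustration, not the code that ran on the glasses: it assumes one sound sensor per side with readings normalised to 0..1, and the function name `leds_to_light` and the `threshold` value are hypothetical.

```python
def leds_to_light(left_level, right_level, threshold=0.3):
    """Decide which side LEDs should be on for one pair of sensor readings.

    left_level, right_level: sound levels, assumed normalised to 0..1.
    threshold: hypothetical level above which a side counts as "hearing" a sound.
    """
    return {
        "left": left_level >= threshold,
        "right": right_level >= threshold,
    }
```

Calling this in a loop and switching each LED according to the result makes the LED on the side nearer the sound blink, while quiet surroundings leave both LEDs off.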

Direction:

Create an Augmented Reality interface for Deaf people to localise sound and experience the shape of sound

The interviews indicate that 80% of hearing-impaired people struggle to localise sound and therefore fail to detect dangerous situations or participate fully in conversations, so the solution has a threefold focus:

1) localise sound with visuals (AR)

2) localise with vibration (multi-senses)

3) add-on: visualise more contextual information, e.g. the category of sound, whether it signals danger, etc.
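The second point, localising with vibration, could for example be sketched as a left/right pan between two haptic motors. This is only an illustration under stated assumptions: the article does not describe the actual mapping, the angle convention (0 = straight ahead, 90 = right, 270 = left) is assumed, and `haptic_levels` is a hypothetical name.

```python
import math

def haptic_levels(angle_deg):
    """Map a sound direction to (left, right) motor strengths in 0..1.

    Assumed convention: 0 = straight ahead, 90 = right, 270 = left.
    """
    pan = math.sin(math.radians(angle_deg))  # -1 (fully left) .. +1 (fully right)
    left = (1.0 - pan) / 2.0
    right = (1.0 + pan) / 2.0
    return left, right
```

With this mapping a sound straight ahead and a sound directly behind both produce equal vibration on both sides, so the visual cue on the display would still be needed to tell front from back.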

Hardware:

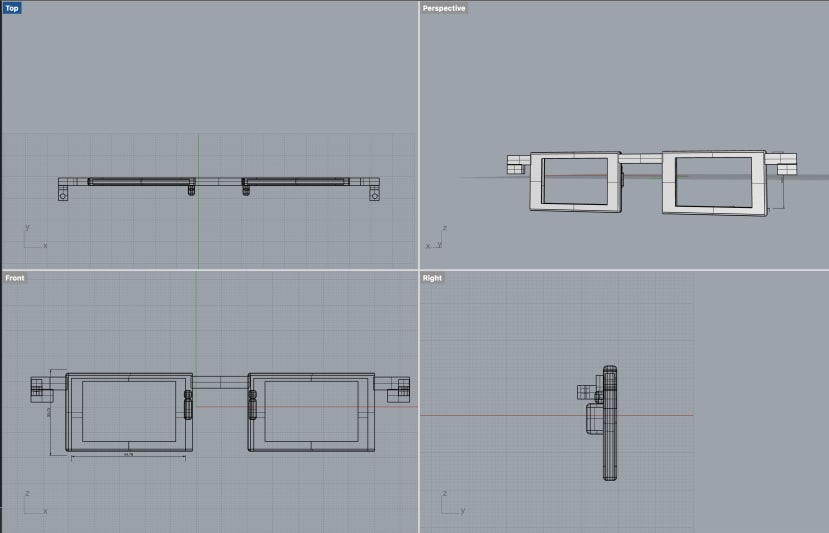

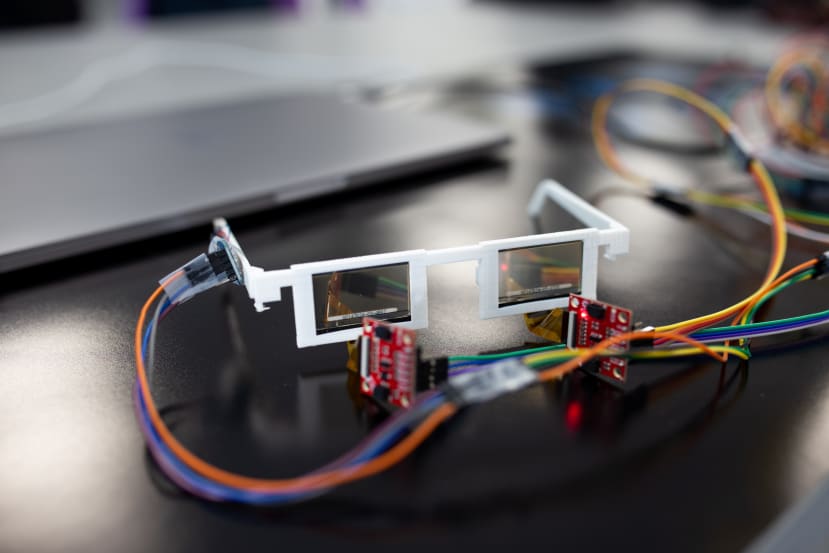

I designed the appearance of the glasses and combined sound sensors (ReSpeaker v2.0), haptic motors and a transparent display to make the AR glasses prototype II.

Software:

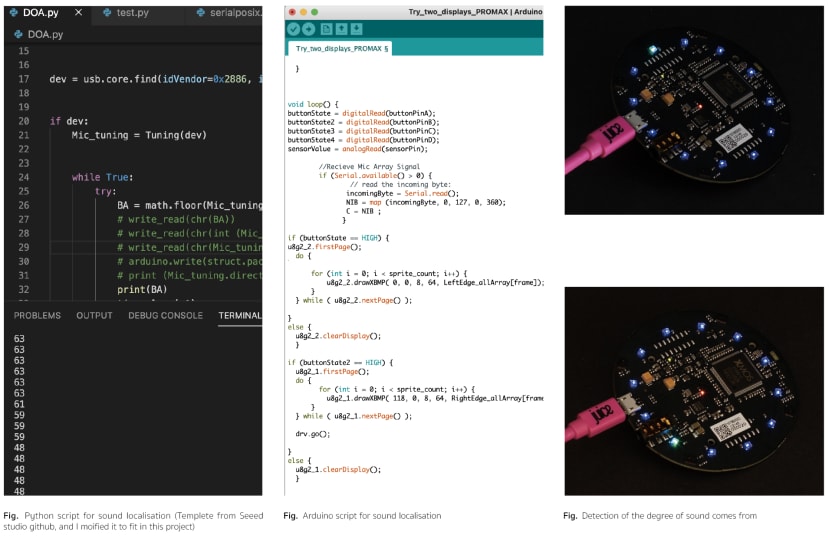

A Python script uses the sound sensor (ReSpeaker v2.0) to calculate the direction of the sound.
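The script itself is not reproduced here. As a sketch of one step such a script may need, assume the sensor yields a stream of direction readings in degrees (0 to 359). Individual readings can jitter, and a plain average breaks at the wrap-around (the average of 350 and 10 should be 0, not 180), so a circular mean is one common way to smooth them. The function name `circular_mean_deg` is hypothetical, and the project may not have used this smoothing at all.

```python
import math

def circular_mean_deg(angles_deg):
    """Average direction readings in degrees, handling the 359 -> 0 wrap-around."""
    # Sum the unit vectors for each angle, then take the direction of the sum.
    s = sum(math.sin(math.radians(a)) for a in angles_deg)
    c = sum(math.cos(math.radians(a)) for a in angles_deg)
    if abs(s) < 1e-9 and abs(c) < 1e-9:
        # Empty input or readings that cancel out (e.g. 0 and 180).
        raise ValueError("mean direction is undefined for these readings")
    return math.degrees(math.atan2(s, c)) % 360.0
```

Feeding the last few readings into this function gives a steadier angle to display than any single reading.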

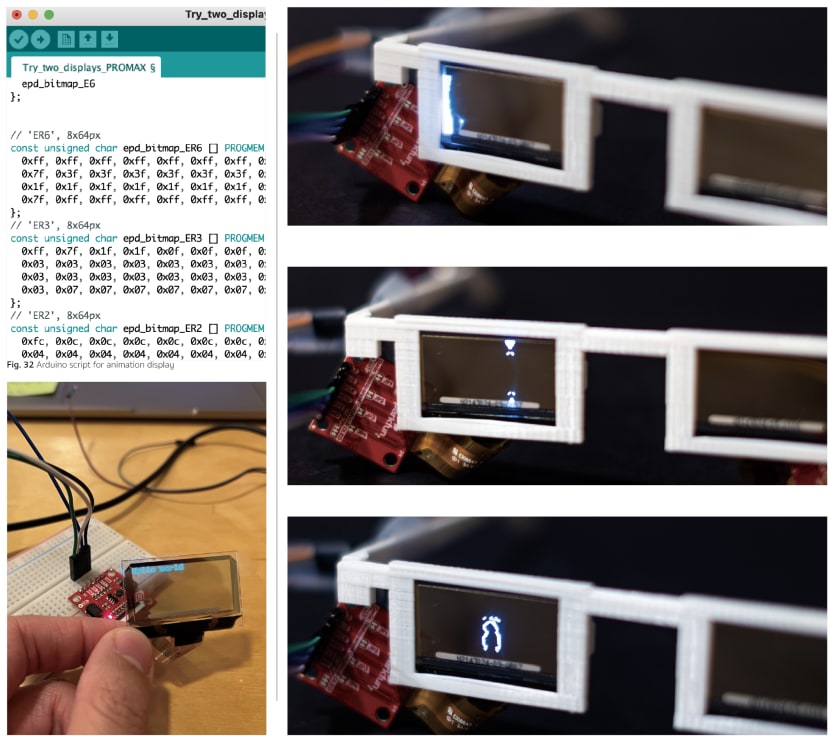

After obtaining the angle, in degrees, from which the sound came, I use PySerial to send it to the Arduino, which makes the transparent display show the pattern I designed at the location of the sound.
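A minimal sketch of this hand-over is shown below. The message format (the angle as ASCII text followed by a newline), the port name and the baud rate are assumptions for illustration; the article does not specify the protocol used between the Python script and the Arduino. Only `serial.Serial` and its `write` method come from PySerial; the helper names are hypothetical.

```python
def encode_angle(angle_deg):
    """Encode an angle as ASCII digits plus a newline (assumed message format)."""
    whole = int(round(angle_deg)) % 360   # keep the value within 0..359
    return f"{whole}\n".encode("ascii")

def send_angle(port, angle_deg):
    """Write one angle message to an open serial port (any object with write())."""
    port.write(encode_angle(angle_deg))

def open_and_send(port_name, angle_deg, baudrate=9600):
    """Open a serial port with PySerial and send one angle.

    port_name (e.g. "/dev/ttyACM0" or "COM3") and baudrate are hypothetical
    and must match the Arduino sketch.
    """
    import serial  # PySerial; imported here so the helpers above work without it
    with serial.Serial(port_name, baudrate, timeout=1) as port:
        send_angle(port, angle_deg)
```

On the Arduino side, the sketch would read one line at a time, parse the number and draw the pattern at the matching position on the display.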

Functional Demo:

User tests:

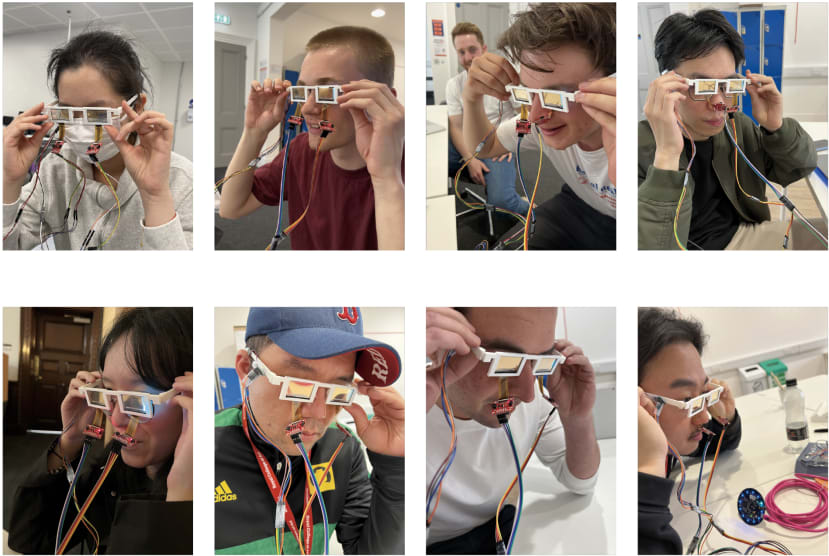

The user tests included 3 hard-of-hearing people and 8 members of my cohort.

Most of the feedback was quite positive. One deaf person mentioned that this is what she wants and that it really helps to indicate the location of sound.

The testers also suggested adding an on/off mode, since a device that is always on might cause information overload.

Reflection & Learning:

One of the challenges was engaging the Deaf community, because most people were defensive at first. Once I had built credibility and trust, I got to know their actual needs. During the design phase, keeping the device informative without causing sensory overload was also challenging. Moreover, everyone has their own interpretation of sound, so connecting sound with visual effects and haptic cues in a universal way is tricky. I managed to find a balance through multiple iterations and tests, and with more testing cycles the interface can become more comprehensive.

Outcome & conclusion:

A sound-localising AR interface that lets Deaf people know the direction of sound and unleashes a hearing superpower

Beyond Hearing is an augmented reality interface that lets Deaf people localise and experience sound through visual and tactile cues. In this project, the functional prototype demonstrated that it can localise sound and successfully transfer the location information from the sound sensor to the transparent display of the AR glasses, which serves as one of the carriers.

In this report, the basic functions and interface have been developed for various scenarios, for example alerts, communication and entertainment. However, there are more potential applications for this interface.
