Implementing CycleGAN with Jetson Nano and Google Colab: Turning Your Photos and Videos into Van Gogh Style – Training, Prediction, and Applications (Traditional Chinese)


Training CycleGAN

First, get the training data from the two loaders:

```python
import torch
from tqdm import tqdm
import torchvision.utils as vutils

# zip() stops at the shorter loader, so the number of steps per epoch is the minimum length
total_len = min(len(dataA_loader), len(dataB_loader))

for epoch in range(epochs):
    progress_bar = tqdm(enumerate(zip(dataA_loader, dataB_loader)), total=total_len)

    for idx, data in progress_bar:
        ############ define training data & label ############
        real_A = data[0][0].to(device)  # Van Gogh painting (domain A)
        real_B = data[1][0].to(device)  # real photo (domain B)
```

We train G first. Three criteria are used to evaluate the generators:

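1. Can it fool the discriminator? (Adversarial Loss)

For G_B2A, we convert real_B into fake_A, pass fake_A to D_A, and compute the MSE between D_A's output and a label of 1 (real). G_A2B is handled the same way with fake_B and D_B.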

```python
        ############ Train G ############
        optim_G.zero_grad()

        ############ Train G - Adversarial Loss ############
        fake_A = G_B2A(real_B)  # photo -> Van Gogh
        fake_out_A = D_A(fake_A)
        fake_B = G_A2B(real_A)  # Van Gogh -> photo
        fake_out_B = D_B(fake_B)

        # Target labels shaped like the discriminator output (1 = real, 0 = fake)
        real_label = torch.ones(fake_out_A.size(), dtype=torch.float32).to(device)
        fake_label = torch.zeros(fake_out_A.size(), dtype=torch.float32).to(device)

        # The generators want the discriminators to score their fakes as real
        adversial_loss_B2A = MSE(fake_out_A, real_label)
        adversial_loss_A2B = MSE(fake_out_B, real_label)
        adv_loss = adversial_loss_B2A + adversial_loss_A2B
```
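Using MSE against a label of 1 makes this a least-squares (LSGAN-style) adversarial loss, which is also the form the CycleGAN paper uses. The sketch below spells out the generator and discriminator objectives in plain Python; lists stand in for discriminator output maps, and the function names and scores are made up for illustration:

```python
def mse(outputs, target):
    """Mean squared error between a list of scores and a constant label."""
    return sum((o - target) ** 2 for o in outputs) / len(outputs)


def generator_adv_loss(d_fake):
    # The generator wants D to score its fakes as real (label 1)
    return mse(d_fake, 1.0)


def discriminator_loss(d_real, d_fake):
    # D wants real images scored as 1 and fakes scored as 0
    return mse(d_real, 1.0) + mse(d_fake, 0.0)


# Hypothetical discriminator scores
d_fake = [0.2, 0.4, 0.1, 0.3]
d_real = [0.9, 0.8, 1.0, 0.7]
print(round(generator_adv_loss(d_fake), 4))
print(round(discriminator_loss(d_real, d_fake), 4))
```

The two losses pull in opposite directions on `d_fake`: lowering the generator loss means pushing the fake scores toward 1, which raises the discriminator loss.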

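2. Can it reconstruct the input? (Cycle-Consistency Loss)

For example, G_B2A(real_B) produces a style-A image (fake_A). Feeding that back through G_A2B(fake_A) reconstructs a style-B image (rec_B), and we compute the L1 distance between real_B and rec_B.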

```python
        ############ G - Consistency Loss (Reconstruction) ############
        rec_A = G_B2A(fake_B)  # A -> B -> A
        rec_B = G_A2B(fake_A)  # B -> A -> B

        consistency_loss_B2A = L1(rec_A, real_A)
        consistency_loss_A2B = L1(rec_B, real_B)
        rec_loss = consistency_loss_B2A + consistency_loss_A2B
```
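Cycle consistency is easiest to see with toy mappings. In this plain-Python sketch, hypothetical one-dimensional "generators" stand in for G_A2B and G_B2A: when the reverse mapping exactly undoes the forward one, the L1 reconstruction loss is zero; when it ignores the mapping, the loss is positive. Real generators are only approximately inverse, which is exactly what the loss penalizes:

```python
def l1(xs, ys):
    """Mean absolute error between two equally long lists."""
    return sum(abs(x - y) for x, y in zip(xs, ys)) / len(xs)


# Toy stand-ins for G_A2B / G_B2A acting on lists of pixel values (hypothetical)
def g_a2b(xs):
    return [0.5 * x + 0.1 for x in xs]


def g_b2a_good(ys):
    return [(y - 0.1) / 0.5 for y in ys]  # exact inverse of g_a2b


def g_b2a_bad(ys):
    return list(ys)  # does not undo the mapping at all


real_A = [0.2, 0.6, 1.0]
cycle_good = l1(g_b2a_good(g_a2b(real_A)), real_A)  # A -> B -> A
cycle_bad = l1(g_b2a_bad(g_a2b(real_A)), real_A)
print(cycle_good, cycle_bad)
```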

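3. Does it leave the image unchanged? (Identity Loss)

Take G_A2B: when it is given a real_B image, which is already in style B, does it still output a style-B image that stays essentially the same as the input?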

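```python
        ############ G - Identity Loss ############
        idt_A = G_B2A(real_A)  # a Van Gogh image fed to the photo -> Van Gogh generator
        idt_B = G_A2B(real_B)  # a photo fed to the Van Gogh -> photo generator

        identity_loss_A = L1(idt_A, real_A)
        identity_loss_B = L1(idt_B, real_B)
        idt_loss = identity_loss_A + identity_loss_B

        ############ G - Total Loss, Backward & Update ############
        # Assumption: the article does not give its loss weights; 10 (cycle) and 5 (identity)
        # are the weights commonly used with CycleGAN
        loss_G = adv_loss + 10.0 * rec_loss + 5.0 * idt_loss
        loss_G.backward()
        optim_G.step()
```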
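The identity term and the weighted total can be sketched the same way in plain Python. The helper names are hypothetical, and the weights 10 (cycle) and 5 (identity) are an assumption taken from common CycleGAN defaults, since this article does not state the weights it used:

```python
def l1(xs, ys):
    """Mean absolute error (assuming L1 in the training code is a mean-reduced L1 loss)."""
    return sum(abs(x - y) for x, y in zip(xs, ys)) / len(xs)


def identity_loss(generator, real_target):
    # A generator that outputs domain B should leave a domain-B image (almost) unchanged
    return l1(generator(real_target), real_target)


def total_generator_loss(adv, rec, idt, lambda_cyc=10.0, lambda_idt=5.0):
    # Assumed weights: common CycleGAN defaults, not values stated in this article
    return adv + lambda_cyc * rec + lambda_idt * idt


def keeps_input(xs):  # ideal behaviour on target-domain inputs
    return list(xs)


def brightens(xs):  # shifts colours even when the input is already in domain B
    return [x + 0.1 for x in xs]


real_B = [0.3, 0.5, 0.7]
print(identity_loss(keeps_input, real_B))
print(identity_loss(brightens, real_B))
print(total_generator_loss(adv=0.5, rec=0.05, idt=0.02))
```

The large cycle weight is what stops the generators from producing any image that merely looks Van Gogh-like; the identity weight discourages unnecessary colour shifts.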

Next we train D. D only has to do its own job: decide whether an image in its style is real or generated.

```python
        ############ Train D ############
        optim_D.zero_grad()

        ############ D - Adversarial D_A Loss ############
        real_out_A = D_A(real_A)
        real_out_A_loss = MSE(real_out_A, real_label)
        # fake_A_sample is a buffer of past fakes; detach so D's loss does not backprop into G
        fake_out_A = D_A(fake_A_sample.push_and_pop(fake_A.detach()))
        fake_out_A_loss = MSE(fake_out_A, fake_label)  # score the fakes, not real_out_A
        loss_DA = real_out_A_loss + fake_out_A_loss

        ############ D - Adversarial D_B Loss ############
        real_out_B = D_B(real_B)
        real_out_B_loss = MSE(real_out_B, real_label)
        fake_out_B = D_B(fake_B_sample.push_and_pop(fake_B.detach()))
        fake_out_B_loss = MSE(fake_out_B, fake_label)
        loss_DB = real_out_B_loss + fake_out_B_loss

        ############ D - Total Loss ############
        loss_D = (loss_DA + loss_DB) * 0.5

        ############ Backward & Update ############
        loss_D.backward()
        optim_D.step()
```
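fake_A_sample and fake_B_sample are not defined in this part of the article. Their push_and_pop usage matches the image-history buffer described in the CycleGAN paper, where the discriminators are updated with a buffer of 50 previously generated images rather than only the newest ones. Below is a minimal plain-Python sketch, assuming 50/50 replacement once the buffer is full; the class name ImagePool is hypothetical, and real code would store and return tensors rather than list items:

```python
import random


class ImagePool:
    """History buffer of generated images (hypothetical stand-in for fake_A_sample).

    While filling, every new image is stored and returned. Once full, each incoming
    image is either returned as-is or swapped with a random stored one (50/50),
    so the discriminator also keeps seeing older fakes.
    """

    def __init__(self, max_size=50, rng=None):
        self.max_size = max_size
        self.data = []
        self.rng = rng or random.Random()

    def push_and_pop(self, images):
        out = []
        for img in images:
            if len(self.data) < self.max_size:
                self.data.append(img)  # still filling: store and return the new image
                out.append(img)
            elif self.rng.random() > 0.5:
                i = self.rng.randrange(self.max_size)
                out.append(self.data[i])  # return an older fake ...
                self.data[i] = img        # ... and keep the new one in its place
            else:
                out.append(img)
        return out


pool = ImagePool(max_size=3, rng=random.Random(0))
print(pool.push_and_pop(["f1", "f2", "f3"]))  # buffer fills up, returns the same images
print(pool.push_and_pop(["f4", "f5"]))        # may return older fakes instead
```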

Finally, we can print some progress information through tqdm:

```python
        ############ progress info ############
        progress_bar.set_description(
            f"[{epoch}/{epochs - 1}][{idx}/{total_len - 1}] "
            f"Loss_D: {(loss_DA + loss_DB).item():.4f} "
            f"Loss_G: {loss_G.item():.4f} "
            f"Loss_G_identity: {idt_loss.item():.4f} "
            f"loss_G_GAN: {adv_loss.item():.4f} "
            f"loss_G_cycle: {rec_loss.item():.4f}")
```

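A very important part of training a GAN is remembering to save the weights: the result after epoch 100 may well be better than after epoch 200, so checkpoints are usually saved every fixed number of epochs. Saving is simple, and the PyTorch website documents it. Broadly, there are two ways to save: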

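1. Save the model structure together with its weights:

```python
torch.save(model, "model.pth")
```

2. Save only the weights (the state_dict):

```python
torch.save(model.state_dict(), "model_weights.pth")
```

I save only the weights, which is also the approach the official PyTorch documentation recommends: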

```python
        # Every log_freq steps: save sample images and checkpoint the weights
        # (assumes the weights/ folder already exists)
        if idx % log_freq == 0:
            vutils.save_image(real_A, f"{output_path}/real_A_{epoch}.jpg", normalize=True)
            vutils.save_image(real_B, f"{output_path}/real_B_{epoch}.jpg", normalize=True)

            # Map the generator output from [-1, 1] back to [0, 1]
            fake_A = (G_B2A(real_B).data + 1.0) * 0.5
            fake_B = (G_A2B(real_A).data + 1.0) * 0.5
            vutils.save_image(fake_A, f"{output_path}/fake_A_{epoch}.jpg", normalize=True)
            vutils.save_image(fake_B, f"{output_path}/fake_B_{epoch}.jpg", normalize=True)

            torch.save(G_A2B.state_dict(), f"weights/netG_A2B_epoch_{epoch}.pth")
            torch.save(G_B2A.state_dict(), f"weights/netG_B2A_epoch_{epoch}.pth")
            torch.save(D_A.state_dict(), f"weights/netD_A_epoch_{epoch}.pth")
            torch.save(D_B.state_dict(), f"weights/netD_B_epoch_{epoch}.pth")
```

```python
    ############ Update learning rates (once per epoch) ############
    lr_scheduler_G.step()
    lr_scheduler_D.step()

############ save last check pointing ############
torch.save(G_A2B.state_dict(), "weights/netG_A2B.pth")
torch.save(G_B2A.state_dict(), "weights/netG_B2A.pth")
torch.save(D_A.state_dict(), "weights/netD_A.pth")
torch.save(D_B.state_dict(), "weights/netD_B.pth")
```
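lr_scheduler_G and lr_scheduler_D are stepped once per epoch, but their construction is not shown here. A common CycleGAN schedule keeps the learning rate constant for the first half of training and then decays it linearly to zero. The sketch below builds that multiplier in plain Python; the function name and the 200/100 epoch split are assumptions, not the article's settings:

```python
def linear_decay(n_epochs=200, decay_start_epoch=100):
    """Build an LR multiplier: 1.0 until decay_start_epoch, then linearly down to 0 at n_epochs."""
    def factor(epoch):
        return 1.0 - max(0, epoch - decay_start_epoch) / (n_epochs - decay_start_epoch)
    return factor


lr_factor = linear_decay(n_epochs=200, decay_start_epoch=100)
for epoch in (0, 100, 150, 199):
    print(epoch, round(lr_factor(epoch), 3))
```

In PyTorch, a function like this can be passed as `lr_lambda` to `torch.optim.lr_scheduler.LambdaLR`, for example `LambdaLR(optim_G, lr_lambda=linear_decay(epochs, epochs // 2))`; whether the article's schedulers work this way is an assumption.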

Testing

Testing is actually very simple; just follow the steps below:

1. Import the libraries

```python
import os
import torch
import torchvision.datasets as dsets
from torch.utils.data import DataLoader
import torchvision.transforms as transforms
from tqdm import tqdm
import torchvision.utils as vutils
```

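2. Build a dataset from the test data and load it through a DataLoader:

Here I created a custom folder to hold my own images, plus an output folder to make the results easy to inspect.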

```python
batch_size = 12
device = 'cuda:0' if torch.cuda.is_available() else 'cpu'

# Same preprocessing as in training: resize, convert to tensor, normalize to [-1, 1]
transform = transforms.Compose([transforms.Resize((256, 256)),
                                transforms.ToTensor(),
                                transforms.Normalize(mean=[0.5, 0.5, 0.5], std=[0.5, 0.5, 0.5])])

root = r'vangogh2photo'
# ImageFolder expects the images inside at least one sub-folder of custom/
targetC_path = os.path.join(root, 'custom')
output_path = os.path.join('./', r'output')

if not os.path.exists(output_path):
    os.mkdir(output_path)
    print('Create dir : ', output_path)

dataC_loader = DataLoader(dsets.ImageFolder(targetC_path, transform=transform),
                          batch_size=batch_size, shuffle=True, num_workers=4)
```
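ToTensor() scales pixel values to [0, 1], and Normalize with mean 0.5 and std 0.5 then maps them to [-1, 1], the same range as the generator's final Tanh. That is why the outputs are later converted back with `(x + 1.0) * 0.5`. A plain-Python sketch of both directions for a single channel value (the function names are illustrative only):

```python
def normalize(v, mean=0.5, std=0.5):
    # What transforms.Normalize does per channel: (x - mean) / std
    return (v - mean) / std


def denormalize(v):
    # What (out + 1.0) * 0.5 does to the generator's Tanh output
    return (v + 1.0) * 0.5


for pixel in (0.0, 0.5, 1.0):  # ToTensor() values lie in [0, 1]
    n = normalize(pixel)
    print(pixel, "->", n, "->", denormalize(n))
```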

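3. Instantiate the generator, load the weights (load_state_dict), and choose the mode (train or eval). In eval mode PyTorch automatically disables Dropout. Since I only need to convert real photos into Van Gogh style, only G_B2A is declared: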

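```python
# Get the generator (the Generator class from the model-building part)
G_B2A = Generator().to(device)

# Load the state dict; map_location lets GPU-trained weights load on a CPU-only machine
G_B2A.load_state_dict(torch.load(os.path.join("weights", "netG_B2A.pth"), map_location=device))

# Set model mode: eval() disables Dropout
G_B2A.eval()
```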
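Why eval() matters here: the generator's residual blocks include nn.Dropout(0.5) (use_dropout=True by default). PyTorch's Dropout is "inverted" dropout: in training mode it zeroes each value with probability p and scales the survivors by 1 / (1 - p); in eval mode it passes values through unchanged. A plain-Python sketch of that behaviour (the function is illustrative, not PyTorch's implementation):

```python
import random


def dropout(xs, p=0.5, training=True, rng=random):
    """Inverted dropout: while training, zero each value with probability p and
    scale the survivors by 1 / (1 - p); in evaluation mode return the input unchanged."""
    if not training:
        return list(xs)
    return [0.0 if rng.random() < p else x / (1.0 - p) for x in xs]


features = [1.0, 2.0, 3.0, 4.0]
print(dropout(features, training=True, rng=random.Random(1)))  # random mask
print(dropout(features, training=False))                        # identical to the input
```

Without eval(), the same photo would give a slightly different result on every run.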

4. Run the prediction:

Get the data > feed it into the model to get the output > save the images

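```python
progress_bar = tqdm(enumerate(dataC_loader), total=len(dataC_loader))

with torch.no_grad():  # inference only, no gradients needed
    for i, data in progress_bar:
        # get data (ImageFolder yields (images, labels); only the images are needed)
        real_images_B = data[0].to(device)

        # Generate output and map it from [-1, 1] to [0, 1]
        fake_image_A = 0.5 * (G_B2A(real_images_B).data + 1.0)

        # Save image files (each batch is saved as one grid image)
        vutils.save_image(fake_image_A.detach(), f"{output_path}/FakeA_{i + 1:04d}.jpg", normalize=True)

        progress_bar.set_description(f"Process images {i + 1} of {len(dataC_loader)}")
```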

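5. Check the results in the output folder:

Probably because I only trained for 100 epochs, the model has not yet learned the fine brushstroke details of Van Gogh's style. Try training longer; in theory, around 200 epochs should give good results.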

[Figure: ORIGINAL vs. TRANSFORM comparison images]

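Now that we can build, train, and run predictions, let's find a way to put the model to use! When it comes to Style Transfer applications, the first thing that comes to mind is the Style Transfer Azure Website provided by Microsoft.

Style Transfer on Azure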


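Taking a photo and having it converted right away feels great, and in principle we can do the same with a simple OpenCV program. Before implementing it, let's try out Style Transfer first.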

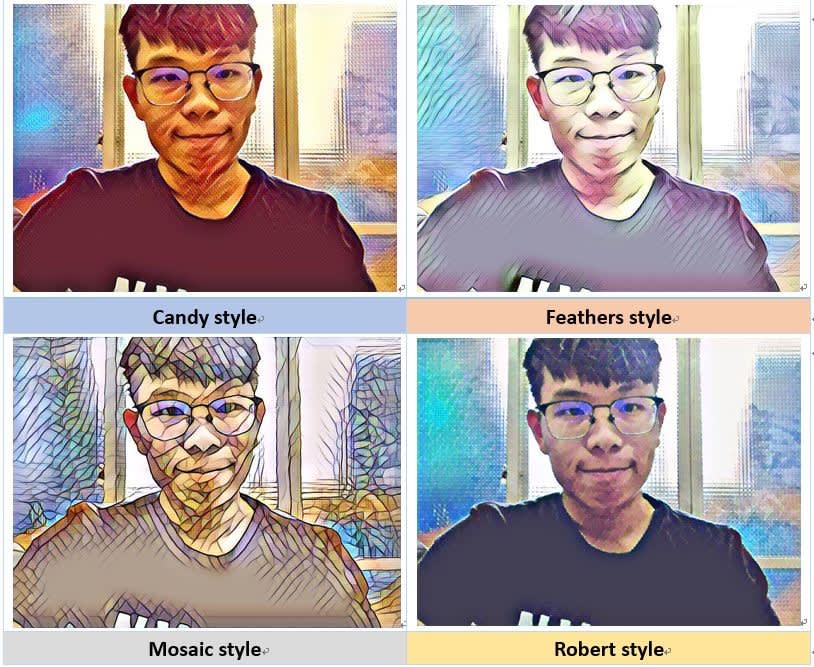

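Clicking Create brings you to this page. Click Capture to take a photo and convert it, or click Upload a picture to upload one. There are 4 styles to choose from.

It's really cool! So let's try to implement a similar feature ourselves.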

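Style Transfer on the Jetson Nano

1. First, copy the weights to the Jetson Nano

I created a weights folder, put the .pth file in it, and added a Jupyter Notebook at the same level.

2. Rebuild the generator and load the weights

You may run into version issues here; I had to upgrade to Torch 1.6 (the PyTorch installation steps are in the appendix at the end of this article). Back to the topic: remember that we saved only the weights, so we must build a model identical to the one used in training before we can load them. Let's copy the generator we wrote earlier: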

```python
import torch
from torch import nn


def conv_norm_relu(in_dim, out_dim, kernel_size, stride=1, padding=0):
    layer = nn.Sequential(nn.Conv2d(in_dim, out_dim, kernel_size, stride, padding),
                          nn.InstanceNorm2d(out_dim),
                          nn.ReLU(True))
    return layer


def dconv_norm_relu(in_dim, out_dim, kernel_size, stride=1, padding=0, output_padding=0):
    layer = nn.Sequential(nn.ConvTranspose2d(in_dim, out_dim, kernel_size, stride, padding, output_padding),
                          nn.InstanceNorm2d(out_dim),
                          nn.ReLU(True))
    return layer


class ResidualBlock(nn.Module):
    def __init__(self, dim, use_dropout):
        super(ResidualBlock, self).__init__()
        res_block = [nn.ReflectionPad2d(1),
                     conv_norm_relu(dim, dim, kernel_size=3)]
        if use_dropout:
            res_block += [nn.Dropout(0.5)]
        res_block += [nn.ReflectionPad2d(1),
                      nn.Conv2d(dim, dim, kernel_size=3, padding=0),
                      nn.InstanceNorm2d(dim)]
        self.res_block = nn.Sequential(*res_block)

    def forward(self, x):
        # Skip connection: add the block's output to its input
        return x + self.res_block(x)


class Generator(nn.Module):
    def __init__(self, input_nc=3, output_nc=3, filters=64, use_dropout=True, n_blocks=6):
        super(Generator, self).__init__()
        # Downsampling
        model = [nn.ReflectionPad2d(3),
                 conv_norm_relu(input_nc, filters * 1, 7),
                 conv_norm_relu(filters * 1, filters * 2, 3, 2, 1),
                 conv_norm_relu(filters * 2, filters * 4, 3, 2, 1)]
        # Bottleneck: residual blocks
        for _ in range(n_blocks):
            model += [ResidualBlock(filters * 4, use_dropout)]
        # Upsampling
        model += [dconv_norm_relu(filters * 4, filters * 2, 3, 2, 1, 1),
                  dconv_norm_relu(filters * 2, filters * 1, 3, 2, 1, 1),
                  nn.ReflectionPad2d(3),
                  nn.Conv2d(filters, output_nc, 7),
                  nn.Tanh()]
        # model is a list, but nn.Sequential takes the layers as separate arguments, so unpack it with *
        self.model = nn.Sequential(*model)

    def forward(self, x):
        return self.model(x)
```
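To check that the rebuilt generator returns an image of the same size it receives, the spatial size can be traced with the output-size formulas from the PyTorch documentation for Conv2d and ConvTranspose2d (dilation 1). The plain-Python sketch below walks a 256×256 input through the layers above; reflection padding is added to the size before a padding-free convolution, as in the model:

```python
def conv_out(size, kernel, stride=1, padding=0):
    # nn.Conv2d output size with dilation 1
    return (size + 2 * padding - kernel) // stride + 1


def deconv_out(size, kernel, stride=1, padding=0, output_padding=0):
    # nn.ConvTranspose2d output size with dilation 1
    return (size - 1) * stride - 2 * padding + kernel + output_padding


s = conv_out(256 + 2 * 3, 7)       # ReflectionPad2d(3) + 7x7 conv
s1 = conv_out(s, 3, 2, 1)          # downsample
s2 = conv_out(s1, 3, 2, 1)         # downsample
r = conv_out(s2 + 2 * 1, 3)        # residual block: pad 1 + 3x3 conv keeps the size
u1 = deconv_out(s2, 3, 2, 1, 1)    # upsample
u2 = deconv_out(u1, 3, 2, 1, 1)    # upsample
s_out = conv_out(u2 + 2 * 3, 7)    # ReflectionPad2d(3) + final 7x7 conv
print(s, s1, s2, r, u1, u2, s_out)
```

The output_padding=1 in the transposed convolutions is what makes each upsampling step exactly double the size (64 to 128 to 256).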

Next, instantiate the model and load the weights:

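```python
import os
import torch


def init_model():
    device = 'cuda:0' if torch.cuda.is_available() else 'cpu'
    G_B2A = Generator().to(device)
    # map_location lets weights trained on Colab's GPU load on whichever device is available
    G_B2A.load_state_dict(torch.load(os.path.join("weights", "netG_B2A.pth"), map_location=device))
    G_B2A.eval()
    return G_B2A
```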

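3. Take a photo on the Jetson Nano

I first wrote a helper function that runs the model. The input image must get the same transform as in training: resize to 256, convert to a tensor, and normalize. The unsqueeze(0) call adds a batch dimension, so a single image is fed in as a batch of size 1: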

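```python
import torch
import torchvision.transforms as transforms


def test(G, img):
    device = 'cuda:0' if torch.cuda.is_available() else 'cpu'
    # Same preprocessing as in training
    transform = transforms.Compose([transforms.Resize((256, 256)),
                                    transforms.ToTensor(),
                                    transforms.Normalize(mean=[0.5, 0.5, 0.5], std=[0.5, 0.5, 0.5])])
    data = transform(img).to(device)  # (3, 256, 256)
    data = data.unsqueeze(0)          # add a batch dimension -> (1, 3, 256, 256)
    with torch.no_grad():
        # Map the Tanh output from [-1, 1] to [0, 1] and drop the batch dimension
        out = (0.5 * (G(data).data + 1.0)).squeeze(0)
    return out
```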
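test() returns a (C, H, W) tensor with values in [0, 1], while OpenCV works with (H, W, C) arrays and JPEG files store 8-bit values. The plain-Python sketch below traces that bookkeeping: the shape changes made by unsqueeze/squeeze and transpose([1, 2, 0]), and the clip-and-scale step to 0..255. The helper names are illustrative; note that this sketch rounds, while NumPy's astype(np.uint8) truncates:

```python
def chw_to_hwc(shape):
    # .transpose([1, 2, 0]) moves the channel axis last: (C, H, W) -> (H, W, C)
    c, h, w = shape
    return (h, w, c)


def to_uint8(values):
    """Scale floats in [0, 1] to 0..255, clipping out-of-range values first."""
    return [int(round(min(max(v, 0.0), 1.0) * 255)) for v in values]


batch_shape = (1, 3, 256, 256)   # after unsqueeze(0)
chw_shape = batch_shape[1:]      # after squeeze(0)
print(chw_to_hwc(chw_shape))     # the layout OpenCV expects
print(to_uint8([-0.1, 0.0, 0.5, 1.0, 2.0]))
```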

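Next we use OpenCV to take the photo: press q to quit and s to save. When s is pressed, we run the style transfer and save the image in both styles. Note that the torchvision transforms expect a PIL image, so OpenCV's NumPy array has to be converted to a PIL.Image: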

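```python
import cv2
import numpy as np
from PIL import Image

if __name__ == '__main__':
    G = init_model()
    trans_path = 'test_transform.jpg'
    org_path = 'test_original.jpg'
    cap = cv2.VideoCapture(0)

    while True:
        ret, frame = cap.read()
        if not ret:  # the camera returned no frame
            break
        cv2.imshow('webcam', frame)
        key = cv2.waitKey(1)

        if key == ord('q'):
            break
        elif key == ord('s'):
            # OpenCV frames are BGR; the model was trained on RGB images
            rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
            output = test(G, Image.fromarray(rgb))
            # (C, H, W) tensor in [0, 1] -> (H, W, C) array, then back to BGR for OpenCV
            style_img = np.ascontiguousarray(np.array(output.cpu()).transpose([1, 2, 0]))
            style_img = cv2.cvtColor(style_img, cv2.COLOR_RGB2BGR)
            org_img = cv2.resize(frame, (256, 256))
            cv2.imwrite(trans_path, (style_img * 255).astype(np.uint8))
            cv2.imwrite(org_path, org_img)
            break

    cap.release()
    cv2.destroyAllWindows()
```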

This is what it looks like when running.

Finally, display the two images side by side:

```python
# Both images are 256x256 and scaled to [0, 1]; axis=1 stacks them horizontally
res = np.concatenate((style_img, org_img / 255), axis=1)
cv2.imshow('res', res)
cv2.waitKey(0)
cv2.destroyAllWindows()
```
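One detail worth keeping in mind in the capture code: cv2.VideoCapture returns frames with channels in BGR order, while Image.fromarray and the torchvision pipeline treat the array as RGB. Swapping the channels is just reversing each pixel's channel order. The plain-Python sketch below shows that reversal on a tiny hypothetical "image"; on NumPy arrays the same effect comes from `cv2.cvtColor(img, cv2.COLOR_BGR2RGB)` or `img[..., ::-1]`:

```python
def bgr_to_rgb(pixel_rows):
    """Reverse the channel order of every pixel (BGR <-> RGB).

    Applying it twice gives back the original, so the same function
    also converts the model's RGB output back to OpenCV's BGR.
    """
    return [[tuple(reversed(px)) for px in row] for row in pixel_rows]


# A 1x2 image as OpenCV stores it: a pure-blue pixel, then a pure-red pixel
frame_bgr = [[(255, 0, 0), (0, 0, 255)]]
frame_rgb = bgr_to_rgb(frame_bgr)
print(frame_rgb)
```

Skipping this conversion does not crash anything, but the generator then sees red and blue swapped compared with its training data.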

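Real-Time Video Style Transfer on the Jetson Nano

The idea is the same as converting a photo, except that here we apply the style transfer right after grabbing each frame from the camera. I added an extra check: pressing t toggles style transfer on and off, and cv2.putText shows the current mode label in the top-left corner.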

```python
import cv2
import numpy as np
from PIL import Image

if __name__ == '__main__':
    G = init_model()
    cap = cv2.VideoCapture(0)
    change_style = False
    save_img_name = 'test.jpg'
    cv2text = ''

    while True:
        ret, frame = cap.read()
        if not ret:
            break

        # Do Something Cool
        ############################
        if change_style:
            # BGR -> RGB for the model, then back to BGR for display
            rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
            style_img = test(G, Image.fromarray(rgb))
            out = np.ascontiguousarray(np.array(style_img.cpu()).transpose([1, 2, 0]))
            out = cv2.cvtColor(out, cv2.COLOR_RGB2BGR)  # float image in [0, 1]
            cv2text = 'Style Transfer'
        else:
            out = frame  # uint8 image in [0, 255]
            cv2text = 'Original'

        out = cv2.resize(out, (512, 512))
        out = cv2.putText(out, f'{cv2text}', (20, 40), cv2.FONT_HERSHEY_SIMPLEX,
                          1, (255, 255, 255), 2, cv2.LINE_AA)
        ###########################

        cv2.imshow('webcam', out)
        key = cv2.waitKey(1)

        if key == ord('q'):
            break
        elif key == ord('s'):
            if change_style:
                # Styled frame is float; clip (putText wrote 255) and scale to 0..255
                cv2.imwrite(save_img_name, (np.clip(out, 0, 1) * 255).astype(np.uint8))
            else:
                cv2.imwrite(save_img_name, out)
        elif key == ord('t'):
            change_style = not change_style  # toggle style transfer

    cap.release()
    cv2.destroyAllWindows()
```
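To put a number on the latency of the real-time loop on the Nano (for example before and after trying a TensorRT conversion), a moving-average FPS counter is enough. The sketch below is a hypothetical helper, not part of the article's code; it accepts optional timestamps only so that it can be tested without a camera:

```python
import time
from collections import deque


class FPSMeter:
    """Moving-average frames-per-second counter (hypothetical helper)."""

    def __init__(self, window=30):
        self.stamps = deque(maxlen=window)  # timestamps of the most recent frames

    def tick(self, now=None):
        # Record when a frame was shown and return the current FPS estimate
        self.stamps.append(time.perf_counter() if now is None else now)
        if len(self.stamps) < 2:
            return 0.0
        elapsed = self.stamps[-1] - self.stamps[0]
        return (len(self.stamps) - 1) / elapsed if elapsed > 0 else 0.0


meter = FPSMeter(window=5)
for t in (0.0, 0.1, 0.2, 0.3, 0.4):  # simulated timestamps, 100 ms apart
    fps = meter.tick(t)
print(round(fps, 2))
```

Inside the camera loop you would call `fps = meter.tick()` once per frame and, for instance, append `f' {fps:.1f} FPS'` to the text passed to cv2.putText.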

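Real-time style transfer results

Conclusion

This wraps up GAN-based image style transfer. Training this style transfer example on Colab is still fairly demanding: even though we only trained for 100 epochs, it took more than half a day. But GANs demand patience; unless you train them for days on end, they will not give you very good results.

For inference, the Jetson Nano still plays an important role. There is some latency, but the result is quite decent. Converting the model to TensorRT via ONNX should speed it up considerably. Stay tuned for the next GAN example, or leave a comment to tell me what you would like to see.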

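Appendix – Installing Torch 1.6 on the Nano

First, JetPack must be upgraded to 4.4; otherwise the CUDA version will not match. NVIDIA's official site has an upgrade guide, so I won't repeat it here.

Update PyTorch and its dependencies to version 1.6: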

```shell
$ wget https://nvidia.box.com/shared/static/yr6sjswn25z7oankw8zy1roow9cy5ur1.whl -O torch-1.6.0rc2-cp36-cp36m-linux_aarch64.whl
$ sudo apt-get install python3-pip libopenblas-base libopenmpi-dev
$ pip3 install Cython
$ pip3 install torch-1.6.0rc2-cp36-cp36m-linux_aarch64.whl
```

Update TorchVision to the matching version:

```shell
$ sudo apt-get install libjpeg-dev zlib1g-dev
$ git clone --branch v0.7.0 https://github.com/pytorch/vision torchvision
$ cd torchvision
$ export BUILD_VERSION=0.7.0  # where 0.x.0 is the torchvision version
$ sudo python3 setup.py install  # use python3 if installing for Python 3.6
$ cd ../  # attempting to load torchvision from build dir will result in import error
```
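After installing, a quick sanity check helps confirm that the packages this article imports are present before running the camera scripts. The script below is a sketch that uses only the standard library to look the packages up; the package list is an assumption based on the imports used above:

```python
import importlib
import importlib.util


def report(packages=("torch", "torchvision", "cv2", "PIL")):
    """Map each package to its version, "installed" if it has no __version__,
    "import failed" if it is present but cannot be imported, or None if missing."""
    found = {}
    for name in packages:
        if importlib.util.find_spec(name) is None:
            found[name] = None
            continue
        try:
            module = importlib.import_module(name)
        except Exception:  # a broken install can raise more than ImportError
            found[name] = "import failed"
            continue
        found[name] = getattr(module, "__version__", "installed")
    return found


if __name__ == "__main__":
    versions = report()
    for pkg, version in versions.items():
        print(f"{pkg}: {version or 'NOT installed'}")
    if versions.get("torch") not in (None, "import failed"):
        import torch
        print("CUDA available:", torch.cuda.is_available())
```

On a correctly set up Nano you would expect torch to report 1.6.0 and CUDA to be available.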
