The World of FPGAs and ASICs: Part 2

In Part 1 of this two-part post, I looked at the origins of programmable hardware, which has reduced the chip count of many PCB designs. Part 2 looks at how the FPGA is now replacing microprocessor and DSP chips in many high-speed processing applications. Or is it?

Inside the FPGA

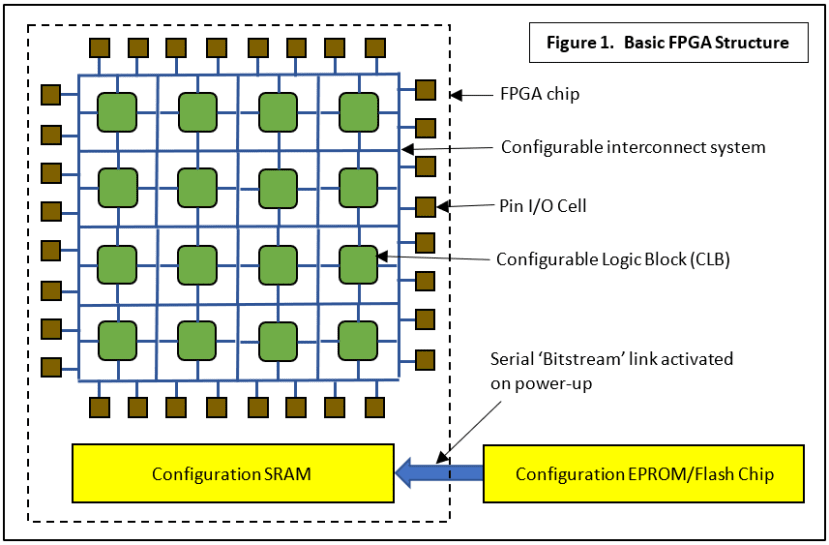

The internals of a basic FPGA device were introduced in Part 1, and they are shown in very simplified form in Fig.1. There are four main components:

- An array of Configurable Logic Blocks or CLBs. Each of these lets you create a combinational logic function, equivalent to some arrangement of AND, OR and NOT gates, by configuring a Look-Up Table or LUT: the LUT simply stores the function’s truth table, and the logic inputs select which stored bit appears at the output. There may also be sequential logic components (flip-flops or latches), allowing a simple State-Machine to be set up.

- An Interconnect System which can link almost any CLB output to any CLB input across the device.

- Input/Output Cells. These contain buffers and latches for communicating with the outside world via the device pins.

- Configuration Memory. Normally volatile SRAM for speed, it’s automatically loaded from an external non-volatile Flash chip via a dedicated serial channel on boot-up. The configuration file data is called the FPGA ‘Bitstream’. Some recent chips use on-board Flash, dispensing with SRAM and its attendant boot delay.
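A LUT is easiest to picture as a tiny memory whose address lines are the logic inputs. The Python sketch below shows the idea for a 4-input LUT (a size assumed purely for illustration; LUT sizes vary between manufacturers). The hypothetical `build_lut` computes the 16 configuration bits for a given Boolean function, the kind of data that ends up in the Bitstream, and the hypothetical `lut_output` evaluates the configured LUT by using the inputs as an index into those bits.

```python
def build_lut(func, n_inputs):
    """Return the truth-table 'configuration' for an n-input LUT.

    Bit i of the result holds func's output when the inputs, read as a
    binary number (input 0 = least significant bit), equal i.
    """
    config = 0
    for index in range(2 ** n_inputs):
        bits = [(index >> k) & 1 for k in range(n_inputs)]
        if func(*bits):
            config |= 1 << index
    return config


def lut_output(config, *inputs):
    """Evaluate a configured LUT: the inputs simply select one stored bit."""
    index = sum(bit << k for k, bit in enumerate(inputs))
    return (config >> index) & 1


# Example function: f = (a AND b) OR ((NOT c) AND d)
f = lambda a, b, c, d: (a and b) or ((not c) and d)
cfg = build_lut(f, 4)
print(f"LUT configuration: 0x{cfg:04X}")  # → LUT configuration: 0x8F88
```

Any other 4-input function would fit the same LUT; only the 16 stored bits change, not the hardware.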

That’s about as general a description as you can get. The problem is that the precise definition of a CLB, LUT or LE (Logic Element) varies between manufacturers, making performance comparisons difficult, if not impossible. So one manufacturer may claim that a particular device contains 12,000 LUTs and another that its device has 6,000, but for the user this could be like comparing apples with oranges: a LUT with more inputs can implement more logic than one with fewer. Many new devices also have special complex-function blocks that perform common tasks, saving the user some design effort. These include:

- Phase-Locked Loop (PLL) block. These can be used to generate all the clock frequencies needed for a particular application.

- Multiplier. Multiplication of two numbers is a standard feature of almost every digital signal processing algorithm, and having multiple multiplier blocks available makes it easier to process parallel streams of data. If an adder is included after the multiply logic, then the block is usually described as a:

- Digital Signal Processing (DSP) block. It’s the ability to perform very fast multiply-adds, or more specifically multiply-accumulate (MAC) operations, that separates a basic microprocessor from a DSP chip. Having lots of DSP blocks available makes an FPGA a much better choice for video processing than a single dedicated DSP chip, because for this task parallel processing massively increases throughput.

- Serial communication. High-speed serial links using LVDS signalling, for example, are a common feature of digital video systems.

- Memory Controller blocks for external DDR SDRAM. By now you might be thinking: is it possible to implement the functions of a complete microprocessor system on an FPGA? Yes, it is.
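The multiply-accumulate operation at the heart of a DSP block can be sketched as a direct-form FIR filter. This Python version is only an illustration: `fir_mac` is a hypothetical name, and integer coefficients stand in for the fixed-point arithmetic DSP blocks typically use. A processor with a single MAC unit has to run the inner loop once per tap for every output sample; an FPGA can instead give each tap its own DSP block, so all the taps of one output are computed at the same time.

```python
def fir_mac(samples, coeffs):
    """Direct-form FIR filter written as a chain of multiply-accumulates.

    y[n] = sum over k of coeffs[k] * x[n - k], with x[m] = 0 for m < 0.
    """
    out = []
    for n in range(len(samples)):
        acc = 0  # plays the role of the accumulator register in a MAC unit
        for k, c in enumerate(coeffs):
            if n - k >= 0:
                acc += c * samples[n - k]  # one multiply-accumulate
        out.append(acc)
    return out


# A 4-tap moving-sum filter applied to a short integer sequence.
print(fir_mac([1, 2, 3, 4, 5], [1, 1, 1, 1]))  # → [1, 3, 6, 10, 14]
```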

There isn’t room here to go into more detail about the internals of an FPGA, but the article ‘All about FPGAs’, although dated, may help make the basics clearer.

Software Programming: Microprocessor

Developments in silicon technology have seen the number of transistors on an integrated circuit grow from a few dozen in the early 1960s to many billions in today’s largest chips. Around 1970 Intel developed a custom chip for Busicom, a Japanese manufacturer of electronic calculating machines. This calculator chip contained all the essential components of a computer’s Central Processing Unit (CPU), and in 1971 Intel marketed it as the 4004, the world’s first commercial 4-bit microprocessor. As well as shrinking general-purpose computers from a room full of cabinets to a box that would fit on a desktop, microprocessors could replace a mass of ‘discrete logic’ components in what we now call embedded designs.

Further development led to the microcontroller (MCU), optimised for embedded systems with its onboard non-volatile program memory, peripheral devices and input/output pins. This chip runs a user-generated program: a set of ‘instructions’ executed one after the other to control an external device, such as a motor, in real time. The microcontroller is cheap, replaces a lot of smaller chips and, here is the real benefit, its function is entirely created (programmed) by the end-user. Not only that, debugging and future updates may not involve any expensive hardware changes. The downside is that MCUs are limited to relatively low-speed applications because of the overhead of fetching and executing instructions one at a time from relatively slow non-volatile memory.
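To make that overhead concrete, here is a toy accumulator machine in Python. Its instruction set (`CLR`, `MAC`, `STORE`) and the `run` function are hypothetical, invented purely for illustration rather than taken from any real MCU. It shows that even a 4-term sum of products costs six separate instruction fetches, executed strictly one after another, and that every extra term adds another fetch.

```python
def run(program, memory):
    """Execute a program one instruction at a time, as a simple MCU core does.

    Hypothetical instruction set:
      CLR          clear the accumulator
      MAC (a, b)   accumulator += memory[a] * memory[b]
      STORE a      memory[a] = accumulator
    Returns the number of instructions fetched, a rough proxy for run time.
    """
    acc = 0
    fetches = 0
    # No jumps in this toy, so iterating over the list stands in for the
    # program counter stepping through program memory.
    for op, arg in program:
        fetches += 1  # every instruction must be fetched and decoded first
        if op == "CLR":
            acc = 0
        elif op == "MAC":
            a, b = arg
            acc += memory[a] * memory[b]
        elif op == "STORE":
            memory[arg] = acc
        else:
            raise ValueError(f"unknown opcode {op!r}")
    return fetches


# memory[0:4] = samples, memory[4:8] = coefficients, memory[8] = result
memory = [1, 2, 3, 4, 10, 20, 30, 40, 0]
program = [("CLR", None), ("MAC", (0, 4)), ("MAC", (1, 5)),
           ("MAC", (2, 6)), ("MAC", (3, 7)), ("STORE", 8)]
fetches = run(program, memory)
print(memory[8], fetches)  # → 300 6
```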

Hardware Programming: FPGA

If your task is to perform some processing on an audio signal, then a fast microcontroller, perhaps one with onboard DSP hardware and appropriate additions to the instruction set, will probably suffice. But what if it’s a video signal? Until fairly recently, you would have been looking at a custom-manufactured device or ASIC (see Part 1), designed in hardware rather than software, with all the cost that entails. An FPGA, on the other hand, can have its function programmed, debugged and updated by the user just like an MCU. The MCU’s internal firmware Program Memory, normally Flash, corresponds to the FPGA’s hardware Configuration Memory, usually SRAM (Fig.1).

In order to develop code for an MCU-based application, you need Integrated Development Environment (IDE) software running on a PC: for example, MPLAB X® for Microchip PICs. This allows you to create a source code file in a high-level language such as C, compile it into a machine code file for the chosen MCU, and then download it into the device’s Flash program memory. A similar process is used to program the configuration memory of an FPGA using, for example, Quartus® Prime Design software for Intel/Altera FPGAs. Here, though, the source code is written in a Hardware Description Language (HDL), and the two main HDLs in use today are VHDL and Verilog. Both have the ‘look and feel’ of a software language (Verilog’s syntax resembles C, while VHDL’s is derived from Ada), but they describe hardware in which every part operates at the same time, rather than instructions executed one after another.

The design process is usually divided into two parts, first Behavioural and then Structural. Somewhat simplified, it looks like this:

- The Behavioural phase involves writing a source code file in VHDL or Verilog which defines the function of the configured FPGA. This, together with a simulated input data or Stimulus file, is fed into a simulator whose output is used to confirm that the described behaviour yields the correct outputs. When it does, after any necessary debugging, we move to the Structural phase.

- The source code is now processed by the Synthesizer, which tries to ‘design the hardware’. That is, knowing the resources available on the specified chip, it maps the design onto them; once the place-and-route stage has decided where each function sits and how it is wired, the configuration or Bitstream file can be generated.

- If this succeeds, the simulator once again uses the Stimulus file, this time to check that the synthesized hardware structure works. At this point, it may be necessary to tweak things like individual signal timings to get reliable operation.

- Now the configuration file can be loaded into the real configuration memory and the actual hardware tested to confirm that it really works.
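The idea of running one Stimulus file against both the behavioural description and the synthesized structure can be mimicked in Python. This is only an analogy: a real flow uses an HDL testbench in a simulator, and the names `adder_behavioural`, `full_adder` and `adder_structural` are hypothetical. The ‘behavioural’ model states what a 4-bit adder should do; the ‘structural’ one builds it from four full-adder cells made of simple gate functions, and every possible pair of inputs serves as the stimulus. Unlike the real post-synthesis check, this sketch says nothing about timing.

```python
def adder_behavioural(a, b):
    """What the design should do: a 4-bit sum plus carry-out."""
    total = a + b
    return total & 0xF, total >> 4


def full_adder(x, y, cin):
    """One full-adder cell built from XOR, AND and OR functions."""
    s = x ^ y ^ cin
    cout = (x & y) | (cin & (x ^ y))
    return s, cout


def adder_structural(a, b):
    """How it might be built: four full adders chained by their carries."""
    carry, result = 0, 0
    for i in range(4):
        s, carry = full_adder((a >> i) & 1, (b >> i) & 1, carry)
        result |= s << i
    return result, carry


# Stimulus: every possible pair of 4-bit inputs.
stimulus = [(a, b) for a in range(16) for b in range(16)]
mismatches = [(a, b) for a, b in stimulus
              if adder_structural(a, b) != adder_behavioural(a, b)]
print(f"{len(stimulus)} vectors, {len(mismatches)} mismatches")
# → 256 vectors, 0 mismatches
```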

Soft-Core Processors

As I suggested above, the huge capacity of modern FPGAs means that very complex systems can be implemented by the end-user. That includes complete microprocessor cores. ARM has made the design files for their FPGA-optimised Cortex-M1 core, part of the widely used Cortex-M family, free to download. Another example is the Parallax Propeller 1, an 8-core 32-bit device. The company made its FPGA-design Verilog files open-source some years ago. These have been optimised for two inexpensive ‘starter kits’ - the Terasic Cyclone IV DE0-Nano and Cyclone IV E DE2-115.

Hard-Core Processors: The System-on-Chip (SoC)

The next logical development is for the chip manufacturer to include processor core(s) as part of the device hardware. The programmable logic is used to provide high-speed application-specific processing while the core handles control and interfacing with the outside world. A popular device family is the Xilinx Zynq-7000 series of FPGA SoCs featuring an on-chip single- or dual-core ARM Cortex-A9 processor. The Red Pitaya oscilloscope and test instrument in the heading picture is based on one of these devices.

Is the FPGA just a development tool on the way to an ASIC?

Until a few years ago, one might have answered this with an unequivocal ‘Yes’ when aiming at end-product quantities in the hundreds of thousands. With those numbers the ASIC beats the FPGA because:

- No loading of a configuration memory is required, so the device is fully functional from power-up.

- The internal links are fixed metal tracks, without the propagation delays caused by the FPGA’s interconnection switching transistors. Hence the ASIC is faster and consumes less power.

- With no Bitstream to intercept or tamper with, the ASIC’s fixed metal links offer much better security against hackers than an FPGA.

- In high-reliability applications, the FPGA configuration SRAM is prone to corruption from power glitches and cosmic particles.

No contest? Well, as I said before, the ASIC is only economic in high production quantities, except of course for money-no-object Space and Military applications. However, Artificial Intelligence (AI) may just have given the FPGA a genuine role in life as a finished product.
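Why volume matters can be shown with a simple break-even model. This sketch assumes the ASIC carries a one-off non-recurring engineering (NRE) cost for design, verification and masks, and that both devices have a fixed unit price; `break_even_units` is a hypothetical helper and the figures are made up for illustration, not real prices. A real costing would add further items, such as the FPGA’s external configuration Flash and the risk of an ASIC respin.

```python
import math


def break_even_units(asic_nre, asic_unit_cost, fpga_unit_cost):
    """Smallest production volume at which the ASIC is no dearer than the FPGA.

    Total ASIC cost = asic_nre + n * asic_unit_cost
    Total FPGA cost = n * fpga_unit_cost
    """
    saving_per_unit = fpga_unit_cost - asic_unit_cost
    if saving_per_unit <= 0:
        raise ValueError("the ASIC never pays back unless its unit cost is lower")
    return math.ceil(asic_nre / saving_per_unit)


# Purely illustrative figures, not real prices.
n = break_even_units(asic_nre=2_000_000, asic_unit_cost=5.0, fpga_unit_cost=25.0)
print(f"{n:,} units")  # → 100,000 units
```

Below that volume the FPGA is the cheaper route, which is why the ASIC only wins in high production quantities.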

The FPGA as an Inference Engine

The latest devices now include large numbers of ‘DSP’ or Multiplier blocks. These were intended for real-time signal processing algorithms such as FIR filtering of sampled video signals. As it happens, Convolutional Neural Networks (CNNs) also make heavy use of the multiply-accumulate function. These AI algorithms have suddenly been catapulted from the realm of theoretical academic research into real engineering, because autonomous vehicles need object recognition as part of their vision systems. Neural networks are ‘trained’ to recognise objects by ‘showing’ them many pictures tagged to identify the object of interest. Training produces a very large file of weighting factors, which the Inference Engine in the vehicle uses to classify the objects it ‘sees’ in real time. This file is normally created off-line or in the ‘Cloud’ by very powerful computers: time and processing power are not constraints at that stage.
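The link between CNNs and DSP blocks is easiest to see in the convolution itself: every output value is a sum of multiply-accumulates. This single-channel Python sketch is a simplification (no padding, stride 1, and, as in most CNN frameworks, the kernel is not flipped, so strictly it is cross-correlation); `conv2d_valid` is a hypothetical name. A real layer, with many input and output channels over a full video frame, can need hundreds of millions of MACs, but each output value is independent of the others, so an FPGA can spread them across many DSP blocks working in parallel.

```python
def conv2d_valid(image, kernel):
    """2D convolution (no padding, stride 1) as nested multiply-accumulates.

    Returns the output feature map and the number of MAC operations used.
    """
    kh, kw = len(kernel), len(kernel[0])
    oh, ow = len(image) - kh + 1, len(image[0]) - kw + 1
    out = [[0] * ow for _ in range(oh)]
    macs = 0
    for r in range(oh):
        for c in range(ow):
            acc = 0  # each output value has its own accumulation
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
                    macs += 1
            out[r][c] = acc
    return out, macs


image = [[row * 4 + col for col in range(4)] for row in range(4)]  # 4x4, values 0..15
kernel = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]                         # 3x3 summing kernel
out, macs = conv2d_valid(image, kernel)
print(out, macs)  # → [[45, 54], [81, 90]] 36
```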

- A modern FPGA is well suited to the task of implementing an inference engine because the latter consists largely of interconnected DSP modules.

- Updating the network installed in the vehicle may involve more than just changing the weighting factors; the network topology might also have to be altered.

The ability to reprogram the network hardware could be crucial to the use of AI in embedded systems. Training a system is not the same as designing it: because the number and variety of images a network could meet is effectively limitless, it will never deliver 100% accuracy, so there will always be a need to update it while in service. This means that, for this application area at least, the FPGA may not be replaced by an equivalent ASIC at the end of the development phase.

Finally

FPGA application design and Artificial Neural Network development have at least one thing in common: there are very many ‘correct’ solutions to every task, some better than others. With industry’s interest in AI increasing massively in recent years, there has been a corresponding surge in demand for people with skills in these areas. If you have a lot of experience with VHDL or Verilog and know your way around ANNs and Deep Learning algorithms, I suggest you head to the nearest global vehicle manufacturer and name your price…

If you're stuck for something to do, follow my posts on Twitter. I link to interesting articles on new electronics and related technologies, retweeting posts I spot about robots, space exploration, and other issues.