The State of Quantum Computing

Let's just throw this out there: whatever device you are using to read this article is a finite state machine. Any device storing the status of something at a given time is a state machine. This status can change as a function of inputs and the current state to generate a new output status.
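To make the idea concrete, here is a minimal sketch of a finite state machine in Python. The coin-operated turnstile, its state names and its inputs are all hypothetical, chosen only for illustration: the next state depends on nothing but the current state and the input.

```python
# Hypothetical example: a coin-operated turnstile as a finite state machine.
# Each (current state, input) pair maps to exactly one next state.
TRANSITIONS = {
    ("locked", "coin"): "unlocked",
    ("locked", "push"): "locked",
    ("unlocked", "coin"): "unlocked",
    ("unlocked", "push"): "locked",
}


def run(state, inputs):
    """Step through the inputs one at a time and return the final state."""
    for symbol in inputs:
        state = TRANSITIONS[(state, symbol)]
    return state


final_state = run("locked", ["coin", "push", "push"])
print(final_state)
```

Everything the machine "knows" is held in the single variable `state`, which is what stepping sequentially through states looks like in practice.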

This concept is the basis of digital logic design and the algorithms that run on these designs. Sequentially stepping through states to solve problems has powered our computer revolution for the last fifty years. There are limits to this approach though, even when run on an exascale supercomputer. Some problems are just too hard to solve in a reasonable time frame. This is where quantum computers may come to the rescue.

Problems Even Supercomputers Find Hard

Despite the number-crunching power available, there are still many real-world problems that don’t play nicely with conventional computers. These tend to fall into the broad category of problems that scale exponentially and include solving combinatorial optimisations.

The classic way to demonstrate how exponentials rapidly get out of hand is with the apocryphal story of rice grains on a chess board. The story goes that a wandering sage brought the game of chess to the Emperor of China, who was so impressed by the intellectual challenge posed by the game that he decided to reward the sage with whatever his heart desired.

The sage had no desire for material wealth but wanted to make sure the poor were fed. So he requested:

“Give me one grain of rice on the first square of this chessboard, then two grains on the second square, four grains on the third square, eight grains on the fourth square and so on, so that each square contains double the amount of rice of the previous square.”

The Emperor thought he was on to a winner as his servants started counting out the rice grains: one grain on the first square, two grains on the second square, four grains on the third square and so on, completing the first row with 128 grains of rice on the eighth square.

By the end of the second row, when 32 768 grains of rice were being counted out, the Emperor realised things were going south rapidly. If we really were to go on counting to the 64th square, a total of 18 446 744 073 709 551 615 grains of rice would be needed. To put that into perspective, that amount of rice would pile up higher than Everest and weigh around 461 billion metric tonnes!
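The doubling is easy to check for yourself. The sketch below recomputes the figures in the story: square k holds 2^(k-1) grains, so the 64th square alone holds 2^63 grains and the whole board adds up to 2^64 - 1. The grain mass of 25 milligrams is an assumption, not part of the story; it happens to land on the weight quoted above.

```python
# Grains on each square: square k holds 2**(k - 1) grains.
grains_on_square = [2 ** (square - 1) for square in range(1, 65)]

eighth_square = grains_on_square[7]      # end of the first row
sixteenth_square = grains_on_square[15]  # end of the second row
last_square = grains_on_square[63]       # the 64th square on its own
total_grains = sum(grains_on_square)     # the whole board: 2**64 - 1

# Assumption (not from the story): an average grain weighs 25 milligrams.
GRAIN_MASS_MG = 25
MG_PER_TONNE = 10 ** 9
total_tonnes = total_grains * GRAIN_MASS_MG // MG_PER_TONNE

print(f"8th square:  {eighth_square:,}")
print(f"16th square: {sixteenth_square:,}")
print(f"64th square: {last_square:,}")
print(f"Whole board: {total_grains:,} grains, about {total_tonnes:,} tonnes")
```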

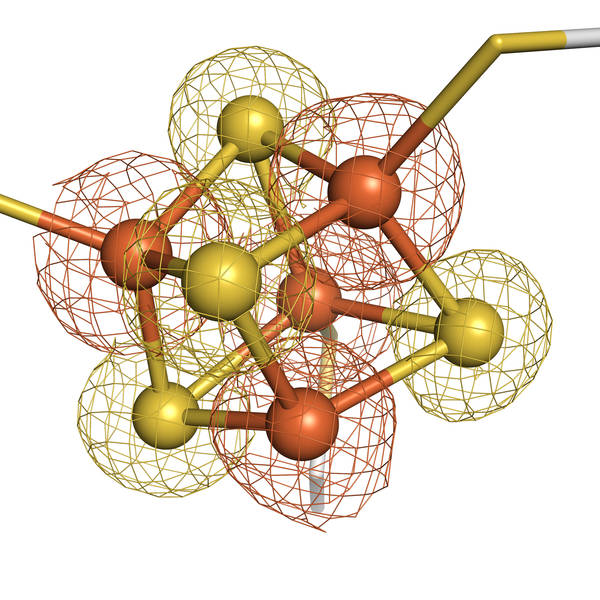

Scientists face similar scaling problems when trying to precisely simulate chemical reactions. One fundamental reaction that scientists would like to work with is this one:

FeS + H2S -> FeS2 + 2H+ + 2e-

Why is this interesting? Because this reaction is predicted to occur on the ocean floor around hydrothermal vents and is thought to be a first step in protein creation: the basis of life. Unfortunately, the largest iron sulphide cluster that can be simulated, even on the most powerful supercomputers, is the one shown above. Yes, that is just four atoms.

To accurately simulate the molecule, you have to simultaneously account for every single electron/electron repulsion and electron/nuclei attraction force. Change one electron and you have to recalculate all the electron energies again. Iron sulphide has on the order of 76 orbitals to account for, and these numbers grow exponentially as the molecule grows, quickly becoming intractable for conventional computing methods. Being able to solve the Schrödinger equation for complicated molecules would allow scientists to determine the properties of new materials without having to physically synthesise them.

Optimisations face similar difficulties with the rapid growth of complexity. Even a simple one, like seating 10 people round a table, has 10! (factorial) possible arrangements: that’s 3 628 800 different ways to seat your guests.
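A quick sketch shows how fast this gets out of hand. It counts the seatings by brute force for a small, hypothetical guest list, checks the count against the factorial, then prints the factorial for larger parties, where listing every arrangement is no longer practical.

```python
import itertools
import math


def count_seatings(guests):
    """Count every ordering of the guests by brute-force enumeration."""
    return sum(1 for _ in itertools.permutations(guests))


small_party = ["Ann", "Bob", "Cat", "Dan", "Eve"]  # hypothetical guests
assert count_seatings(small_party) == math.factorial(len(small_party))

for n in (5, 10, 15, 20):
    print(f"{n:>2} guests: {math.factorial(n):,} arrangements")
```

Enumeration is fine for five guests, but the count for twenty already runs to nineteen digits.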

When considering well-known computer science problems like the knapsack problem, minimum spanning tree, assignment problem and so on, the time taken to find the optimal solution rapidly becomes a problem as more elements are added. When brought into the real world of logistics, routing and scheduling, we often have to settle for approximations of the optimal solution.

The Quantum State Machine

Quantum mechanics is a strange and mysterious world full of mind-bending concepts. Although quantum computers take advantage of quantum phenomena to make calculations, I want to try and keep our minds as un-bent as possible, so we will mostly run with the take-home conclusions of what these quantum phenomena make possible, rather than trying to understand how it happens.

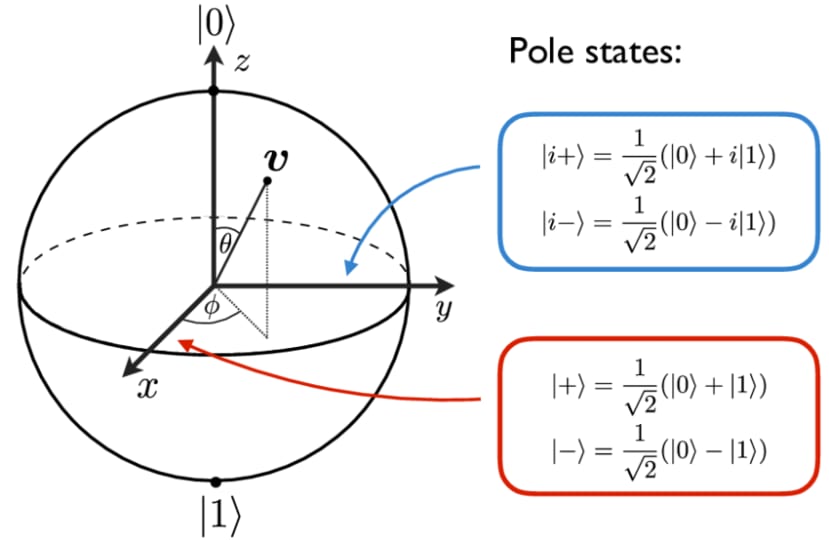

Where a conventional bit can only be in one of two states (on or off, true or false, 1 or 0), quantum mechanics allows an electron used as a quantum bit (or qubit) to be in a superposition state, which is a combination of both 1 and 0. This is depicted above in what is known as a Bloch sphere. Keeping it simple, the ‘latitude’ on the sphere represents the probability of whether the bit will fall out to a 1 or a 0 when it is measured.
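As a rough illustration of how ‘latitude’ turns into probability, the sketch below uses the standard textbook convention (an assumption here, since the article does not spell it out) that the north pole of the Bloch sphere is the 0 state, the south pole is the 1 state, and theta is the angle measured down from the north pole. Under that convention the chance of reading 0 is cos²(theta/2) and the chance of reading 1 is sin²(theta/2).

```python
import math


def measurement_probabilities(theta):
    """Return (P(0), P(1)) for a qubit at polar angle theta on the Bloch sphere.

    theta is in radians, measured from the north pole (the 0 state).
    """
    p_zero = math.cos(theta / 2) ** 2
    p_one = math.sin(theta / 2) ** 2
    return p_zero, p_one


for label, theta in [
    ("north pole", 0.0),
    ("equator", math.pi / 2),
    ("south pole", math.pi),
]:
    p_zero, p_one = measurement_probabilities(theta)
    print(f"{label:>10}: P(0) = {p_zero:.2f}, P(1) = {p_one:.2f}")
```

A qubit on the equator is the even-odds case: it is equally likely to come out as 0 or 1.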

The magic starts to appear when we add more bits. If we have two conventional bits, then each state contains two bits of information.

| Value (state) | Bit 1 | Bit 0 |
|---|---|---|
| 3 | 1 | 1 |
| 2 | 1 | 0 |
| 1 | 0 | 1 |
| 0 | 0 | 0 |

Quantum mechanics allows the superposition of these 4 states. This means that 2 qubits contain 4 bits of information. If we add another qubit, 3 qubits will hold 8 bits of information.

If we keep going you can probably already see that for n qubits we can hold 2^n bits of information. In other words, our ability to process information scales exponentially. Pretty useful when you want to work on exponentially scaling problems.

If you had a machine with 300 qubits you could contain 2^300 bits of information, which is more than the estimated number of particles in the observable universe.
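To get a feel for this scaling, the sketch below counts the basis states for a few register sizes and estimates the memory a conventional computer would need just to store one number per state. The 16 bytes per number (one complex value held as two 64-bit floats) and the figure of 10^80 particles in the universe are rough assumptions for illustration only.

```python
def basis_states(n_qubits):
    """Number of basis states an n-qubit register can be in superposition over."""
    return 2 ** n_qubits


# Assumption: one complex number per state, stored as two 64-bit floats.
BYTES_PER_AMPLITUDE = 16


def classical_bytes(n_qubits):
    """Memory needed to hold one complex number for every basis state."""
    return basis_states(n_qubits) * BYTES_PER_AMPLITUDE


for n in (2, 3, 10, 30, 50):
    print(f"{n:>2} qubits: {basis_states(n):,} states, "
          f"{classical_bytes(n):,} bytes to store classically")

# Assumption: a commonly quoted rough estimate for the observable universe.
PARTICLES_IN_UNIVERSE = 10 ** 80
print(basis_states(300) > PARTICLES_IN_UNIVERSE)
```

Under these assumptions, thirty qubits already need 16 GiB of conventional memory, and every extra qubit doubles the bill.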

In order to process the information, quantum logic operations are designed that use the quantum phenomenon of entanglement along with processes that are very similar to creating interference patterns. An application of phase information to the qubits in superposition will amplify probabilities that are in phase and reduce out of phase probabilities, just as waves in phase amplify and out of phase waves cancel out. When measured, the qubits in superposition will fall out to one of the base states of 1 or 0 depending on the strength of probability and all the other information is lost.
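Interference can be seen on a single qubit with nothing more than a little arithmetic. The sketch below is a toy calculation, not how real quantum hardware is programmed: it applies the Hadamard gate (a standard gate that creates an equal superposition) twice to a qubit that starts at 0. After the first application both outcomes are equally likely; after the second, the two paths leading to 1 have opposite signs and cancel, while the two paths leading to 0 reinforce each other.

```python
import math

H = 1 / math.sqrt(2)
HADAMARD = [[H, H], [H, -H]]


def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a 2-element amplitude vector."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]


def probabilities(state):
    """Probability of each outcome is the squared magnitude of its amplitude."""
    return [abs(amplitude) ** 2 for amplitude in state]


zero = [1.0, 0.0]                              # qubit starts as a definite 0
superposed = apply_gate(HADAMARD, zero)        # equal mix of 0 and 1
recombined = apply_gate(HADAMARD, superposed)  # paths to 1 cancel out

print([round(p, 3) for p in probabilities(superposed)])
print([round(p, 3) for p in probabilities(recombined)])
```

Quantum algorithms are built so that, by the time of measurement, wrong answers have cancelled in this way and right answers have been reinforced.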

It is important to realise that quantum computers are not a replacement for conventional computers. Indeed, conventional computers are used to present data to the quantum processor and retrieve results.

They are not universally faster either and are really a niche coprocessor for calculations that benefit from the parallelism that superposition affords. In fact, individual operations are likely to be slower on a quantum computer than on a conventional computer. The improvement is not in the speed of operation but in the number of operations required to get a result, which for suitable problems can be exponentially fewer.

Technologies

One of the take-aways from Shannon’s information theory is that “the message isn’t the medium, baby!”. Information isn’t what it is stored on. This holds true for quantum computing technology too and it turns out there’s more than one way to skin Schrödinger’s cat. In this section we will take a brief look at who is doing what in quantum computing and what the major pros and cons of each technology may be.

Superconducting Qubits

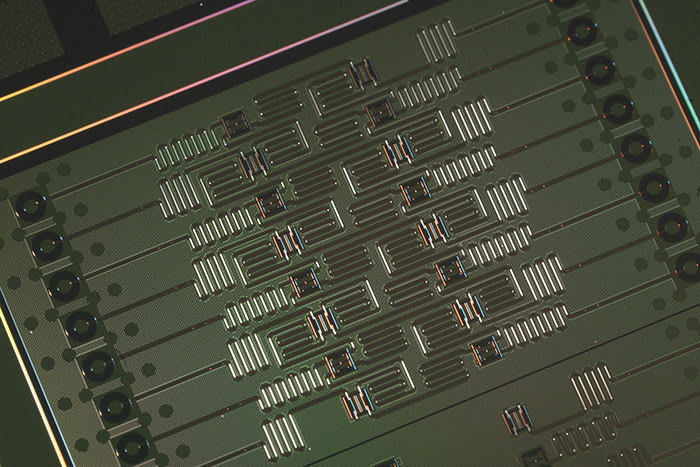

This is the most widely used technology and the most advanced in terms of development. It has the major advantage that the Josephson junctions and other features used in superconducting qubits can be microfabricated using existing integrated-circuit processing techniques, so scaling to larger numbers of qubits should become relatively straightforward.

The squiggly lines you can see on the chip are microwave coupling resonators that allow data to be input uncoupled, as microwave pulses.

The major downside is that the qubits need to be cooled to between 10 and 20 millikelvin, which is colder than deep space. Another limiting factor is that the quantum effects fade quite rapidly: the decoherence time is around 10 µs.

The big players with this technology are IBM, Google and Rigetti. In 2019, Google were the first to claim quantum supremacy with a 54-qubit processor called “Sycamore”, though this was disputed by IBM. I will leave you to make your own mind up about who is right.

If you’re hankering to try out quantum computing yourself, IBM’s quantum computers are hooked up to IBM’s cloud infrastructure. This isn’t a gimmick: some 60 or so scientific research papers have been published off the back of work done with these tools.

Photonic Qubits

Qubits in photonic quantum computers use another particle with quantum properties, the photon. In these devices, pulsed laser light is fed into microscopically small ring resonators (the circular structures in the picture) called squeezers, which induce a special quantum state of light called the squeezed state. This is a superposition state and is the qubit for this technology.

This light is fed by waveguides into a network of beam splitters and phase shifters where the squeezed photons interact and become entangled. These are the logic operations and they are electronically set up by the user. The output is then fed to photon detectors called transition edge sensors, which count up the photons in each output and give the user an array of integers that encodes the result.

The big plus of this technology is that it can, in principle, be operated at room temperature, though a current limitation is the use of superconducting photon counters, which require ultra-cold temperatures less than 1 degree above absolute zero. Quantum coherence lasts much longer because photons barely interact with their environment. Photonic devices can also integrate with existing fibre optic telecoms infrastructure, potentially connecting quantum computers together into powerful networks and maybe even a quantum Internet.

The downside is that the device hardware is physically larger with the laser guides and optical components, making it trickier to scale up.

Toronto-based Xanadu is a major player in this technology, with the first publicly available photonic quantum computing platform offering 8-, 12- and 24-qubit machines accessible over the cloud. In addition to its quantum cloud, Xanadu, which is part of IBM's quantum computing Q network, has made a variety of open-source tools widely available on GitHub.

Another big name is PsiQuantum, who are working to scale this technology to deliver an error corrected general purpose quantum computer with 1 million qubits, which is considered the benchmark for where quantum computing would be truly useful rather than just academically interesting.

Ion Traps

Trapped-ion quantum computers use chains of individual ions to hold quantum information. Starting with a neutral atom like ytterbium (Yb), an electron is removed with lasers as a part of the trapping process. This ionization leaves the atom with a positive electrical charge and only one valence electron. The ions are held by a linear ion trap that holds them precisely in 3D space so each ion can be manipulated and encoded using microwave signals and lasers.

A big benefit of this method is the length of coherence times, which can be a few minutes. The devices themselves are similar in size to supercooled quantum chips and also need to be cooled, but not quite to the same extreme: down to a few kelvin will suffice.

The biggest player in this field is Honeywell, who claimed in June 2020 to be running the best quantum computer in terms of the quantum volume metric, which was originally defined by IBM to account for the number of qubits, how connected they are, and how well they avoid generating errors rather than the intended outcome. Honeywell’s quantum volume was 64 using six qubits. Since then, Honeywell have achieved a benchmark of 512, whereas it was only this past summer that IBM reached Honeywell's earlier mark of 64, using a machine with 27 qubits but a higher rate of errors.
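It helps to know how the quantum volume figure is constructed. In IBM's definition, a quantum volume of 2^n means the machine reliably ran random test circuits that were n qubits wide and n layers deep. The helper below is a small sketch (the function name is made up for illustration) that converts a quoted quantum volume back into that circuit size.

```python
def circuit_size_for_quantum_volume(quantum_volume):
    """Return n such that quantum_volume == 2**n.

    n is the width (and depth) of the largest test circuit passed,
    not the total number of qubits on the chip.
    """
    n = quantum_volume.bit_length() - 1
    if quantum_volume < 1 or 2 ** n != quantum_volume:
        raise ValueError("quantum volume is expected to be a power of two")
    return n


for qv in (64, 512):
    print(f"Quantum volume {qv}: {circuit_size_for_quantum_volume(qv)}-qubit circuits")
```

This is why a 27-qubit machine and a six-qubit machine can post the same score of 64: what counts is the largest circuit that runs reliably, not the qubit count on the data sheet.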

There's also the other ion trap computing company, a startup called IonQ, which seems to have raised its qubit quality to be comparable to Honeywell's.

Wildcards

A couple of other technologies that may be showing promise are topological quantum computing and nitrogen vacancy.

Topological quantum computing is born out of the idea that if the qubits could be made from a configuration of electrons called Majorana zero-mode (MZM) quasiparticles, errors won't occur because the principles of topology, the mathematics of physical shape, prevent them. However, the research on this at TU Delft had a major setback this year.

Nitrogen vacancy uses the idea of replacing a carbon atom in a lattice with a nitrogen atom. Research has demonstrated that controlled entanglement can be achieved by applying magnetic fields to these nitrogen-vacancy centres.

Both of these fields have great potential and could become technologies to watch in the coming year.

Big Breakthroughs in 2021

Some of the things that caught my eye this year were:

This month, Quantinuum — a new quantum computing company created by the merger of software company Cambridge Quantum and Honeywell Quantum Solutions — announced the world's first commercial quantum computer based encryption key generator.

In November, IBM announced the arrival of Eagle, a 127-qubit device which more than doubled what had been achieved by either Google (54 qubits) or the University of Science and Technology of China (USTC) in Hefei (60 qubits). As well as showing IBM’s technical prowess, it showed they are sticking to their roadmap that has them producing a 1,121-qubit system by 2023.

At the end of November, QuEra Computing, a Boston startup led by Mikhail Lukin and Markus Greiner at Harvard and Vladan Vuletić and Dirk Englund at MIT, revealed a 256-qubit machine which uses arrays of neutral atoms that produce qubits with high coherence. The machine uses laser pulses to make the atoms interact, exciting them to an energy state known as the “Rydberg state” (described in 1888 by the Swedish physicist Johannes Rydberg) at which they can do quantum logic in a robust way with high fidelity.

In August, Maryland-based IonQ unveiled an evaporated glass trap technology for its ion trap quantum computing chips, which supports multiple chains of ion-based qubits. This has the potential to increase the number of reconfigurable ion chains that can be supported on a chip, taking qubit counts into triple digits.

Final Thoughts

There have been many more breakthroughs this year and if there were any that you feel deserve particular attention, please feel free to mention them in the comments below.

What is certain is that real progress is being made in this exciting field and with the developments seemingly accelerating at a dizzying pace, I am sure some truly jaw-dropping announcements will be coming our way next year.