Hands-on with OpenVMS x86

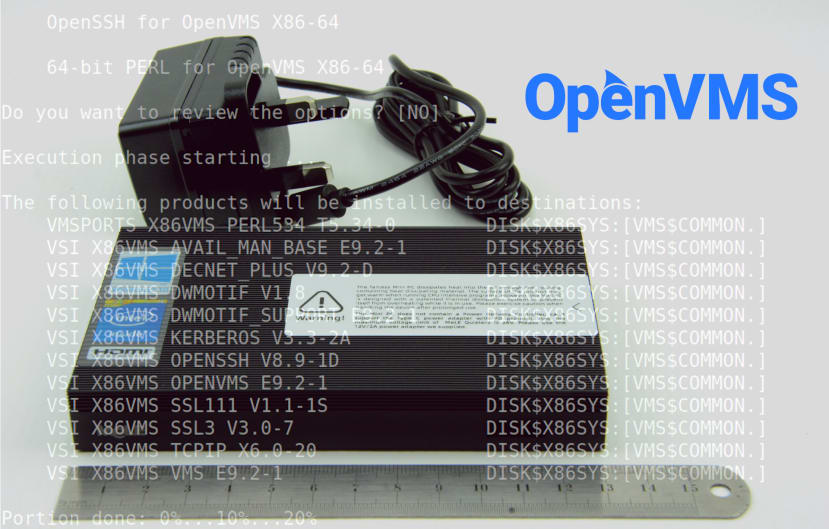

Installing the Intel port of the OpenVMS operating system in a virtual machine running on a Mele Quieter2 mini PC.

Thanks to VMS Software Inc. (VSI) having ported the venerable operating system to the Intel architecture and providing access to this via their Community License Program (CLP), it is now possible for enthusiasts to run OpenVMS inside a virtual machine (VM) on a modern PC. At this point in time, it’s not supported on bare metal Intel hardware. The reasoning is that data centre workloads are typically virtualised these days, and writing O/S drivers for a small set of virtualised hardware requires much less effort than supporting a selection of real hardware.

In this article, we’ll take a look at installing and configuring OpenVMS x86, running inside a virtual machine provided by KVM and Qemu, running in turn on Ubuntu on a Mele Quieter2 mini PC.

OpenVMS 101

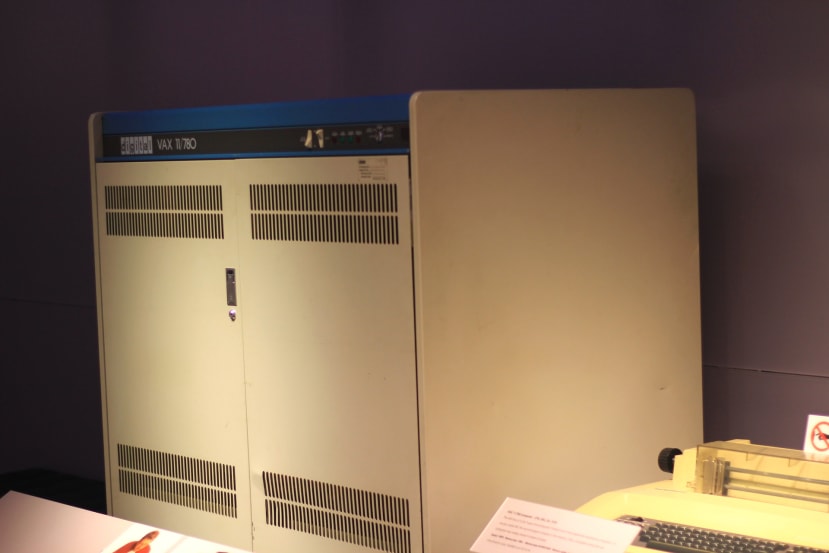

The first VAX, a model 11/780 with paper terminal to the right. Michael Hicks, CC BY 2.0.

OpenVMS started life as the native operating system for Digital Equipment Corporation’s (DEC) VAX minicomputers and was originally named VAX/VMS. The VAX, short for Virtual Address eXtension, was the successor to their incredibly successful PDP-11 minicomputer family and itself went on to prove extremely popular, remaining in production for 23 years and spanning over 100 models.

The 32-bit VAX was succeeded by the 64-bit DEC Alpha architecture and this naturally ran OpenVMS, along with UNIX as a second option — much like the VAX. Then when in turn Alpha came towards the end of the line, OpenVMS was ported to the Intel Itanium (IA64) architecture. IA64 was around for a number of years, but ultimately never took off in the way that was hoped and so OpenVMS needed to find a new hardware architecture, with 64-bit x86 being the obvious choice.

Along the way DEC was acquired by Compaq and then Compaq by Hewlett Packard (HP). HP Enterprise (HPE) were custodians of OpenVMS for a number of years and operated an OpenVMS Hobbyist program, which enabled enthusiasts to run the O/S on vintage/surplus hardware, along with simulators. Development and support of OpenVMS was then transitioned to VMS Software Inc. (VSI) around 10 years ago and at this time they announced plans for the x86-64 port. So here we are, 45 years after release of VMS v1.0 and it lives on, running on its fourth architecture!

So what’s so interesting about OpenVMS anyway?

Well, a whole series of articles could be dedicated to exploring its features, but in short, OpenVMS provides a secure environment with four distinct processor access modes, plus fine-grained user account permissions and quotas, and integrated security auditing. The O/S is notably user-friendly, with a comprehensive help system and commands which may be shortened for convenience (provided the result is unambiguous). Files are automatically versioned. Support for “volume shadowing” (disk mirroring) is integrated, as is clustering support, where VMS pretty much set the benchmark: it’s trivial to cluster systems and, once set up, disk and tape drives, batch processing queues and network addresses may all be instantly shared across the cluster.

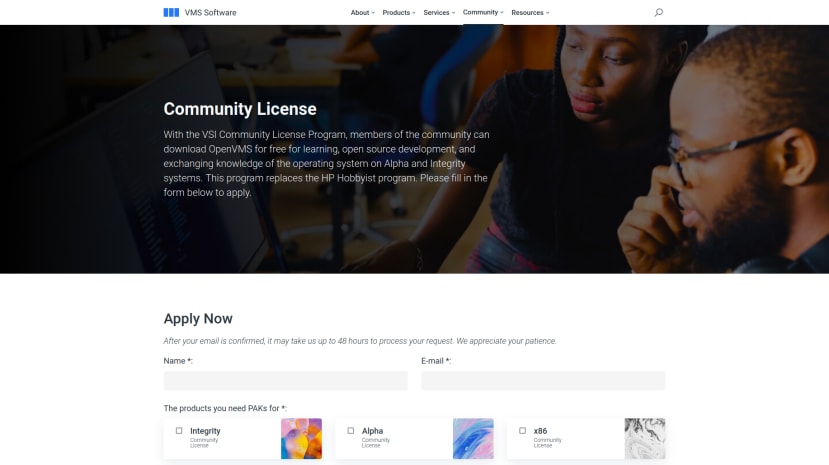

VSI Community License Program

The CLP picks up where HPE’s OpenVMS Hobbyist program left off, in providing enthusiasts with free time-limited licence keys for OpenVMS and key “layered products” such as the C compiler, for non-commercial use. VSI make these available for Alpha, Itanium and now x86-64 architectures.

At the time of writing, access to x86-64 installation media and licences was being rolled out to existing CLP members in stages, rather than being made immediately available to everyone. Hence some existing members, and likely all new members, may have to wait for x86-64 access. Once this has been provided it is then possible to download a DVD ISO file for OpenVMS, together with ZIP archives of layered products, plus the Product Authorization Keys (PAKs) required to activate software.

Next, let’s take a look at setting up a virtualisation environment upon which to run OpenVMS.

Qemu configuration

For details of the Ubuntu host operating system install and dependencies, see the previous article, Using the Mele Quieter2 with KVM and Qemu Virtualisation.

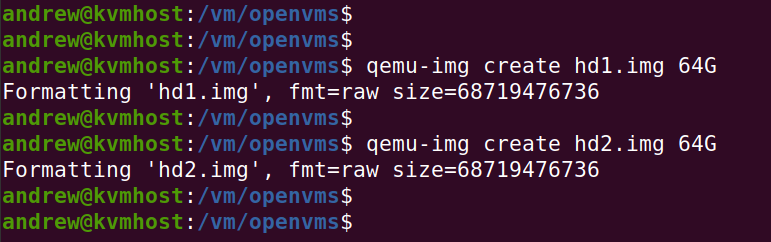

With Ubuntu Server, KVM and Qemu installed on the Quieter2, the next step was to create two disk images for our virtual machine. According to the OpenVMS x86-64 V9.2 Installation Guide these need to be at least 8GB in size and, with plenty of space available, we decided to make each of them 64GB.

$ mkdir /vm/openvms

$ cd /vm/openvms

$ qemu-img create hd1.img 64G

$ qemu-img create hd2.img 64G

The OpenVMS x86-64 Operating Environment v9.2 ISO file had been downloaded from the VSI portal and this was also copied to the /vm/openvms directory.

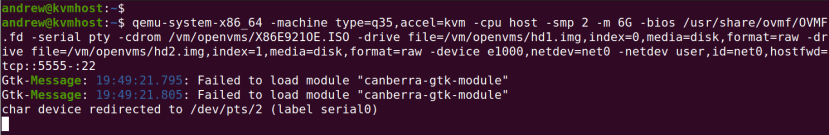

With our virtual HDDs created and an installation ISO in place to use as a virtual DVD-ROM, the command used to launch Qemu was:

$ qemu-system-x86_64 -machine type=q35,accel=kvm -cpu host -smp 2 -m 6G -bios /usr/share/ovmf/OVMF.fd -serial pty -cdrom /vm/openvms/X86E921OE.ISO -drive file=/vm/openvms/hd1.img,index=0,media=disk,format=raw -drive file=/vm/openvms/hd2.img,index=1,media=disk,format=raw -device e1000,netdev=net0 -netdev user,id=net0,hostfwd=tcp::5555-:22

This is based on the options previously used in the aforementioned article, Using the Mele Quieter2 with KVM and Qemu Virtualisation, with some minor differences:

- Two disk image files are mounted instead of one

- OpenVMS ISO is mounted instead of Windows 10

- The -serial pty option is used to create a virtual serial port

- User networking is configured with hostfwd=tcp::5555-:22

The serial port is required for the OpenVMS installer and once the system is fully up and running this will also become our operator console, designated OPA0: in OpenVMS parlance.

Meanwhile the addition of hostfwd=tcp::5555-:22 to the networking configuration means that connections to the host on 5555/tcp will be forwarded to 22/tcp — the SSH port — inside the VM.
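A command line of this length is easy to mistype, so one option is to wrap it in a small shell function that can be dry-run before launching. The following is only a sketch of that idea: the options are the same ones listed above, while the VM_DIR variable and the launch_openvms function name are our own illustrative choices and not anything provided by Qemu.

```shell
#!/bin/sh
# Sketch: wrap the Qemu invocation in a function so it can be dry-run first.
# VM_DIR and launch_openvms are illustrative names, not part of Qemu.
VM_DIR="${VM_DIR:-/vm/openvms}"

launch_openvms() {
    # Any arguments form a command prefix, e.g. "echo" for a dry run.
    # With no arguments the prefix is empty and Qemu itself is started.
    "$@" qemu-system-x86_64 \
        -machine type=q35,accel=kvm \
        -cpu host \
        -smp 2 \
        -m 6G \
        -bios /usr/share/ovmf/OVMF.fd \
        -serial pty \
        -cdrom "$VM_DIR/X86E921OE.ISO" \
        -drive "file=$VM_DIR/hd1.img,index=0,media=disk,format=raw" \
        -drive "file=$VM_DIR/hd2.img,index=1,media=disk,format=raw" \
        -device e1000,netdev=net0 \
        -netdev "user,id=net0,hostfwd=tcp::5555-:22"
}

# Dry run: print the command line instead of starting the VM.
launch_openvms echo
```

Calling launch_openvms with no arguments would start the VM for real. Once SSH is running inside OpenVMS, the forwarded port should then be reachable with something along the lines of ssh -p 5555 system@kvmhost.local, assuming the SYSTEM account and the host name used in this article.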

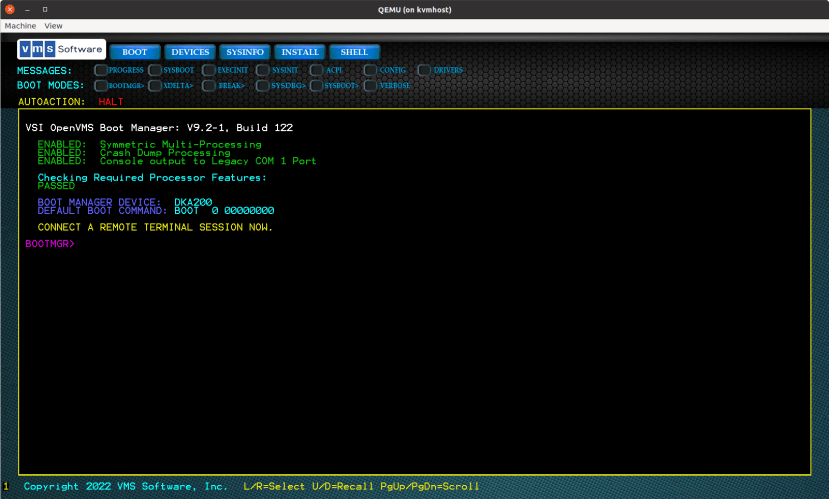

VSI Boot Manager

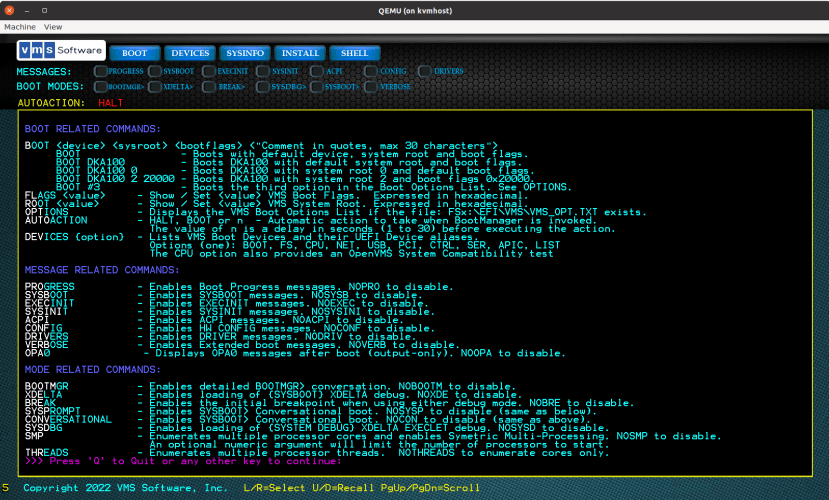

VAX machines had a firmware console in the form of a command line interface (CLI), where you could discover information about the hardware configuration, carry out tests, boot an O/S and so on. The Alpha machines which followed were similar. With Itanium and x86-64 systems we have UEFI, which can also provide a CLI to carry out various tasks, including running UEFI applications.

VSI provide a UEFI application for x86-64 named Boot Manager, “that knows very little about the underlying platform setup, but knows everything about booting VSI OpenVMS.” This provides us with a graphical interface and a CLI, where the latter can be used to execute commands such as DEVICES, which will list CD/DVD and HDDs which may be booted from. Other features include boot configuration, conversational boot (interactive startup), kernel dump configuration and debugger support. For further details, see the VSI OpenVMS x86-64 Boot Manager User Guide.

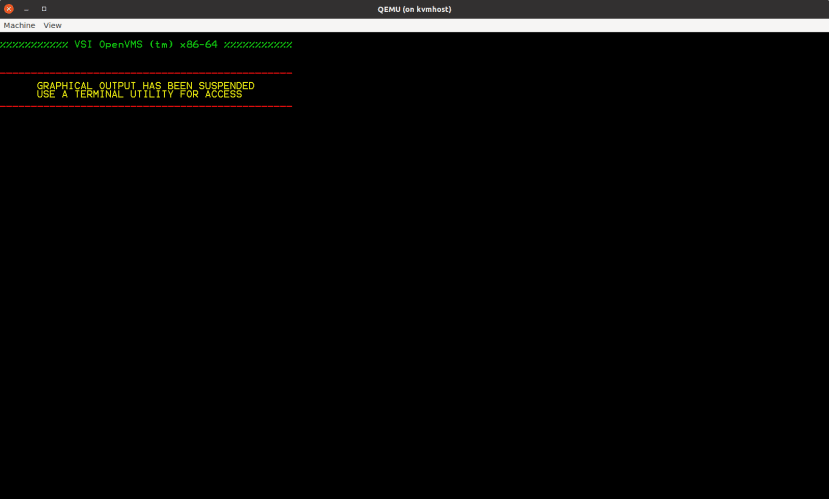

Boot Manager is accessed via the virtual machine VGA display and keyboard input. However, early on in the OpenVMS installation process and also once our installed system has booted, we can no longer use the VGA display and keyboard, and must instead switch to using the (virtual) serial port.

OpenVMS installation

First, we must remember to use the -Y option when connecting via SSH to the Quieter2, so that X11 graphical output is forwarded to our local machine:

$ ssh -Y kvmhost.local

Qemu was then launched with the options as previously described:

$ qemu-system-x86_64 -machine type=q35,accel=kvm -cpu host -smp 2 -m 6G -bios /usr/share/ovmf/OVMF.fd -serial pty -cdrom /vm/openvms/X86E921OE.ISO -drive file=/vm/openvms/hd1.img,index=0,media=disk,format=raw -drive file=/vm/openvms/hd2.img,index=1,media=disk,format=raw -device e1000,netdev=net0 -netdev user,id=net0,hostfwd=tcp::5555-:22

In our terminal window (SSH session) we saw that the virtual serial port had been redirected to device /dev/pts/1. At this point we could connect to this from another terminal window with:

$ screen /dev/pts/1 115200

Again, this was run on the VM host, which in our case is “kvmhost.local”.
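The pseudo-terminal number can change from one launch to the next, so rather than reading it off the screen each time, the device path could be picked out of Qemu's startup message. The sketch below assumes the message takes the form "char device redirected to /dev/pts/N (label serial0)", which is what we would expect from Qemu but may differ between versions; the function name is our own.

```shell
#!/bin/sh
# Extract the pseudo-terminal path from Qemu's startup message.
# Assumes a line of the form:
#   char device redirected to /dev/pts/1 (label serial0)
# pty_from_qemu_output is an illustrative name, not a Qemu tool.
pty_from_qemu_output() {
    sed -n 's|.*char device redirected to \(/dev/pts/[0-9][0-9]*\).*|\1|p' | head -n 1
}

# Example using a captured line rather than a live Qemu process:
echo 'char device redirected to /dev/pts/1 (label serial0)' | pty_from_qemu_output
```

If Qemu's output had been saved to a file, the result could then be handed to screen in the same way as the fixed /dev/pts/1 path used above.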

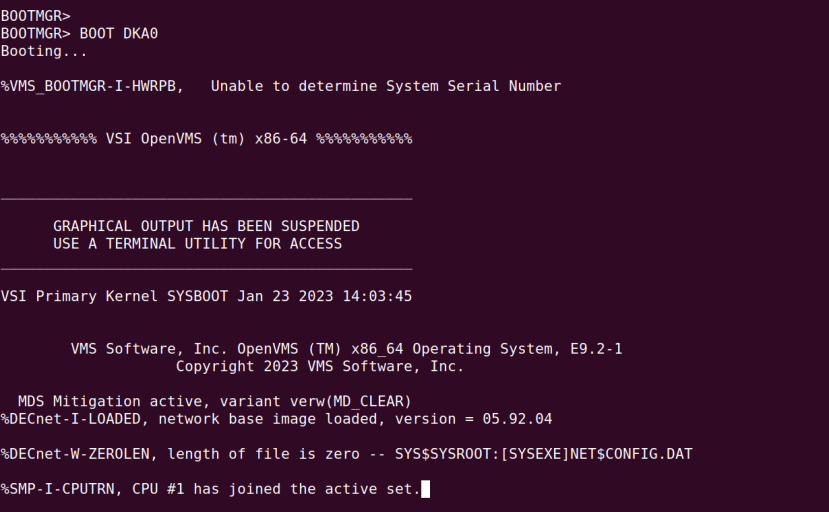

Following which we are greeted with the BOOTMGR> prompt. Anything we now type here will be mirrored in the Boot Manager screen displayed via the Qemu VGA display — and vice versa. Hence we could use either for issuing the BOOT command, but soon after we would have no choice but to use the virtual serial port for all subsequent interaction.

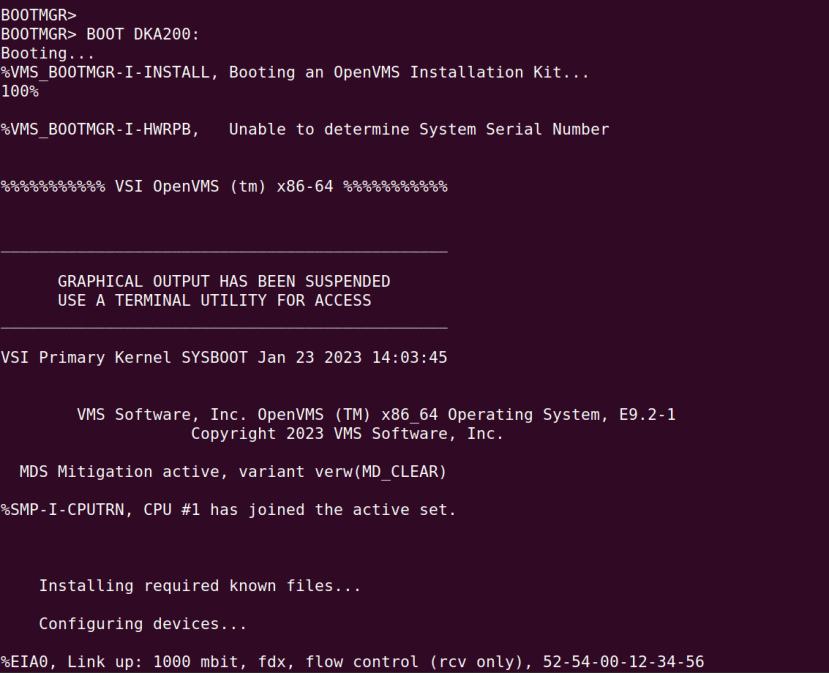

Our virtual DVD-ROM device had enumerated as DKA200: and so to boot from this we entered:

BOOTMGR> BOOT DKA200:

For the next steps we simply followed the VSI OpenVMS x86-64 V9.2 Installation Guide and somewhat abridged details are provided here.

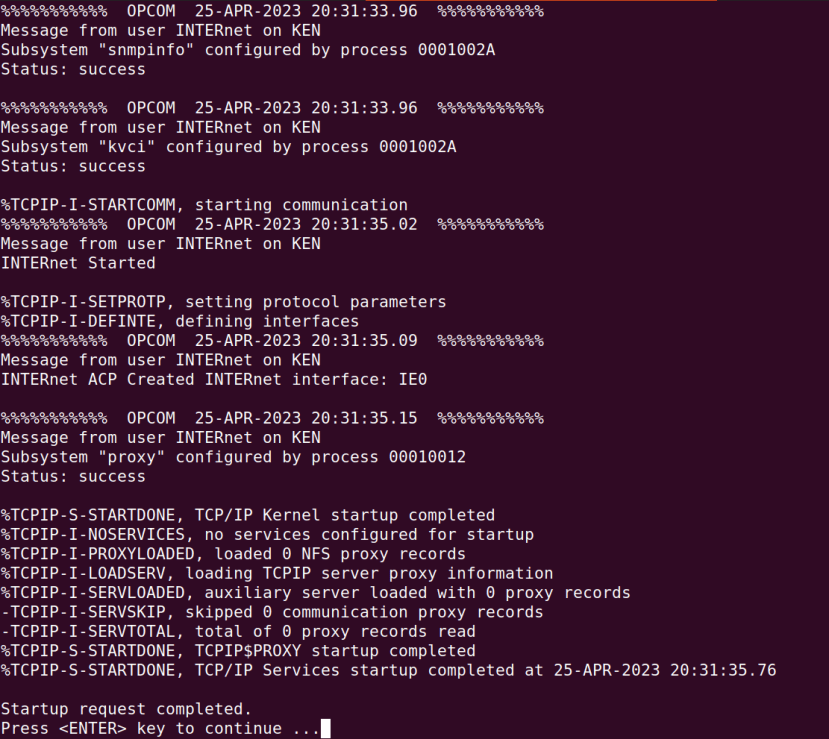

Here we can see that the installation kit has been booted and the notice output here via the serial port was duplicated on the Boot Manager graphical display, which could no longer be used.

Installation now proceeded back at the terminal window connected to the virtual serial port.

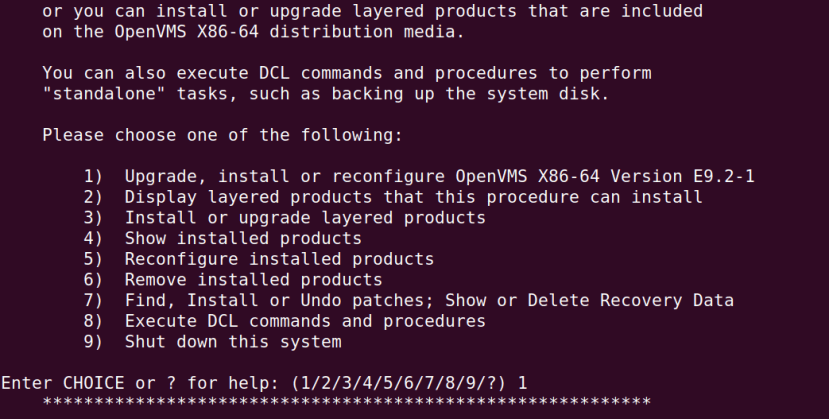

Option 1 was selected and when prompted we entered that we wanted to INITIALIZE the system rather than PRESERVE.

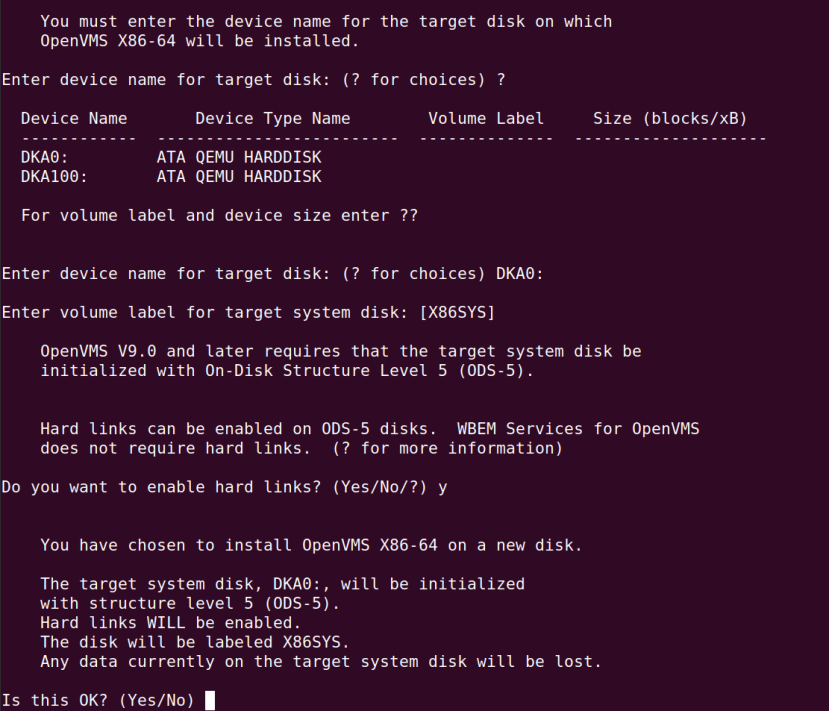

The first virtual 64G drive, DKA0:, was selected for installation, with the default volume label used and hard links enabled.

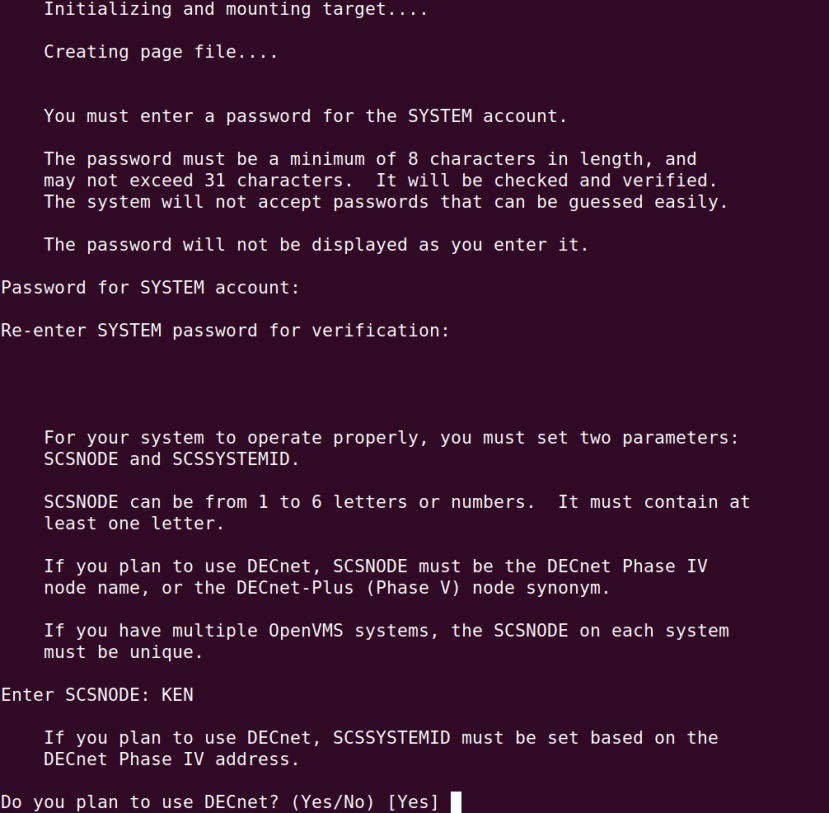

We had to set a password for the SYSTEM account, which is the OpenVMS equivalent of root with Linux or UNIX. We also had to set the SCSNODE parameter, which is essentially the hostname. Also if we wanted to use DECnet networking we would have to set an SCSSYSTEMID. We responded that we did plan to use DECnet and entered a Phase IV address of 1.11, which the installer then used to calculate and set the SCSSYSTEMID (the two are directly related).
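The relationship between the Phase IV address and SCSSYSTEMID is simple arithmetic: the area number multiplied by 1024, plus the node number. As a rough sketch of the calculation the installer carries out, here is the same sum in shell, with a function name of our own choosing and basic range checks for a Phase IV address (area 1 to 63, node 1 to 1023).

```shell
#!/bin/sh
# Compute SCSSYSTEMID from a DECnet Phase IV address given as "area.node".
# Formula: area * 1024 + node, with area 1-63 and node 1-1023.
# decnet_to_scssystemid is an illustrative name, not an OpenVMS utility.
decnet_to_scssystemid() {
    case "$1" in
        *.*) ;;
        *) echo "expected area.node, got: $1" >&2; return 1 ;;
    esac
    area=${1%%.*}
    node=${1#*.}
    # Reject empty parts, non-digits, zero and leading zeros.
    for part in "$area" "$node"; do
        case "$part" in
            ''|*[!0-9]*|0*) echo "invalid address: $1" >&2; return 1 ;;
        esac
    done
    if [ "$area" -gt 63 ] || [ "$node" -gt 1023 ]; then
        echo "out of range (area 1-63, node 1-1023): $1" >&2
        return 1
    fi
    echo $(( area * 1024 + node ))
}

# The address used in this article:
decnet_to_scssystemid 1.11   # prints 1035
```

So for our address of 1.11 the installer would be expected to arrive at an SCSSYSTEMID of 1035.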

DECnet can probably be ignored with most basic hobby use and in any case it can also be configured at a later stage. Alternatively, some random address can be set. For more details, see the VSI DECnet-Plus Planning Guide — and unless you’re an arcane networking technologies enthusiast, it’s perhaps best to ignore anything to do with DECnet Phase V a.k.a. DECnet/OSI.

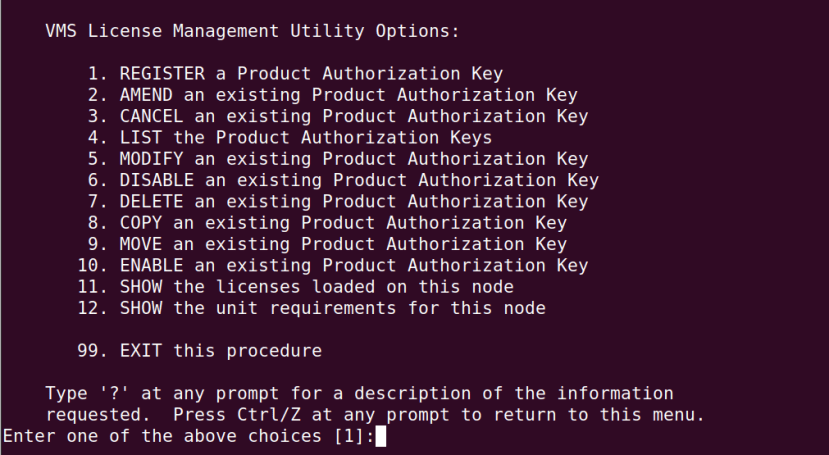

When prompted, the timezone and DST offset were entered. Following which we were presented with the opportunity to register licences. While we can boot OpenVMS without a Base Operating Environment licence loaded, restrictions include that the TCP/IP stack will not start. Hence it was decided to REGISTER and then ENABLE this licence, but not any of the others, as the complete licence file could later be copied across via SSH once the IP stack was up and running.

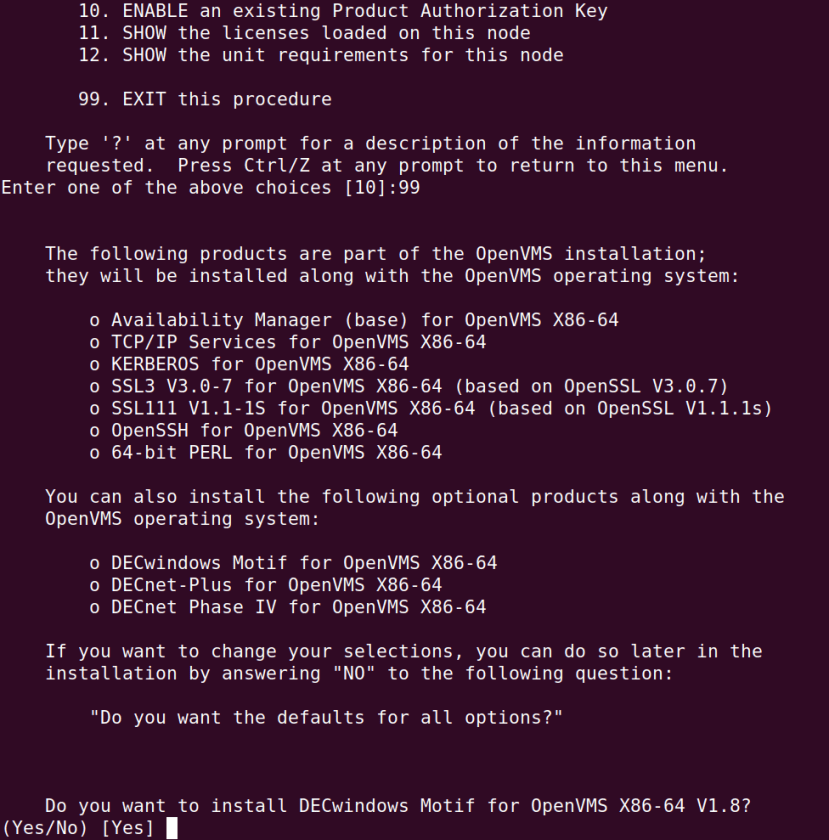

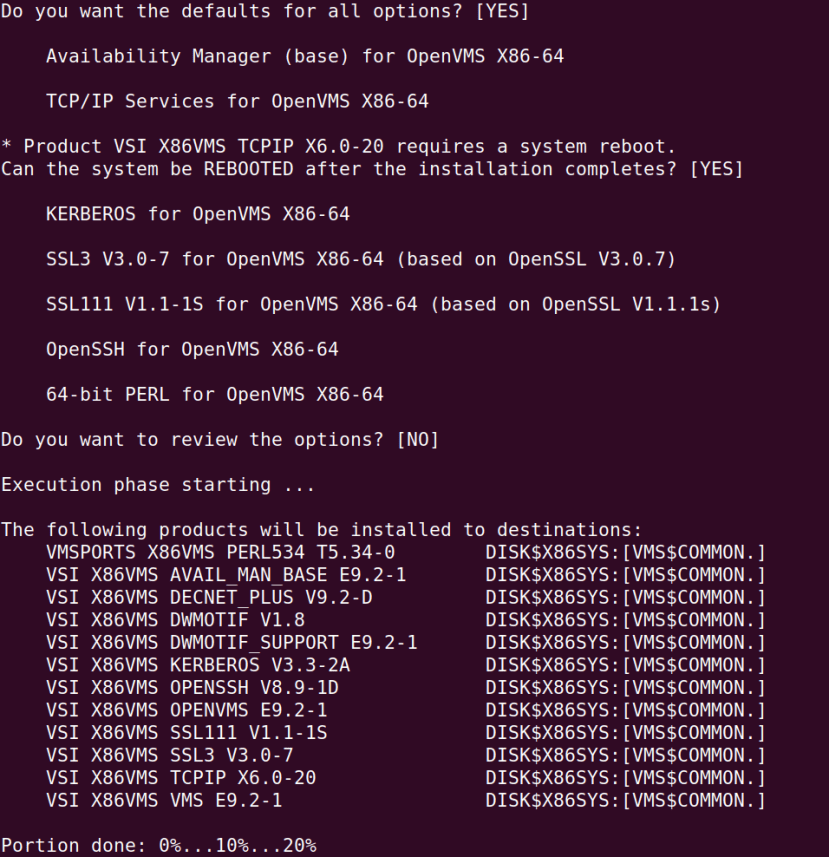

Next, we were asked if we would like to install optional software such as DECwindows Motif, for which we selected the defaults. Things such as DECwindows take up very little additional space and this is something that we are not short of with a 1TB NVMe SSD.

We confirmed that the system could be rebooted after installation.

This first reboot wasn’t fully automatic for some reason and so Qemu was quit and restarted, following which this time Boot Manager was used to boot DKA0:.

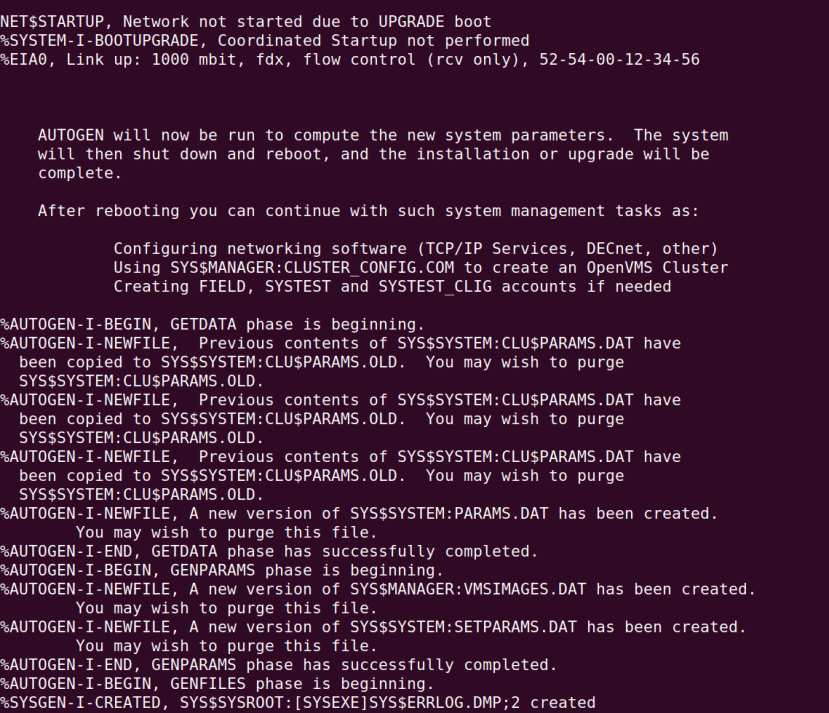

When a new installation is first booted the AUTOGEN procedure will be run, which creates the page and swap files (for virtual memory and process swapping), along with the file used for kernel dumps in the event of the system crashing. In addition, SYSGEN parameters are set.

SYSGEN and AUTOGEN are whole topics unto themselves and are used for setting system parameters and automating their tuning respectively. AUTOGEN might be run periodically and historical system performance feedback gathered, following which new SYSGEN parameters are calculated and set. This might also be done after a hardware upgrade or installing certain new software. SYSGEN parameters are similar to Linux kernel parameters and configure things such as filesystem caching.

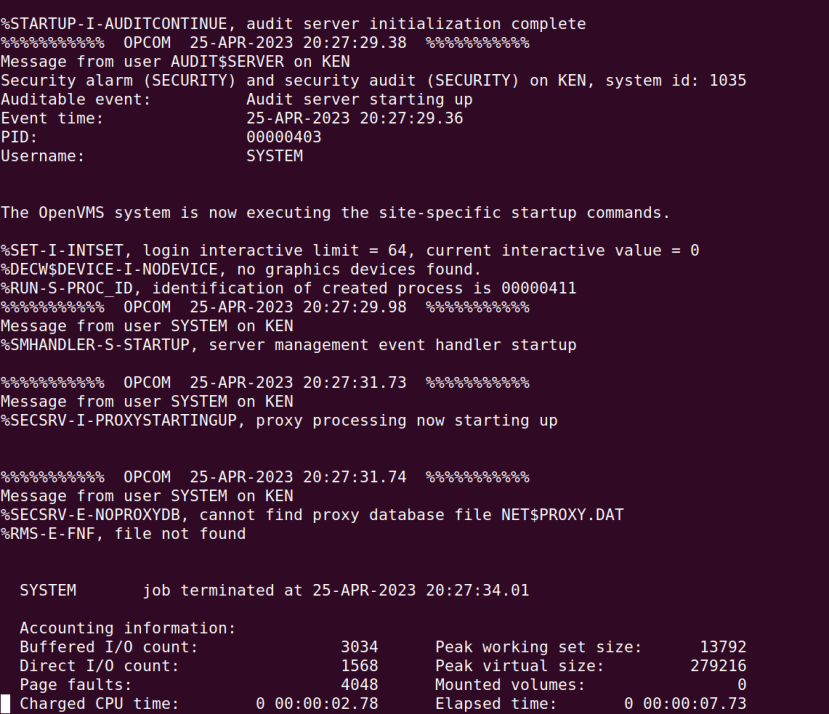

Another reboot later and we were greeted with the familiar block of text starting with “SYSTEM job terminated”, which means that our system is now fully booted and we may press ENTER to get an operator console login prompt.

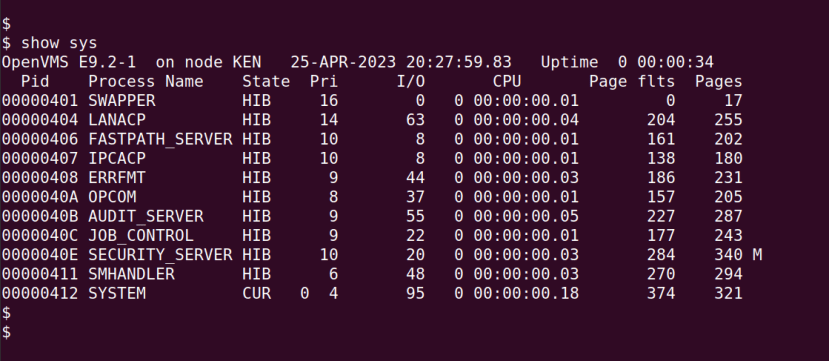

After logging in to the SYSTEM account using the password we had previously set, we could then enter SHOW SYS to see a list of running processes. This is actually SHOW SYSTEM abbreviated, since with OpenVMS we can abbreviate commands provided that the result is not ambiguous.

Next up we configured the TCP/IP stack and SSH, so that we could log in over the network rather than having to use the operator console, and so that we could copy all the licences across with scp.

TCP/IP

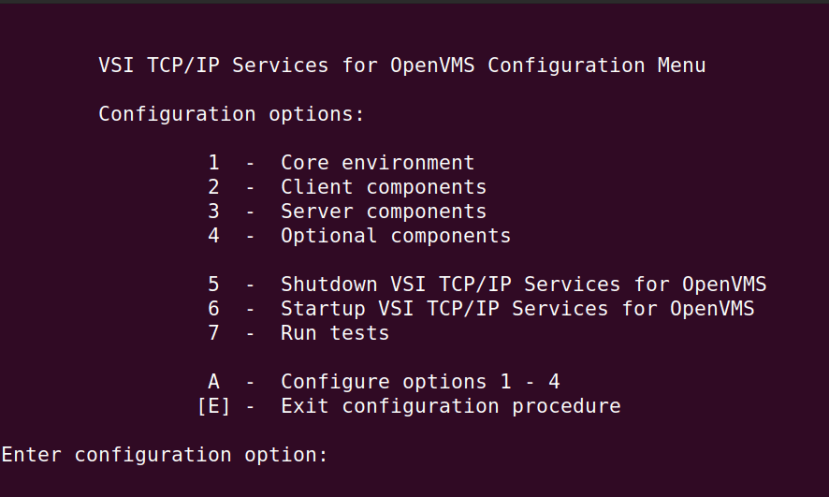

The OpenVMS equivalent of a UNIX shell script is a DIGITAL Command Language (DCL) command procedure. These files end with a .COM extension and to execute one we prefix its name with an @ symbol. To configure the TCP/IP stack we need to enter:

$ @tcpip$config

From option 1 we selected to configure the network interface with DHCP, and the default gateway and DNS server were set. The IP stack was then started via option 6. Options 2 and 3 are used to configure things such as FTP and NFS. For further details, see Configuring TCP/IP Services V6.0.

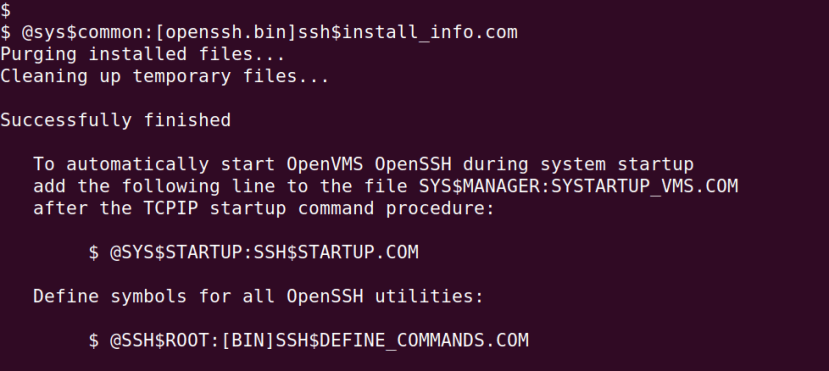

SSH is configured outside of the TCP/IP stack and if we followed the VSI provided documentation in strict order, this would have been configured earlier. In any case, the order is not critical and for details see Post-Installation Steps for OpenSSH. In short, we ran the DCL command files as instructed, which created the SSH server user and encryption key pairs etc.

Upon running the SSH installation command files we are instructed that we need to update the system startup command file, so that SSH is started at boot time.

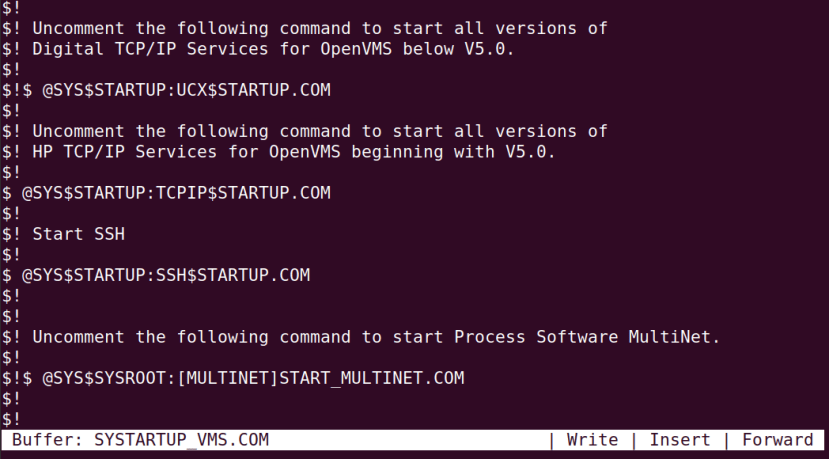

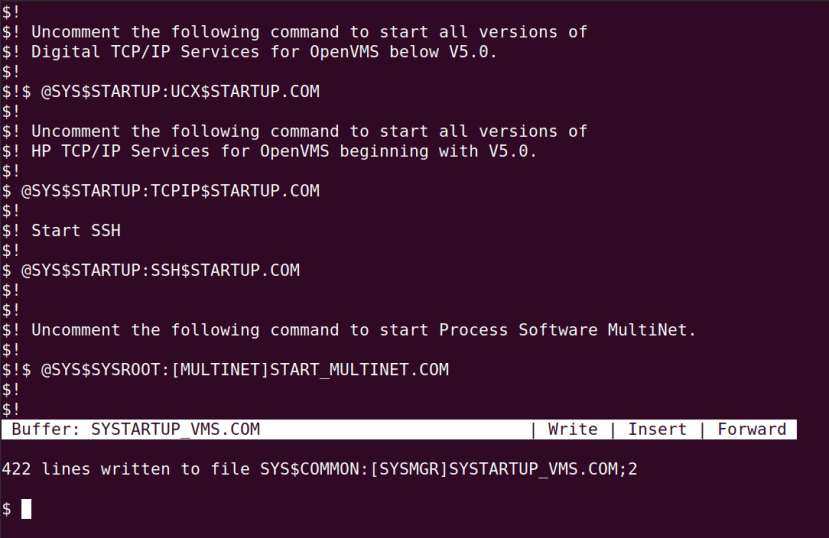

We also needed to uncomment the line which starts up the TCP/IP services. In the above screenshot we can see the Text Processing Utility (TPU) — a WYSIWYG text editor — with the system startup file loaded. Commented lines in a DCL file start with $! and the exclamation mark has been removed from the line which starts HP TCP/IP Services. We’ve also added a line to execute the SSH startup file as instructed, with a comment above this and additional padding for consistency.

To edit the system startup file we entered:

$ edit/tpu sys$manager:systartup_vms.com

After editing, CTRL-Z was pressed to save and exit. Here we can see that the file has the suffix ;2, as files in OpenVMS are versioned. If we ever wanted to revert to the previous version we would simply delete the latest version.

To save having to reboot, SSH was then started by entering:

$ @SYS$STARTUP:SSH$STARTUP.COM

Note that OpenVMS commands are not case-sensitive. In DCL scripts you tend to format commands in upper case as a matter of convention, whereas on the command line it doesn’t really matter. The above line was cut and pasted from the system startup command file.

Final words

An operating system such as OpenVMS should be shut down cleanly before the VM it is running inside is “powered off” and this can be done by executing:

$ @sys$system:shutdown

We then respond to the prompts.

In this article, we’ve taken a brief look at OpenVMS operating system history and how enthusiasts can now obtain licences and installation media, in order to get hands-on with the latest and greatest version of the O/S on PC hardware. We’ve looked at how Qemu with KVM may be configured on a Quieter2 mini PC to support running OpenVMS inside a virtual machine. We’ve covered VSI Boot Manager basics and OpenVMS installation, followed by TCP/IP and SSH configuration.

Along the way, we’ve barely scratched the surface of how OpenVMS is used and the features it provides, which may be something that we’ll explore in a little more detail in a future article.