Getting Started with the NVIDIA Jetson Nano 2GB

Setting up and an introduction to Jetson AI training and certification.

Back in January of this year I wrote about getting hands-on with the original Jetson Nano, covering things like CUDA and the Jetson software stack, before progressing to run through one of the provided examples. In a subsequent post I then took a first look at the new 2GB Jetson Nano, comparing this with the original 4GB model and also the Raspberry Pi 4 and Coral platform.

With the advent of the Jetson Nano 2GB, it’s important to note that we have not only an even more accessible CUDA platform, but also structured training and a certification path to accompany it. In this post we’ll cover getting the new board set up and running the latest examples, before taking a look at the training and certification.

Hardware setup

Computer vision is a key application for the Jetson Nano and AI in general. As such, it may come as little surprise that, in addition to the Jetson Nano Developer Kit, either the 4GB (199-9831) or 2GB (204-9968) model, a camera is required in order to complete the Jetson AI Fundamentals course. Either a Raspberry Pi Camera v2 (913-2664), which uses the CSI-2 interface, or a Logitech C270 USB webcam is recommended. A USB-C power supply is also required.

We used a USB keyboard and mouse, plus an HDMI monitor, for the initial software setup. Once this was completed we then had the option of connecting over the network, e.g. using SSH and forwarding our display (X11) to a remote workstation.
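As a minimal sketch of the SSH option, the following wraps the standard OpenSSH client with X11 forwarding enabled (-X). The user name and host name are placeholders rather than values from the setup described here, and jetson_x11 is simply a convenience function made up for illustration.

```shell
# Hypothetical defaults: replace with the user name and the host name
# (or IP address) chosen during initial setup.
JETSON_USER="${JETSON_USER:-nano}"
JETSON_HOST="${JETSON_HOST:-jetson-nano.local}"

# Open an SSH session with X11 forwarding (-X). Any extra arguments are
# passed through as a command to run on the Jetson Nano.
jetson_x11() {
    ssh -X "${JETSON_USER}@${JETSON_HOST}" "$@"
}
```

Calling jetson_x11 with no arguments gives an interactive shell. Graphical programs started from that shell should then open their windows on the workstation, provided that an X server is running there.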

Software setup

The Linux operating system for Jetson Nano is based on the Ubuntu distribution, with a number of pre-configured additions courtesy of the NVIDIA JetPack SDK for convenience. There are two versions of the Getting Started Guide provided, one for the original Jetson Nano 4GB and one for the 2GB. Each links to a corresponding SD card image download, with the image for the later 2GB kit tailored to its lower memory by using a more lightweight desktop environment.

Once we’ve downloaded the image file this can be written out to a Micro SD card, just as we would with a Raspbian O/S image for Raspberry Pi. On Linux this was done with:

$ sudo dd if=sd-blob.img of=/dev/sda bs=1M

Important: do not just copy the above verbatim and be certain to use the correct “of=” parameter that corresponds to your Micro SD card, otherwise loss of data may result!
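The warning above can be turned into a simple guard. The sketch below is a hypothetical helper (write_image is not part of any NVIDIA tooling) that refuses to run unless the image file exists and the target is a block device, and that asks for confirmation before calling dd. It assumes GNU dd and lsblk, as found on most Linux desktops.

```shell
# Write an image to a target device, refusing obviously wrong arguments.
# write_image is a hypothetical helper for illustration only.
write_image() {
    img="$1"
    dev="$2"
    if [ ! -f "$img" ]; then
        echo "image file not found: $img" >&2
        return 1
    fi
    if [ ! -b "$dev" ]; then
        echo "not a block device: $dev" >&2
        return 1
    fi
    # Show the device so its size and model can be checked by eye.
    lsblk -o NAME,SIZE,TYPE,MODEL "$dev"
    printf 'Overwrite %s with %s? [y/N] ' "$dev" "$img"
    read -r answer
    [ "$answer" = "y" ] || return 1
    # conv=fsync makes dd flush the data to the card before it exits.
    sudo dd if="$img" of="$dev" bs=1M conv=fsync status=progress
}
```

It would be called as write_image sd-blob.img /dev/sdX, where /dev/sdX stands in for whichever device the Micro SD card appears as on your system.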

After inserting the Micro SD card into the Jetson Nano module, power was applied and, following a short delay, a graphical configuration utility started. At this point we needed to accept the license agreement and carry out a number of basic tasks, such as configuring the keyboard and our first user account, and setting the partition size. Once this was completed we could reboot, log in and upgrade to make sure that we had the very latest versions of packages with:

$ sudo apt update

$ sudo apt dist-upgrade

The Getting Started Guide also includes instructions for completing initial setup in “headless mode”, which will be useful if you don’t have access to an HDMI monitor etc. This instead uses a USB connection, which provides a UART for a serial terminal session.

Note that the Jetson Nano Developer Kit User Guide has hardware info for the module plus carrier board, along with a summary of the JetPack SDK components and links for further details.

Next steps

The final section in the Getting Started Guide provides links to additional guides, tutorials and courses, along with community resources, such as a wiki and forum. At this point, if we wanted to hook up additional hardware, we could consult the aforementioned User Guide for the carrier board header pinouts and then connect peripherals via I2C, SPI and GPIO.

There are how-to guides for using Docker containers, setting up VNC — handy for accessing graphical applications from a remote Windows computer — and connecting Bluetooth audio devices. NVIDIA have invested heavily in not only optimising HPC software for AI and data science, but packaging this as Docker containers for ease of use; the NGC Catalog provides a hub for GPU-optimised software, which is integrated as a Docker registry and can be searched online.

In a previous post, Hands on with the NVIDIA Jetson Nano, I took a look at “Hello AI World”, the classic way to get started with Jetson Nano. This involves cloning a GitHub repository and running pre-trained neural network models for classifying images, locating objects etc. Example inference apps are provided, written in Python and C++, along with training examples that demonstrate transfer learning and re-training.

Back in January, when I wrote about the original Jetson Nano 4GB, there was also a course entitled Getting Started with AI on Jetson Nano. However, this was v1 of the course, which has now been replaced by v2. The new version forms the first part of the Jetson AI Fundamentals course, which in turn provides the foundations for the two NVIDIA certification tracks.

Jetson AI Fundamentals

Enrolling on the Jetson AI Fundamentals course is incredibly straightforward. An NVIDIA account is required and if we don’t already have one, we can sign up as part of the enrolment process. On the landing page for the course we are provided with details of the prerequisites and instructed to head over to the Course tab for Getting Started with AI on Jetson Nano.

On the Course tab we can find a link to a Welcome page which provides an outline and here we are told that we will learn how to:

- Set up your Jetson Nano Developer Kit and camera to run this course

- Collect varied data for image classification projects

- Train neural network models for classification

- Annotate image data for regression

- Train neural network models for regression to localize features

- Run inference on a live camera feed with your trained models

There are also some general notes on working through the course, which requires the use of two browser windows. The first of these is for the course pages, which provide a guided learning experience, while the second is used to provide a remote JupyterLab interface to the Jetson Nano.

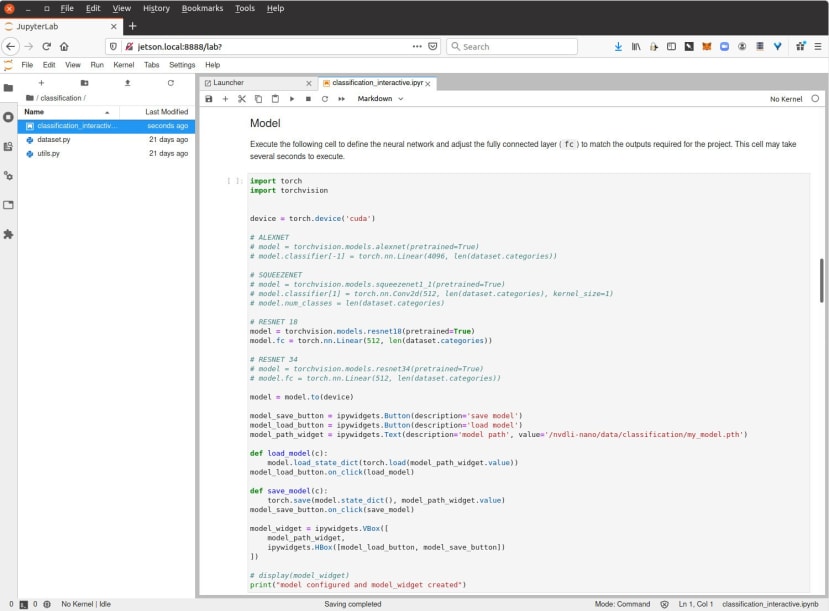

For those not familiar with Jupyter notebooks, a notebook is a JSON-formatted document made up of input/output cells, which can contain code, text, mathematics, plots and media. JupyterLab is a web-based interactive development environment for working with them. Together they provide a feature-rich environment for scientific computing, which makes it easy to create and share applications.

Following the Welcome page we have Setting Up Your Jetson Nano, which first takes us through basic hardware and software setup, as covered earlier. It then explains how to increase the swap size (using the SD card for “virtual RAM”), which may be necessary if we didn’t allocate enough during initial configuration, and which is particularly important with the 2GB board.
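As a rough idea of what increasing swap involves on an Ubuntu-based system, the sketch below creates and enables a swap file using standard Linux utilities. These are not necessarily the exact commands given in the course, and the setup_swap helper, the 4G size and the /mnt/swapfile path are all assumptions made for illustration.

```shell
# Create and enable a swap file. setup_swap is a hypothetical helper and
# the default size and path are assumptions, not values from the course.
setup_swap() {
    size="${1:-4G}"
    file="${2:-/mnt/swapfile}"
    if [ -e "$file" ]; then
        echo "refusing to overwrite existing file: $file" >&2
        return 1
    fi
    sudo fallocate -l "$size" "$file" &&  # reserve space on the SD card
    sudo chmod 600 "$file" &&             # readable by root only
    sudo mkswap "$file" &&                # format the file as swap
    sudo swapon "$file"                   # enable it immediately
}
```

After running setup_swap, free -h should show the additional swap. Swap enabled this way does not survive a reboot; the usual way to make it permanent is a line in /etc/fstab such as "/mnt/swapfile none swap sw 0 0".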

On the third page of Setting up... we are told that we will be using the Jetson Nano in headless mode, and we are taken through starting the Docker container that provides JupyterLab, together with the notebooks that we will be using in the course.

We can then load up JupyterLab in our second browser window, at which point we’ve completed setting up for the course.

Next, after Setting up..., we have the sections:

- Image Classification

- Image Regression

- Conclusion

- Feedback

The Image Classification part of the course involves building AI applications that can answer simple visual questions, such as “does my face appear happy or sad?” and “how many fingers am I holding up?”; that is to say, mapping input images to discrete outputs (classes). Image regression, meanwhile, maps input image pixels to continuous outputs. The examples cited are determining the X and Y coordinates of various features on a face (e.g. the nose) and following a line in robotics.

Let’s take a look at image classification.

More than simply examples

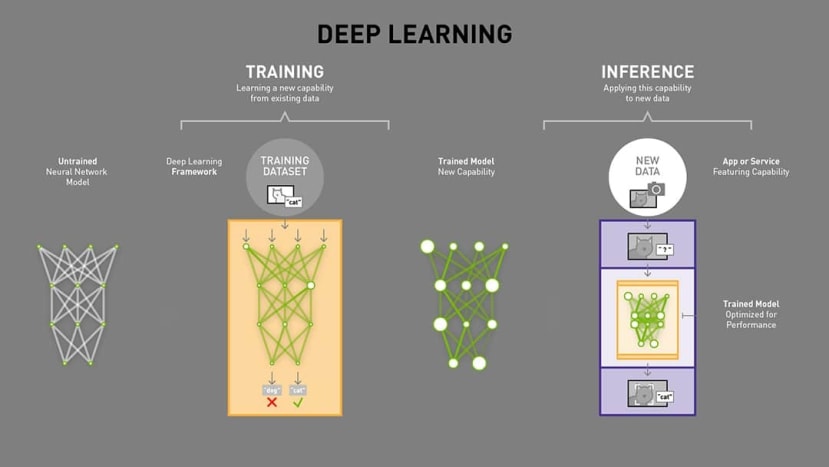

The course materials start right at the beginning, with a primer on AI and deep learning, showing a timeline that stretches back to the 1950s. The above graphic is also used to explain how deep learning models work with weights, training and finally, inference.

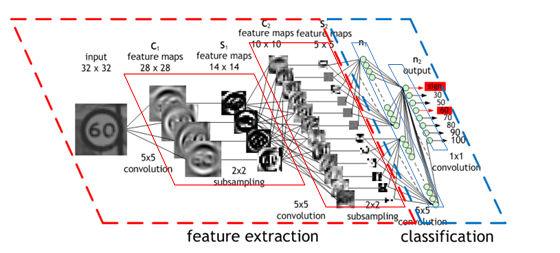

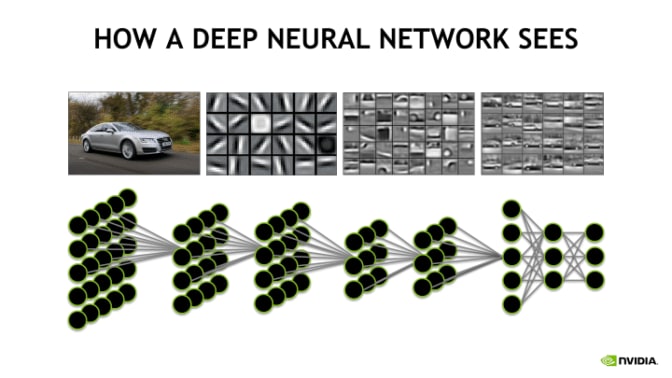

Convolutional neural networks are introduced next, with an explanation of concepts such as the input layer, feature extraction, and the output layer which performs classification. ResNet-18 and residual networks are then introduced, along with transfer learning. At each point these are illustrated with diagrams which help in understanding the concepts.

Of course, up until this point all we have done is read up on some theory, but this is about to change. With the basics out of the way, the course material explains that we will be modifying the last neural network layer (the fully connected layer) of the 18 that make up the ResNet-18 model. As provided, the model has been trained to recognise the 1,000 classes in the ImageNet 2012 classification dataset. However, we’ll be modifying it to have just 3 output classes. This will also mean having to train the network to recognise those 3 classes, but since it’s already been trained to recognise features common to most objects, the existing training can be reused.

At the top of the Image Classification section we are provided with an introduction in the form of a video.

The course notes instruct us to execute the code blocks in the notebook in turn, with an explanation being provided for each. This is done by selecting the cell, followed by Run → Run Selected Cells, or alternatively, SHIFT + ENTER.

Upon executing the first code block, the output of the ls command was displayed and execution ended. However, when running the second code block, which opened the camera, this did not return and a “kernel” continued running. The notebook warns us that we can only have one instance of the camera running at a time. We can shut down running kernels via the Kernel menu, or by right-clicking on the notebook and selecting Shut Down Kernel.

Next the notebook guides us through progressively building up our application: creating a task and widgets, loading the network model, carrying out training and evaluation etc. At the end of this we should have an application which can determine the meaning of two hand signals, thumbs-up and thumbs-down. Rather than cover each of these steps in detail, I’d encourage those interested in finding out more to get hands-on themselves!

Note that in addition to the Getting Started with AI on Jetson Nano course materials, there are two optional parts to Jetson AI Fundamentals. These are:

- JetBot. Building your own Jetson Nano-powered robot, configuring the software and using deep learning for collision avoidance and road following.

- Hello AI World. A series of tutorials that were introduced in the post Hands on with the NVIDIA Jetson Nano.

Certification

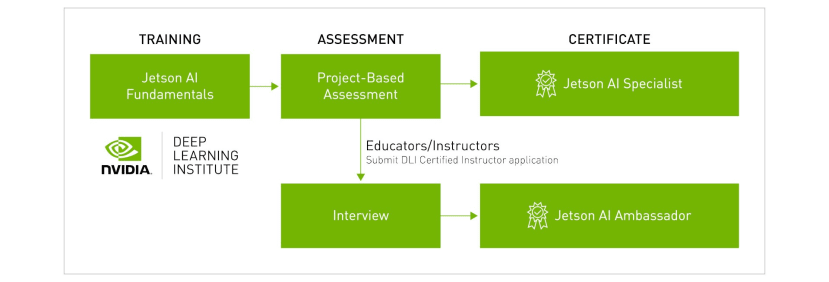

The Jetson AI Fundamentals course provides the foundations for Jetson AI Certification. Once this has been completed, the next step in becoming a Jetson AI Specialist is to complete a project that demonstrates an understanding of the material covered. Suggested examples include “collect your own dataset and train a new DNN model for a specific application, add a new autonomous mode to JetBot, or create a smart home / IoT device using AI.”

The assessment criteria are stated as being:

- AI (5 points) - The project uses deep learning, machine learning, and/or computer vision in a meaningful way, and demonstrates a fundamental understanding of creating applications with AI. Factors include the effectiveness, technical complexity, and performance of your AI solution on Jetson.

- Impact / Originality (5 points) - The concept of your project is novel and applies AI to solve or address challenges or issues faced by yourself or society. Also, your ideas and work are either original or derivative in a significant way.

- Reproducibility (5 points) - Any plans, code, and resources needed for someone else to build and use the project are included in the repository and are easy to follow.

- Presentation and Documentation (5 points) - The video effectively demonstrates and explains various aspects of the project, and there exists a clear, complete readme in the repository that documents any steps needed to build/run the project along with diagrams and images. Note that educators should have an oral presentation component to their video to highlight their teaching abilities.

Jetson AI Ambassador certification is meanwhile targeted at educators and instructors, with an additional interview step involved and some teaching/training experience a requirement.

Final thoughts

JetPack SDK and the turnkey images for Jetson Nano make it possible to get up and running in no time at all, with a pre-configured software stack that enables CUDA-accelerated compute using a selection of popular frameworks. This convenience is further built upon via a catalogue of NVIDIA-provided Docker containers, including one which is used to support Jetson AI training and certification. The use of JupyterLab in that container provides a feature-rich and easy-to-use environment.