Do I need a Multi-core Microcontroller?

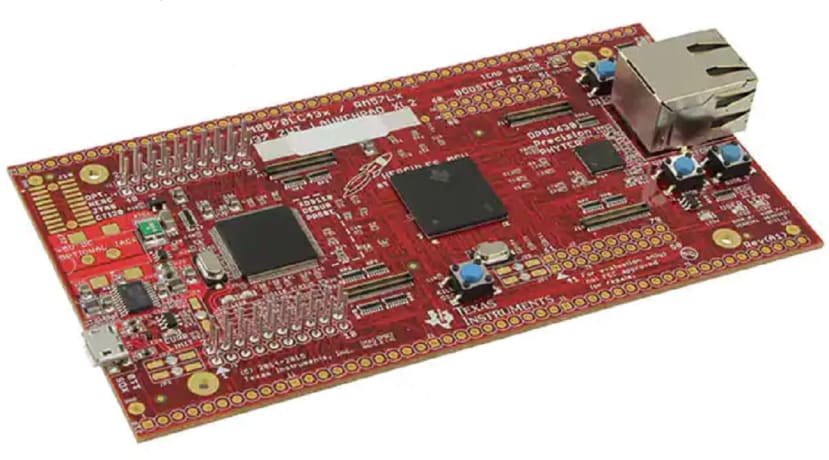

The Texas Instruments Hercules RM57Lx LaunchPad board features a dual-core ARM Cortex-R5 RM57L843 microcontroller chip designed for high-reliability applications. The two cores run the same code in offset lockstep, providing protection from transient errors and hardware failures.

Microcontroller chips are the workhorses of embedded computing. Compared to the thoroughbred racehorses of the industry (microprocessors, with their multi-GHz clocks) they are slow, self-contained, and loaded down with memory and peripheral interfaces. But the old single-core chips are struggling to meet the increasing demands of modern applications such as real-time digital signal processing and AI inferencing. OK, why not take one of these fast microprocessors and... No, don’t even think about it: the average MPU contains interfaces for very fast external program and data memory, a memory-management unit (MMU), perhaps a graphics processing unit (GPU), and not much else. No GPIO. No ADCs. No PWM. Limited serial communication. So, what are the alternatives?

Get a bigger microcontroller

For decades the industry has been dominated by 8-bit MCUs like the Intel 8051 in its various forms, the Microchip PIC16 and more recently the Atmel ATmega328. These devices are still adequate for many real-time control applications, such as motor speed control; even relatively sophisticated algorithms for PID control can be run, provided the maximum shaft speed is not too high. And yes, 8-bit resolution is frequently all that’s necessary for digital feedback control of electromechanical systems.

But that isn’t always the case. Once inexpensive desktop PCs arrived with GHz clock speeds, 32-bit cores and floating-point maths hardware, design engineers found they could run complex signal-processing code, if not in real-time, at least fast enough to recognise future possibilities. Take, for example, the Discrete Fourier Transform (DFT), which converts a sampled signal in the time domain into a sampled spectrum in the frequency domain. In other words, it finds the frequency components that make up a sampled arbitrary waveform. It involves an awful lot of maths operations and for a long time was only possible on a powerful mainframe, and even then not in real-time. Some re-arrangement of the DFT maths yielded the famous Fast Fourier Transform (FFT), which now enables modern 16- and 32-bit microcontrollers to provide a spectral analysis of a continuous analogue waveform, albeit with a limited bandwidth. I have a 256-point complex FFT in Forth language code that runs on my FORTHdsPIC computer in 50ms. That means it can provide a real-time spectrum of a sampled signal which contains frequency components up to a maximum of 10Hz. Not that useful perhaps, but there are a few ways to speed things up:

- Re-write the FFT program in assembly language. It’ll probably take more time to debug but should yield a substantial speed increase.

- Make use of the specialist DSP hardware and instruction set available on the dsPIC33 chip. I haven’t so far incorporated the DSP functions into FORTHdsPIC because the MCU’s single-core structure would require extra code overhead and result in little gain. I said ‘so far’, because a solution may be at hand: a dual-core dsPIC chip (see below).

- Move to one of the many MCU chips with a 32-bit ARM Cortex-M core. The Cortex-M4 has a suite of DSP instructions, while the M4F also includes a floating-point unit (FPU). Don’t get carried away with floating-point arithmetic though. Even with a hardware FPU available, fixed-point may yield faster code.
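To show what the fixed-point option in the last bullet looks like in practice, here is a minimal sketch in portable C. It assumes the common Q15 format (a signed 16-bit integer representing value/32768); the type and function names are my own for illustration and do not come from any vendor library. On a core with DSP instructions the compiler, or a vendor library, can often map this multiply-accumulate pattern onto single-cycle hardware.

```c
#include <stdint.h>

/* Q15 (an assumed format): int16_t holding value / 32768, range [-1, 1). */
typedef int16_t q15_t;

/* Multiply two Q15 numbers with rounding and saturation.
   Note: right-shifting a negative value is implementation-defined in C;
   mainstream compilers use an arithmetic shift, which is assumed here. */
static q15_t q15_mul(q15_t a, q15_t b)
{
    int32_t p = (int32_t)a * (int32_t)b;   /* Q30 product */
    p = (p + (1 << 14)) >> 15;             /* round and return to Q15 */
    if (p > INT16_MAX) {
        p = INT16_MAX;                     /* only -1 * -1 can overflow */
    }
    return (q15_t)p;
}

/* Dot product of two Q15 vectors: the core operation of filters and FFTs.
   A wide accumulator keeps full precision until the final scaling step. */
static q15_t q15_dot(const q15_t *x, const q15_t *h, int n)
{
    int64_t acc = 0;
    for (int i = 0; i < n; i++) {
        acc += (int32_t)x[i] * (int32_t)h[i];
    }
    acc = (acc + (1 << 14)) >> 15;
    if (acc > INT16_MAX) acc = INT16_MAX;
    if (acc < INT16_MIN) acc = INT16_MIN;
    return (q15_t)acc;
}
```

Here 0.5 is stored as 16384, so `q15_mul(16384, 16384)` gives 8192, which is 0.25. No floating-point hardware is involved at any point.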

But why would you want to use powerful functions like the FFT in your embedded code? As I said before, it opens up new possibilities. For example, spectral analysis of the sound a machine makes can reveal signs of future failure, even enabling automatic mitigation by reducing its speed or increasing lubrication.
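As a rough illustration of that kind of spectral monitoring, the sketch below finds the strongest frequency bin in a block of samples using a plain DFT rather than an FFT, so it is simple but slow. Everything here is an assumption for illustration: the function names are hypothetical, and the caller supplies a sine lookup table, as is often done on MCUs to avoid run-time trigonometry.

```c
#include <stddef.h>

/*
 * Naive O(n^2) DFT of a real signal; returns the bin (1..n/2) holding the
 * most energy, or 0 if the block is silent apart from any DC offset.
 * sin_tab[j] must hold sin(2*pi*j/n) for j = 0..n-1, and n must be a
 * multiple of 4 so that cos(2*pi*j/n) == sin_tab[(j + n/4) % n].
 */
static size_t dft_peak_bin(const float *x, size_t n, const float *sin_tab)
{
    size_t best_k = 0;
    float best_power = 0.0f;

    for (size_t k = 1; k <= n / 2; k++) {
        float re = 0.0f;
        float im = 0.0f;
        for (size_t i = 0; i < n; i++) {
            size_t j = (k * i) % n;                 /* wrapped phase index */
            re += x[i] * sin_tab[(j + n / 4) % n];  /* cosine term */
            im -= x[i] * sin_tab[j];                /* sine term */
        }
        float power = re * re + im * im;
        if (power > best_power) {
            best_power = power;
            best_k = k;
        }
    }
    return best_k;
}

/* Convert a bin index to a frequency in Hz for sample rate fs_hz. */
static float bin_to_hz(size_t k, size_t n, float fs_hz)
{
    return (float)k * fs_hz / (float)n;
}
```

A condition monitor might log the peak frequency over time and raise a flag when it drifts away from the value recorded for a healthy machine.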

Real-Time Operating Systems for Multi-Tasking

On the other hand, if the requirement is to handle a number of relatively small, semi-autonomous real-time tasks, then a powerful single-core MCU running a real-time operating system (RTOS) may suffice. A general-purpose OS like Linux or Windows provides the illusion of multiple user programs like word-processors or Internet browsers running on a PC at the same time. In fact, they are ‘time-sliced’ by a scheduler which selects the task to run at any one time. The OS scheduler bases its decision on what to do next on the need to ensure the user sees apparently seamless and simultaneous execution of multiple tasks. The OS user interface is optimised to suit incredibly slow and sporadic interaction with a human, so precise task-switching times are hardly a priority.

The snag is that precise or deterministic timing is often very important in real-time embedded systems. It’s hard to imagine any digital control system that won’t require inputs to be sampled and the data processed at precise intervals. And that’s where an RTOS comes in. Task switching can be made much more predictable with very little code overhead, relative to a PC OS that is. The small size of an RTOS makes it possible to run one on MCUs with fairly modest amounts of memory available. The downside is that installing an RTOS is not a ‘plug and play’ exercise: a lot of coding effort will be needed to make it work reliably with the tasks to be performed. Fortunately, a very popular RTOS ported to many platforms is available for free with the unimaginative name of FreeRTOS.
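The idea of releasing tasks at precise intervals can be sketched without any RTOS at all. The following is a minimal cooperative scheduler driven by a timer tick. It is not FreeRTOS code and all the names are hypothetical; a real RTOS adds pre-emption, priorities and inter-task communication on top of this basic principle.

```c
#include <stddef.h>
#include <stdint.h>

typedef void (*task_fn)(void);

typedef struct {
    task_fn  run;       /* task body: must return well within one tick */
    uint32_t period;    /* ticks between releases */
    uint32_t next_due;  /* tick count at which the task next runs */
} task_t;

/* Call once per timer tick with the current tick count. */
static void scheduler_tick(task_t *tasks, size_t count, uint32_t now)
{
    for (size_t i = 0; i < count; i++) {
        /* The signed difference stays correct when the tick counter wraps. */
        if ((int32_t)(now - tasks[i].next_due) >= 0) {
            tasks[i].run();
            /* Step from the previous deadline, not from 'now', so that a
               late release does not push every later release back too. */
            tasks[i].next_due += tasks[i].period;
        }
    }
}

/* Two stand-in tasks that only count their own releases. */
static unsigned sample_runs;
static unsigned report_runs;
static void sample_task(void) { sample_runs++; }
static void report_task(void) { report_runs++; }
```

With a 1ms tick, a table entry of `{ sample_task, 10, 0 }` releases the sampling task every 10ms. FreeRTOS provides the same fixed-period behaviour for a task through its `vTaskDelayUntil()` call.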

Multi-Cores for Multi-Tasking

After having looked at the alternatives, let’s see what these ‘new-fangled’ dual-core MCUs can offer.

Spreading the Load

At a basic level, semi-independent tasks can be allocated their own core to run on. As an example, a remote automatic weather station which sends a report on data collected over the last hour via a wireless link could operate with one core logging the data while the other handles the transmission. Both cores would spend most of their time powered down. Core 1 would be woken up, say, every minute by a timer to take measurements and store them. That could mean it’s only active for less than 1% of the time. Core 2 gets woken by Core 1 on the hour and takes no more than a few seconds to send the logged data out on a long-range, low-data-rate LoRa network. The second core is awake for an even smaller fraction of the time, resulting in very low average power consumption. Surprisingly, adding cores may lead to less power being consumed!
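The power argument comes down to simple duty-cycle arithmetic, sketched below. The function name is hypothetical and any current figures fed into it are placeholders to be replaced with datasheet values for a real chip.

```c
/*
 * Average current of a core that sleeps except for one active burst per
 * period. Currents are in mA and times in seconds; the result is in mA.
 */
static double average_current_ma(double active_ma, double sleep_ma,
                                 double active_s, double period_s)
{
    double duty = active_s / period_s;   /* fraction of time awake */
    return duty * active_ma + (1.0 - duty) * sleep_ma;
}
```

For example, assuming a core that draws 10mA for 0.5s in every 60s and 0.01mA while asleep, `average_current_ma(10.0, 0.01, 0.5, 60.0)` works out at roughly 0.093mA, about a hundredth of the active figure.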

Many dual-core MCUs are designed for master-slave operation where the master is a low-power unit such as an ARM Cortex-M0+ and the slave is a much more powerful core such as the Cortex-M4. In this case, the master handles program ‘administration’, firing up the slave only to perform complex mathematical processing such as an FFT or AI inferencing.

Parallel Development

One advantage often touted for using multi-core devices is that separate teams can develop and debug the code for each core in isolation, assuming of course that the interface between the cores has been agreed by all the teams in advance.
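What might such an agreed interface look like? One possibility is a shared header defining a message format and a one-slot mailbox, as in the sketch below. This is a generic illustration using C11 atomics with hypothetical names; real dual-core MCUs usually provide their own hardware mailbox or message RAM, and that should be used where available. The sketch assumes exactly one sending core and one receiving core.

```c
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

/* Message layout agreed by both teams (fields are illustrative). */
typedef struct {
    uint16_t command;      /* what the receiving core should do */
    uint16_t length;       /* number of valid payload words */
    int32_t  payload[8];
} core_msg_t;

/* One-slot mailbox placed in memory that both cores can access. */
typedef struct {
    core_msg_t  msg;
    atomic_bool full;      /* true while a message is waiting to be read */
} mailbox_t;

/* Sending core: returns false if the previous message is still unread. */
static bool mailbox_send(mailbox_t *mb, const core_msg_t *m)
{
    if (atomic_load_explicit(&mb->full, memory_order_acquire)) {
        return false;
    }
    mb->msg = *m;
    /* Release ordering publishes the message before the flag is seen. */
    atomic_store_explicit(&mb->full, true, memory_order_release);
    return true;
}

/* Receiving core: returns false if no message is waiting. */
static bool mailbox_receive(mailbox_t *mb, core_msg_t *out)
{
    if (!atomic_load_explicit(&mb->full, memory_order_acquire)) {
        return false;
    }
    *out = mb->msg;
    atomic_store_explicit(&mb->full, false, memory_order_release);
    return true;
}
```

Each team can then test its side against a stub of the other, since the only shared knowledge is this header.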

Security

The security of network-connected devices is a hot topic at the moment. A secure boot, cryptographic libraries, secure communications or other secure features could execute on one core; the application on the second. This provides a hardware separation between insecure and secure domains.

Functional Safety

Another hot topic of the day is Functional Safety. The origins of the term functional safety go back to the 1990s when it became obvious that some sort of safety standard needed to be applied to the design and manufacture of machines featuring computer control or ‘automation’. A blizzard of new or updated standards followed with two of special importance today: IEC 61508 for industrial systems, including those related to Industry 4.0, and ISO 26262, which is derived from it, for the automotive industry. The latter is receiving a lot of attention, given the increasing amount of advanced driver-assistance systems (ADAS) electronics in modern vehicles, and perhaps in the future, the arrival of fully-autonomous vehicles. The problem is that microelectronic devices don’t seem to be as reliable as they once did:

- The Restriction of Hazardous Substances Directive 2002/95/EC (RoHS 1) came into effect in 2006 and restricted the use of lead in electronic components. While the safety reasons for this are accepted, an unfortunate side effect was a return to the days when ‘Whiskers and Dendrites’ grew off metal components over time until short circuits occurred, often out of sight within the transistor enclosure. Early devices had short lifetimes as a result, until it was discovered that a lead coating prevented the phenomenon. Now, in spite of new surface treatments, the problem may be back. RoHS has an extensive list of exemptions for medical and Space equipment, but that still leaves the vast industrial and commercial market.

- In an attempt to keep up with Moore’s Law, chip manufacturers have been cramming more and more elements onto a single die. Basic transistor elements have shrunk in size over the years to enable this to be done; the snag is that stray sub-atomic particles from trace radioactive contamination of the chips themselves and even ‘cosmic rays’ from Space can play havoc with, say, the few electrons in a memory cell. To detect these ‘Single-Event Upsets’ or even permanent damage, some form of redundancy is required. That’s where extra cores come in, each running the same code and comparing results with the other.
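The redundancy principle in the last bullet can be imitated in software, as the sketch below shows. It is only an analogy with hypothetical names: a true lockstep pair is compared by dedicated hardware on every clock cycle, whereas this compares two results after the event. The second stand-in function deliberately flips one bit to simulate a Single-Event Upset.

```c
#include <stdbool.h>
#include <stdint.h>

typedef int32_t (*calc_fn)(int32_t);

/*
 * Run the same calculation on two channels and accept the answer only if
 * both agree. Returns false on a mismatch, leaving *result untouched so
 * that the caller can retry or move to a safe state.
 */
static bool redundant_calc(calc_fn primary, calc_fn checker,
                           int32_t input, int32_t *result)
{
    int32_t a = primary(input);
    int32_t b = checker(input);
    if (a != b) {
        return false;
    }
    *result = a;
    return true;
}

/* Stand-in calculation: scale a sensor reading by 3.5. */
static int32_t scale_reading(int32_t x)
{
    return x * 7 / 2;
}

/* The same calculation with a simulated single-bit upset in the result. */
static int32_t scale_reading_upset(int32_t x)
{
    return scale_reading(x) ^ (1 << 4);
}
```

Note that a comparison like this detects a fault but cannot say which channel was wrong; that takes a third channel and a vote, or a restart.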

Unless your project merits the use of leaded products, there’s not much you can do about the whisker problem, except to mitigate the effects with redundant hardware. You could make some kind of self-checking system around a basic dual-core processor such as the Raspberry Pi RP2040, but a lot more hardware is required than just the extra core. Fortunately, chip manufacturers have come up with multi-core microcontrollers designed for the high-reliability market. Two examples have been around for years: the Texas Instruments Hercules TMS570 and the Infineon TriCore Aurix ranges.

The TMS570 features two Cortex-R5F cores with extensive safety-related hardware and Error Correcting Code (ECC) memory. The two cores are hardwired to run the same code with their clocks in offset lockstep, meaning that the checking core is at least one clock pulse behind the main core, so that a common transient upset can’t affect them in the same way and hence defeat the checking system. A TI LaunchPad development board for this chip is available (205-6391).

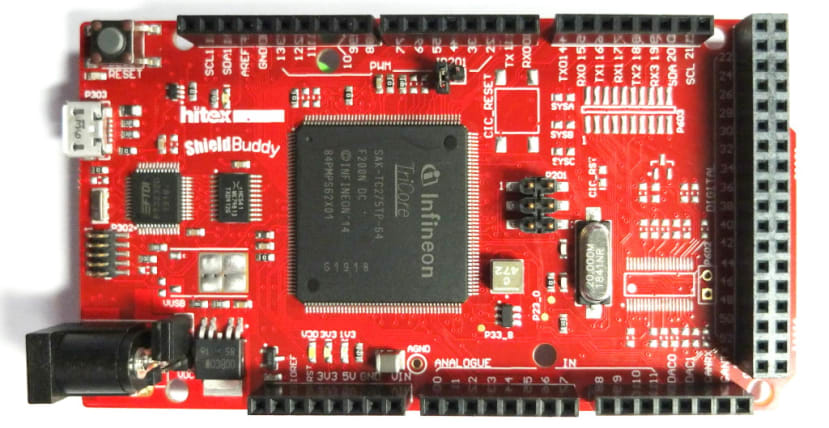

The Infineon Aurix device is designed for those who want three cores each running different code, with two of them having redundant checking. A popular development board for the TC275 is the Arduino Mega-format Hitex ShieldBuddy (124-5257). The datasheets don’t make much of it, but a glance at the chip’s block diagram reveals the presence of ‘shadow’ processors attached to two of the main cores.

Finally

In the coming months, I’m looking forward to porting FORTHdsPIC across to the dsPIC33CH Curiosity board and setting up the faster slave core to handle complex maths routines such as the FFT.

If you're stuck for something to do, follow my posts on Twitter. I link to interesting articles on new electronics and related technologies, retweeting posts I spot about robots, space exploration and other issues. To see my back catalogue of recent DesignSpark blog posts type “billsblog” into the Search box above.