Designing a Raspberry Pi Based Intelligent Ultrasonic Bat Detector App

Some people say that necessity is the mother of invention, but this omits other powerful drivers such as curiosity and the desire to learn new stuff. In the case of this project, the need for a bat survey on an old derelict building was one such driver, combined with a previous interest in bat detector technology and fascination with the ability of bats themselves to 'see' in the dark using echolocation.

UK law, quite rightly, protects nesting birds and roosting bats and so, for compliance, the local council insists on a professional survey to ensure that no protected animals are in residence. However, bats are incredibly hard to spot and can live in secret cavities of which this building has plenty! This is where the technology makes a difference.

As the story continues, and in a bid to save a bit of cash, I phoned up an ecologist friend whom I had previously helped with bat surveys and proposed a 'work swap' where I would help set up a cabin in his garden and he would do a bat survey on the cottage. Then, bad news: he phoned me to say that his main detector was broken. Could I fix it? No success there, so, determined to proceed, I went ahead and bought a fairly expensive Dodotronic USB ultrasonic mic for us to use. Thankfully, this proved to give excellent results!

Anyway, we got the survey done and, luckily for us, there were no bats roosting. But what to do with the mic? Sell it on eBay? It produced such amazing results that I chose to keep it and use it for this machine learning project instead. Here's one of the spectrograms that it is capable of facilitating:

Shown above is the acoustic signature of an echo-locating brown long-eared bat. The .wav file was initially recorded at 384,000 samples per second and processed with Audacity software. Can anybody spot which part of the data the bat uses for navigation?

Although auto-classification of bats had been done before, I thought it would be fun to have a go myself. After a few false starts and some communication on a particularly friendly Facebook group, Bat Call Sound Analysis Workshop, I settled on a solution using Random Forest and a set of feature extraction algorithms from Bioacoustics. After following the Bioacoustics tutorial I managed to get some basic training and deployment scripts up and running in the R language, despite never having used it before. I did, however, have to go back more recently and work out how to use it properly to create .csv files for turning into graphs!

Finding data for the classifier was not as easy as we might expect. In theory, there should exist a number of 'citizen science' databases crammed full of audio recordings of the bats of the UK and northern Europe, but, for some reason, they've all been made inaccessible to the general public. Fortunately, I found 6 species in my back yard and, with the help of a few other enthusiasts, I cobbled together a data-set of 9 of the 17 species found in the UK for testing. I also added recordings of rattling my own house keys to help test the system when no bats were available.

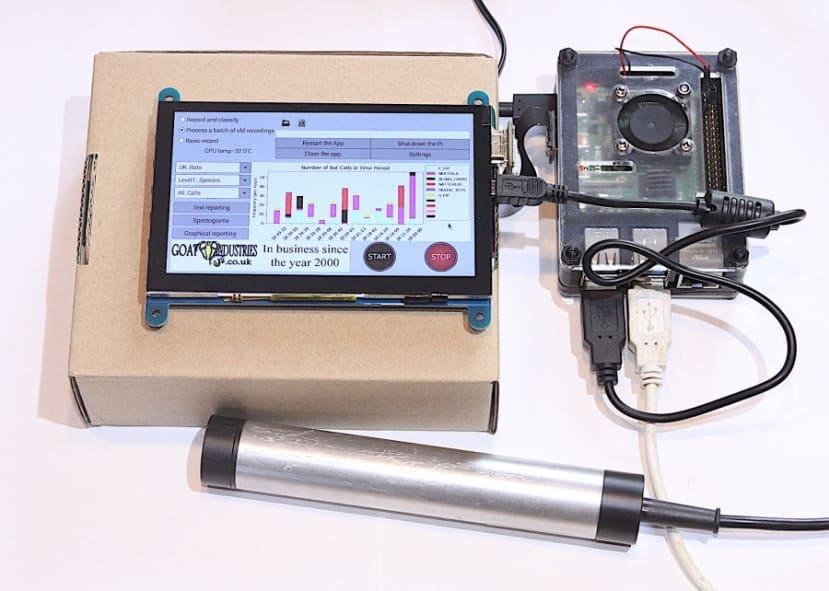

Spurred on by some good initial results, I progressed to creating a device that would be based on the Raspberry Pi 4. I did try the Pi 3, but it was fairly useless due to lack of RAM, so the Pi 4 it is:

The image above shows the Pi 4 Model B 4 GB RAM (182-2096) mounted in a case including a fan with an 800 x 480 touch screen. The metal tube is the UltraMic 384. To run off a battery, it would need something like an X750 UPS shield to monitor the battery level and prevent a hard shutdown and subsequent corruption of the SD card.

Creating the GUI with Gtk 3:

Gtk is one of those pieces of software that lurk in the back of our computers, and in this case, it's the one responsible for most of the windows and sophisticated buttons that we see in most of the applications that we love to use. There are quite a few options on how to use it – different historical versions and many different languages. My eventual choice was based on choosing the latest version, but not 'still in development', and finding at least one decent tutorial that gave some nice, usable, examples. I spent some time running through each example from This Tutorial in a Jupyter Notebook running Python 3... and most of them worked! The key to success was to experiment extensively with using horizontal and vertical panes and grids and other 'boxes' in which the widgets (buttons etc.) could be arranged. The results are very satisfying.

So how does the system work?

Firstly, assuming we're actually going to record some audio, the ultrasonic mic captures the audio as PCM data using an STM M0 processor and streams it to the Pi via USB. On the Pi side, the audio is received through the ALSA driver using the arecord command in a Bash script:

arecord -f S16 -r 384000 -d ${chunk_time} -c 1 --device=plughw:r0,0 /home/pi/Desktop/deploy_classifier/temp/new_${iter}.wav &

wait

The important details in the above are the '&' sign at the end of the first line and the 'wait' below it. The '&' runs the recording in the background so that, for example, classification and recording audio can run at the same time on different cores of the CPU. The wait command creates a bit of hierarchy: it holds the script until the current recording has finished, preventing the other bits of code from starting too early. In this way, batches of code run in the correct sequence. Some of the code, notably the GUI itself, is asynchronous, in that it polls various text and CSV files for data whenever it needs to.
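The same '&' and 'wait' pattern can be sketched in Python with the standard subprocess module. This is only an illustration, not the app's actual Bash script: the two commands are stand-ins that simply sleep, and the ordering (classify while the recorder runs, then wait) is an assumption about how the steps overlap.

```python
import subprocess
import sys
import time


def run_cycle(record_cmd, classify_cmd):
    """Record in the background, do other work meanwhile, then wait."""
    recorder = subprocess.Popen(record_cmd)    # like 'arecord ... &'
    subprocess.run(classify_cmd, check=True)   # runs while the recorder is busy
    return recorder.wait()                     # like 'wait': block until recording ends


# Stand-in commands (hypothetical): each just sleeps for half a second.
# On the Pi these would be the arecord call and the classification step.
record_cmd = [sys.executable, "-c", "import time; time.sleep(0.5)"]
classify_cmd = [sys.executable, "-c", "import time; time.sleep(0.5)"]

start = time.monotonic()
exit_code = run_cycle(record_cmd, classify_cmd)
elapsed = time.monotonic() - start
print(f"recorder exit code {exit_code}, cycle took {elapsed:.2f} s")
```

Because the two stand-ins overlap, the cycle should take roughly as long as the slower of the two rather than the sum of both.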

Feature extraction and classification are called right after the wait command and are written in the language R. Firstly, the audio data is analysed to look for certain useful artefacts or features. For bats, we are particularly interested in short lines and curves which can be visualised in spectrograms. Certain bats will, for example, gently start echolocation at 100 kHz and finish with a bang of amplitude and a short flat spot at 45 kHz. On the spectrogram, it looks like a hockey stick. Next, the features found for a particular chunk of audio are compiled into an array, or data frame, for processing in the Random Forest software, which is also written in R. Random Forest is basically a large array of decision trees which are each fed slightly different versions of the data to help prevent overfitting.
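To make the 'many trees, each fed slightly different data' idea concrete, here is a toy sketch in plain Python. It is not the R randomForest package and not the app's classifier: each 'tree' is a single split on one randomly chosen feature, trained on a bootstrap resample, and the forest votes. The two features (start and end frequency in kHz) and all the numbers and class names are invented for illustration.

```python
import random
from collections import Counter


def majority(labels):
    """Most common label in a non-empty list."""
    return Counter(labels).most_common(1)[0][0]


def train_stump(rows, labels, rng):
    """Fit a one-split tree on one randomly chosen feature."""
    feature = rng.randrange(len(rows[0]))
    best = None  # (errors, threshold, left_label, right_label)
    for threshold in sorted({row[feature] for row in rows}):
        left = [lab for row, lab in zip(rows, labels) if row[feature] <= threshold]
        right = [lab for row, lab in zip(rows, labels) if row[feature] > threshold]
        left_label = majority(left)
        right_label = majority(right) if right else left_label
        errors = (sum(lab != left_label for lab in left)
                  + sum(lab != right_label for lab in right))
        if best is None or errors < best[0]:
            best = (errors, threshold, left_label, right_label)
    return (feature,) + best[1:]


def train_forest(rows, labels, n_trees=25, seed=0):
    """Train each stump on its own bootstrap resample of the data."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]  # sample with replacement
        forest.append(train_stump([rows[i] for i in idx],
                                  [labels[i] for i in idx], rng))
    return forest


def predict_proba(forest, row):
    """Fraction of trees voting for each class."""
    votes = Counter(left if row[f] <= t else right for f, t, left, right in forest)
    return {label: count / len(forest) for label, count in votes.items()}


# Invented toy data: (start frequency kHz, end frequency kHz) per call.
rows = [(100, 45), (98, 46), (102, 44), (55, 20), (57, 22), (53, 21)]
labels = ["species_A"] * 3 + ["species_B"] * 3

forest = train_forest(rows, labels)
probs = predict_proba(forest, (99, 45))
print(probs)
```

The vote fractions play the role of the class probabilities that the real classifier reports for each chunk of audio.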

The classifier then writes a text file detailing the probability results, updates a CSV of the ongoing results, and renames the audio file with the most likely bat species and its associated probability, in case we want to look at it in more detail on a spectrogram:
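The renaming step is done in R in the app, but the idea can be sketched in Python as follows. The naming scheme, the function name label_recording and the class labels are all hypothetical; the real script may format its file names differently.

```python
import tempfile
from pathlib import Path


def label_recording(wav_path, probabilities):
    """Rename a recording so its name carries the top class and its probability."""
    wav_path = Path(wav_path)
    species, prob = max(probabilities.items(), key=lambda item: item[1])
    # Hypothetical naming scheme: <class>_<percent>pc_<original name>
    target = wav_path.with_name(f"{species}_{round(prob * 100)}pc_{wav_path.name}")
    wav_path.rename(target)
    return target


with tempfile.TemporaryDirectory() as tmp:
    wav = Path(tmp) / "new_1.wav"
    wav.write_bytes(b"")  # empty placeholder standing in for a real recording
    renamed = label_recording(wav, {"species_A": 0.82, "species_B": 0.18})
    renamed_name = renamed.name
    renamed_exists = renamed.exists()

print(renamed_name)
```

Keeping the original file name at the end of the new one means recordings still sort in the order they were captured within each class.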

write.table(Final_result, file = "Final_result.txt", sep = "\t", row.names = TRUE, col.names = NA)

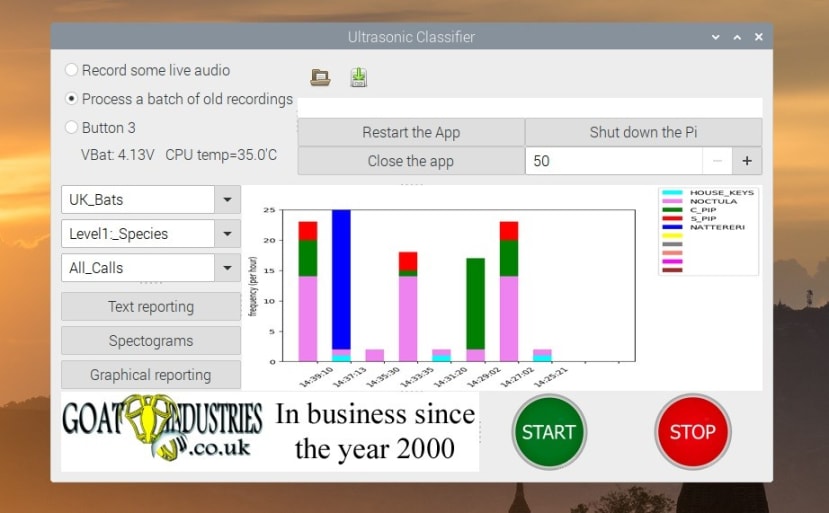

write.table(prevData, file = "From_R_01.csv", sep = ",", row.names = FALSE, col.names = TRUE)

The results are picked up by a variety of Python scripts, depending on which options have been previously chosen in the GUI. If, for example, we wanted to produce a nice bar chart representing the overall results, a piece of code called 'create_barchart.py' will create a .png image using Python's matplotlib.pyplot that the GUI can then import whenever it wants to:

fig.savefig('/home/pi/Desktop/deploy_classifier/images/graphical_results/graph.png', bbox_inches='tight', figsize=(6,4))

Other than the main R, Bash and Python scripts, there are a bunch of 'helper' files that are used to communicate such things as 'stop', 'start' and 'spectrogram'. So hitting the spectrogram button changes the content of one of these files, which is then quickly picked up by one of the parent scripts, 'script_1.sh', to restart the whole GUI with a fancy spectrogram display box. The 'Granddad' file, 'run.py' (as opposed to parents and children), will actually self-destruct if it sees the correct helper file (close_app.txt), permanently closing all scripts running in the app. On clicking the bat icon on the desktop, Granddad then checks that no scripts associated with the app are still running from a previous session before launching its immediate children, e.g. script_1.sh.
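The helper-file mechanism amounts to polling the file system for a signal. A minimal Python sketch of that idea is below. It assumes the signal is simply the presence of the file, which may differ from the app, where some helper files carry their message in their content; the function name wait_for_flag, the polling interval and the timeout are illustrative choices.

```python
import tempfile
import time
from pathlib import Path


def wait_for_flag(flag_path, poll_interval=0.05, timeout=2.0):
    """Poll until flag_path exists; return True if it appears before the timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if flag_path.exists():
            return True
        time.sleep(poll_interval)
    return False


with tempfile.TemporaryDirectory() as tmp:
    flag = Path(tmp) / "close_app.txt"
    seen_before = wait_for_flag(flag, timeout=0.2)  # nothing has asked to close yet
    flag.write_text("close")                        # e.g. written by a GUI button
    seen_after = wait_for_flag(flag, timeout=0.2)

print(seen_before, seen_after)
```

Files are a crude but robust way to pass messages between R, Bash and Python, since every language involved can read and write them without extra libraries.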

Build it yourself!

Lastly, if anybody wants to build this system themselves:

- The Elecrow 800 x 480 touch screen is supplied with a CD ROM with a special version of Buster. Install the .img file to the Pi SD card. The SD card may need to have its main partition extended if it does not fill the whole card.

- Connect up a Pi 4 with 4 GB RAM to an 800 x 480 touchscreen. Don't forget the USB connection!

- Plug the UltraMic 384K into a spare USB port.

- Manually download bzip2-1.0.6 from the SourceForge website. It should now be in the 'Downloads' folder. Maybe check that before continuing?

- To install the main dependencies and install R, RUN THIS SCRIPT in Bash.

- Now download the actual app files .zip file to 'Downloads': https://drive.google.com/open?id=1BMCv_QcOwQFqbjrVZzvCHE0Ke9pMbZlJ

- Extract the file to the desktop:

cd /home/pi/Downloads/
unzip Pi4_Bat_detector.zip -d /home/pi/Desktop/

- Now install the R libraries: open a terminal and run each of the following lines, one by one:

R
install.packages("randomForest")
install.packages("audio")
install.packages("rstudioapi")
install.packages("bioacoustics")

- If trying to view folders does not work properly, use:

sudo apt-get install --reinstall pcmanfm

- There should now be two items on the desktop: an image of a bat and a folder called 'deploy_classifier'. Double click the bat icon to run the app.

- To run in full screen, open GUI.py in a text editor and replace the last six lines with:

win = ButtonWindow()
win.set_position(Gtk.WindowPosition.CENTER)
win.fullscreen()
win.connect("destroy", Gtk.main_quit)
win.show_all()
Gtk.main()

- Updates are available here: GITHUB

Caveat emptor: I am NOT a professional coder. Although my code works very well, it quite often does not conform to layout standards that are taught in universities, etc.