5 +1 things driving IIoT and 4.0: Approaching AI

I’m anything but an expert in AI. True, I’ve read a lot of theoretical articles about technologies, visions and problems in AI. But besides a few experiments with object recognition using OpenCV (the “CV” stands for “computer vision”), I’ve never practically stepped into this ocean of specialised knowledge. So how dare I write an article about it? I dare because I think most of my engineering colleagues out there have the same problem. We feel dazzled by the diversity and quantity of information and tools, resulting in a respectful paralysis. Things look too complex, and the level of knowledge needed to implement AI successfully in our projects seems to far exceed the time available for off- or on-the-job training. I finally found a fantastic tool for beginners and experts alike, and I’d like to share my first impressions.

The platform I’m going to discuss is the Luxonis “DepthAI”. To join me on my journey into this ecosystem, you need to invest $200 in the “DepthAI OAK-D (LUX-D) with Onboard Cameras and Enclosure (USB3C)” hardware platform. It is a stereo camera system with a 4K colour camera and integrated edge computing (Myriad X VPU with 4 TOPS!). European customers may want to buy it from Antratek to avoid customs trouble when importing into the EU (this will cost you €300). But I promise you will enjoy this investment very much. In over 40 years of electronics and computer experience, I’ve rarely been surprised by such an easy-to-follow introduction to a complex technology.

It all started back in July 2020 when they launched their Kickstarter campaign. You should look at the campaign page and be impressed by the features of their “OpenCV AI Kit”. They promised that it would take less than one minute to get from unboxing to running an advanced image classifier. And more than this, it is not only easy to use but also open source: “OAK is a modular, open-source ecosystem composed of MIT-licensed hardware, software, and AI training - that allows you to embed the super-power of spatial AI plus accelerated computer vision functions into your product. OAK provides a single, cohesive solution that would otherwise require cobbling together disparate hardware and software components.”

Today, you can buy the Kickstarter campaign’s result as a robust system (CE and FCC compliant) with a metal case and a standard ¼” camera mount. The system is powered by an external 5 V / 12 W power supply.

There are three built-in cameras: two 720p, 120 Hz mono global-shutter cameras for high-speed 3D perception and one 4K, 60 Hz colour camera with digital zoom for object detection and tracking.

The two mono cameras are mounted 7 cm apart horizontally, which allows the distance of any detected object to be calculated from its horizontal offset (the disparity) between the two images. The astonishing fact is that this tiny system can detect objects and calculate their distance at 30 frames per second!
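To make the distance calculation more tangible, here is a minimal sketch of the textbook stereo relation: depth = focal length × baseline / disparity. The 7 cm baseline comes from the description above; the focal length of 800 pixels and the example disparity are purely illustrative assumptions, not calibration data from the device, and the function name is my own.

```python
def depth_from_disparity(disparity_px: float,
                         focal_length_px: float,
                         baseline_m: float = 0.07) -> float:
    """Estimate the distance (in metres) to a point seen by two parallel cameras.

    disparity_px    -- horizontal offset of the point between left and right image
    focal_length_px -- focal length in pixels (assumed value, device-specific)
    baseline_m      -- distance between the two cameras (7 cm as stated in the text)
    """
    if disparity_px <= 0:
        # Zero disparity would mean the point is infinitely far away.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical numbers: an object shifted by 28 px, focal length 800 px.
    print(f"{depth_from_disparity(28, 800):.2f} m")
```

The relation also shows why accuracy drops with distance: far objects produce only a few pixels of disparity, so a one-pixel error changes the result considerably.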

Lots of promises. But let’s see what reality is like…

When unboxing the system, my first impression was, “That’s pretty straightforward!”: camera system, power supply with cord, USB 3 cable, a cleaning cloth and two cardboard labels. I resisted the temptation to power the system and plug the USB cable into my Win10 PC. Many painful experiences in the past have resulted in an “RTFM mentality”. But when I directed my browser to the page printed on one of the labels, I was amazed that there was a section for muggles like me, saying I should start by powering the system and plugging in the USB connector. So I followed the instructions and, surprise, my system seemed to be utterly content with the plugged-in device! No error messages about unknown drivers, etc. The instructions for beginners in DepthAI are more or less written for Unix-like systems such as the Raspberry Pi, but there was no problem with my Win10 PC. When I entered “python3”, I was asked to allow installation of Python from the Microsoft Store. Setting up a virtual environment on a Windows system is nearly the same. The only difference is that the activation scripts (“.bat” files) are located in a subdirectory “Scripts” rather than “bin”. I also needed to manually give full access rights to the venv directory. Here is what I had to do to prepare the demo:

d:\depthai-main>python3

d:\depthai-main>python3 -m venv myvenv

d:\depthai-main>source myvenv/bin/activate

'source' is not recognized as an internal or external command,

operable program or batch file.

d:\depthai-main>tutorial-env\Scripts\activate.bat

The system cannot find the path specified.

d:\depthai-main>myvenv\bin\activate

The system cannot find the path specified.

d:\depthai-main>myvenv\scripts\activate

(myvenv) d:\depthai-main>pip install -U pip

Traceback (most recent call last):

File "C:\Program Files\WindowsApps\PythonSoftwareFoundation.Python.3.9_3.9.1520.0_x64__qbz5n2kfra8p0\lib\runpy.py", line 197, in _run_module_as_main

return _run_code(code, main_globals, None,

File "C:\Program Files\WindowsApps\PythonSoftwareFoundation.Python.3.9_3.9.1520.0_x64__qbz5n2kfra8p0\lib\runpy.py", line 87, in _run_code

exec(code, run_globals)

File "d:\depthai-main\myvenv\Scripts\pip.exe\__main__.py", line 4, in <module>

ModuleNotFoundError: No module named 'pip'

(myvenv) d:\depthai-main>python3 install_requirements.py

Requirement already satisfied: pip in c:\program files\windowsapps\pythonsoftwarefoundation.python.3.9_3.9.1520.0_x64__qbz5n2kfra8p0\lib\site-packages (21.1.1)

Collecting pip

Using cached pip-21.1.2-py3-none-any.whl (1.5 MB)

Installing collected packages: pip

WARNING: The scripts pip.exe, pip3.9.exe and pip3.exe are installed in 'C:\Users\volker\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.9_qbz5n2kfra8p0\LocalCache\local-packages\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

Successfully installed pip-21.1.2

WARNING: You are using pip version 21.1.1; however, version 21.1.2 is available.

You should consider upgrading via the 'C:\Users\volker\AppData\Local\Microsoft\WindowsApps\PythonSoftwareFoundation.Python.3.9_qbz5n2kfra8p0\python.exe -m pip install --upgrade pip' command.

WARNING: Skipping depthai as it is not installed.

Collecting opencv-python==4.5.1.48

Downloading opencv_python-4.5.1.48-cp39-cp39-win_amd64.whl (34.9 MB)

|████████████████████████████████| 34.9 MB 6.4 MB/s

Collecting opencv-contrib-python==4.5.1.48

Downloading opencv_contrib_python-4.5.1.48-cp39-cp39-win_amd64.whl (41.2 MB)

|████████████████████████████████| 41.2 MB 6.4 MB/s

Collecting requests==2.24.0

Downloading requests-2.24.0-py2.py3-none-any.whl (61 kB)

|████████████████████████████████| 61 kB 4.5 MB/s

Collecting argcomplete==1.12.1

Downloading argcomplete-1.12.1-py2.py3-none-any.whl (38 kB)

Collecting pyyaml==5.4

Downloading PyYAML-5.4-cp39-cp39-win_amd64.whl (213 kB)

|████████████████████████████████| 213 kB ...

Collecting blobconverter==0.0.10

Downloading blobconverter-0.0.10-py3-none-any.whl (8.4 kB)

Collecting depthai==2.5.0.0

Downloading depthai-2.5.0.0-cp39-cp39-win_amd64.whl (5.2 MB)

|████████████████████████████████| 5.2 MB ...

Collecting numpy>=1.19.3

Downloading numpy-1.20.3-cp39-cp39-win_amd64.whl (13.7 MB)

|████████████████████████████████| 13.7 MB ...

Collecting certifi>=2017.4.17

Downloading certifi-2021.5.30-py2.py3-none-any.whl (145 kB)

|████████████████████████████████| 145 kB 6.4 MB/s

Collecting chardet<4,>=3.0.2

Downloading chardet-3.0.4-py2.py3-none-any.whl (133 kB)

|████████████████████████████████| 133 kB ...

Collecting idna<3,>=2.5

Downloading idna-2.10-py2.py3-none-any.whl (58 kB)

|████████████████████████████████| 58 kB 1.4 MB/s

Collecting urllib3!=1.25.0,!=1.25.1,<1.26,>=1.21.1

Downloading urllib3-1.25.11-py2.py3-none-any.whl (127 kB)

|████████████████████████████████| 127 kB ...

Collecting boto3

Downloading boto3-1.17.93-py2.py3-none-any.whl (131 kB)

|████████████████████████████████| 131 kB ...

Collecting jmespath<1.0.0,>=0.7.1

Downloading jmespath-0.10.0-py2.py3-none-any.whl (24 kB)

Collecting botocore<1.21.0,>=1.20.93

Downloading botocore-1.20.93-py2.py3-none-any.whl (7.6 MB)

|████████████████████████████████| 7.6 MB 6.8 MB/s

Collecting s3transfer<0.5.0,>=0.4.0

Downloading s3transfer-0.4.2-py2.py3-none-any.whl (79 kB)

|████████████████████████████████| 79 kB 1.6 MB/s

Collecting python-dateutil<3.0.0,>=2.1

Downloading python_dateutil-2.8.1-py2.py3-none-any.whl (227 kB)

|████████████████████████████████| 227 kB 6.4 MB/s

Collecting six>=1.5

Downloading six-1.16.0-py2.py3-none-any.whl (11 kB)

Installing collected packages: six, urllib3, python-dateutil, jmespath, botocore, s3transfer, idna, chardet, certifi, requests, pyyaml, numpy, boto3, opencv-python, opencv-contrib-python, depthai, blobconverter, argcomplete

WARNING: The script chardetect.exe is installed in 'C:\Users\volker\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.9_qbz5n2kfra8p0\LocalCache\local-packages\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script f2py.exe is installed in 'C:\Users\volker\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.9_qbz5n2kfra8p0\LocalCache\local-packages\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

WARNING: The script blobconverter.exe is installed in 'C:\Users\volker\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.9_qbz5n2kfra8p0\LocalCache\local-packages\Python39\Scripts' which is not on PATH.

Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location.

Successfully installed argcomplete-1.12.1 blobconverter-0.0.10 boto3-1.17.93 botocore-1.20.93 certifi-2021.5.30 chardet-3.0.4 depthai-2.5.0.0 idna-2.10 jmespath-0.10.0 numpy-1.20.3 opencv-contrib-python-4.5.1.48 opencv-python-4.5.1.48 python-dateutil-2.8.1 pyyaml-5.4 requests-2.24.0 s3transfer-0.4.2 six-1.16.0 urllib3-1.25.11

Ignoring open3d: markers 'platform_machine != "armv7l" and python_version < "3.9"' don't match your environment

(myvenv) d:\depthai-main>python3 depthai_demo.py

Using depthai module from: C:\Users\volker\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.9_qbz5n2kfra8p0\LocalCache\local-packages\Python39\site-packages\depthai.cp39-win_amd64.pyd

Depthai version installed: 2.5.0.0

Downloading C:\Users\volker\.cache\blobconverter\mobilenet-ssd_openvino_2021.3_13shave.blob...

[==================================================]

Done

Finally, I started the demo Python script. It opens two windows: one shows the RGB picture from the middle camera, overlaid with frames around detected objects together with their 3D position information. The other shows the spatial detection of objects with their colour-coded depth. Wow! I had integrated the system into my PC environment in less than 5 minutes!
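One detail in the session log above is worth a second look: the demo reports that it loads depthai from the per-user package directory of the Store Python, which suggests that “python3” resolved to the system interpreter rather than the one inside “myvenv”. The following sketch, with function names of my own choosing, shows where the activation script lives on each platform and how a script can check whether it is really running inside a virtual environment.

```python
import sys
from pathlib import PurePosixPath, PureWindowsPath


def activate_script(venv_dir: str, windows: bool):
    """Return the location of the activation script inside a venv directory."""
    if windows:
        # Windows venvs keep their scripts in "Scripts"; the file is run directly.
        return PureWindowsPath(venv_dir) / "Scripts" / "activate.bat"
    # Unix-like venvs use "bin"; the file is meant to be sourced by the shell.
    return PurePosixPath(venv_dir) / "bin" / "activate"


def in_virtualenv() -> bool:
    """True when the running interpreter belongs to a virtual environment."""
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print(activate_script("myvenv", windows=True))
    print(activate_script("myvenv", windows=False))
    print("inside a venv:", in_virtualenv(), "using", sys.executable)
```

If the check reports that no venv is active, calling “python” instead of “python3” after activation may be worth a try on Windows, because a venv created there typically provides only “python.exe”.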

But how are the results? The demo script downloads a model, a MobileNetv2 SSD object detector trained on the PASCAL VOC 2007 classes, which are:

- Person: person

- Animal: bird, cat, cow, dog, horse, sheep

- Vehicle: aeroplane, bicycle, boat, bus, car, motorbike, train

- Indoor: bottle, chair, dining table, potted plant, sofa, TV/monitor
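A detector of this kind typically reports each object as a numeric class index, so application code needs a label table. The sketch below assumes the ordering commonly used with MobileNet-SSD models trained on VOC (background at index 0, then the 20 classes alphabetically); please verify it against the model you actually load. The grouping follows the list above, and the helper name is my own.

```python
# Assumed label order: "background" first, then the 20 VOC classes alphabetically.
VOC_LABELS = [
    "background", "aeroplane", "bicycle", "bird", "boat", "bottle", "bus",
    "car", "cat", "chair", "cow", "diningtable", "dog", "horse", "motorbike",
    "person", "pottedplant", "sheep", "sofa", "train", "tvmonitor",
]

# Grouping of the classes as listed in the article.
VOC_GROUPS = {
    "Person": ["person"],
    "Animal": ["bird", "cat", "cow", "dog", "horse", "sheep"],
    "Vehicle": ["aeroplane", "bicycle", "boat", "bus", "car", "motorbike", "train"],
    "Indoor": ["bottle", "chair", "diningtable", "pottedplant", "sofa", "tvmonitor"],
}

# Reverse lookup: label -> group name.
GROUP_OF = {label: group for group, labels in VOC_GROUPS.items() for label in labels}


def describe(class_index: int) -> tuple:
    """Translate a numeric class index into (label, group)."""
    label = VOC_LABELS[class_index]
    return label, GROUP_OF.get(label, "Background")


if __name__ == "__main__":
    print(describe(5))
```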

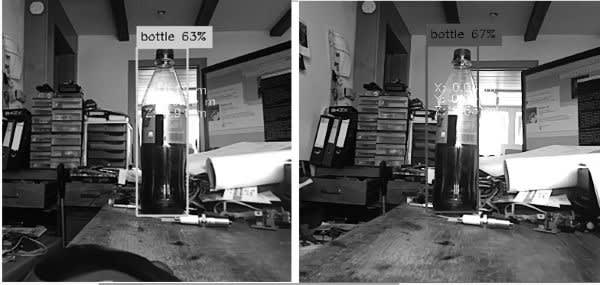

The system was rapid and precise when detecting a bottle standing in the midst of a horrible mess in my workshop. The following pictures give you an impression of what the left and right mono cameras see:

You may notice the horizontal offset of the bottle between the two images, caused by the different camera positions.

I then started playing around with other objects and could even fool the object detection by using a puppet and a teddy (see title picture).

This whole concept is based on open source. You can download tons of models for your DepthAI system free of charge, covering thousands of objects. Of course, you can also use different tools to train a model on your own objects or write your own code that reacts to object detection.
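What “code reacting to object detection” could look like is sketched below. This is deliberately not the DepthAI API: the `SpatialDetection` class, its fields and the millimetre units are hypothetical stand-ins for whatever your pipeline delivers, and the sample values are invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class SpatialDetection:
    """Hypothetical container for one detected object and its 3D position."""
    label: str
    confidence: float
    x_mm: float
    y_mm: float
    z_mm: float

    @property
    def distance_m(self) -> float:
        # Straight-line distance from the camera, converted to metres.
        return math.sqrt(self.x_mm**2 + self.y_mm**2 + self.z_mm**2) / 1000.0


def nearest(detections, label, min_confidence=0.5):
    """Return the closest sufficiently confident detection of a label, or None."""
    candidates = [d for d in detections
                  if d.label == label and d.confidence >= min_confidence]
    return min(candidates, key=lambda d: d.distance_m, default=None)


if __name__ == "__main__":
    frame = [
        SpatialDetection("bottle", 0.91, 300, 400, 0),
        SpatialDetection("bottle", 0.88, 0, 0, 2000),
        SpatialDetection("chair", 0.75, 100, 0, 900),
    ]
    target = nearest(frame, "bottle")
    if target is not None and target.distance_m < 1.0:
        print(f"bottle within reach at {target.distance_m:.2f} m")
```

The reaction itself (here just a print) is where your application logic would go, for example stopping a conveyor or steering a gripper.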

Taking your first steps into AI and object detection can be as easy as this. So get de-paralysed and dare to start! Once you have decided how deeply you want to understand the underlying technology, you can choose your way: either you integrate existing components into your 4.0 projects, or you develop software or hardware based on the open-source components.

But the most crucial step is to develop visions of how to benefit from this technology. The Luxonis web pages give real-life examples to inspire your imagination. I was amazed by this idea:

When trying to imagine industrial applications, I can see all kinds of robotic applications: Classical automation (e.g. 3D position detection and picking) and modern robot- or drone-based agriculture (correct and optimal positioning of cutting tools, detecting hidden animals at risk, etc.).

I’m convinced that the engineering community simply needs to play with such powerful tools to create innovative solutions. So what are you waiting for?

If you missed the other parts of 5 +1 things driving IIoT and 4.0: Approaching AI, here are the links: